- Wed 06 May 2026

- Linux

- #ansible, #execution-environments, #ansible-builder, #ansible-navigator, #containers, #podman, #automation, #rhel

Table of Contents

- What an Execution Environment Actually Contains

- Why Containerize Ansible at All

- The Pieces

- A Worked Example: An EE for Homelab Automation

- ansible-navigator: Running Plays Against the EE

- CI: Pinning the Image, Not the Tool

- Updating an EE

- Trade-offs and When It’s Overkill

- Summary

- Common First-Time Pitfalls

- References

If you’ve run Ansible for any non-trivial length of time, you’ve hit the control-node problem. A laptop accumulates a ~/.ansible/collections/ tree, a venv with a dozen Python libraries pinned to whatever pip install gave you that afternoon, and a handful of distro packages (sshpass, git, sometimes kerberos headers) that you only remember are there when a teammate clones the repo and the same playbook fails for them. CI papers over part of that with a fresh container per job, but only as long as you keep the install steps in lock-step with what the laptops are running, which is where it usually falls apart.

Execution Environments are the answer the Ansible project converged on for that whole class of problem. An Execution Environment (EE) is a container image that bundles ansible-core, ansible-runner, your collections, your Python dependencies, and any system packages you need into a single artifact with a tag you can pin. ansible-builder is the tool that produces it from a declarative definition; ansible-navigator is what you run instead of ansible-playbook so the playbook executes inside that image. The same image is what AAP and AWX use natively, so the “works on my laptop” problem and the “works in dev but not in production” problem collapse into the same problem and get solved once.

This article is the practical walkthrough I’d give a teammate who’s just been told “we’re moving to EEs”. What they are, why you’d want one, how the two tools fit together, and the gotchas that aren’t obvious from the docs.

What an Execution Environment Actually Contains

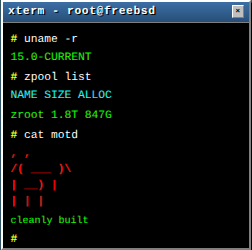

Strip the marketing away and an EE is a fairly thin OCI image. Looking inside one of mine:

    /usr/bin/ansible-playbook            # ansible-core CLI (rarely called directly in EE workflows)
    /usr/bin/ansible-runner              # ansible-runner, the process supervisor
    /usr/share/ansible/collections/      # collections installed at build time
    /usr/lib/python3.11/site-packages    # Python deps (requests, kubernetes, etc.)
    /usr/bin/git, /usr/bin/sshpass       # system packages

That’s it. There’s no daemon, no Ansible “engine” beyond the standard tooling, and no special runtime. When ansible-navigator invokes the image, it bind-mounts your project directory and your inventory in, runs ansible-runner inside the container, streams stdout back, and exits. The image is the artifact; the tools around it are scaffolding.

The Python version is part of the contract too. If your collection only works on 3.11, the EE is where you lock that in, not a comment in the README that hopes everyone is paying attention.

Two things follow from that:

- You own the image. Whatever your playbooks need (a specific kubernetes Python client version, the community.crypto collection at a specific tag, openssl for ad-hoc certificate generation in a play) gets baked in once and shipped as a unit.

- The image is the contract. Pin the image tag in CI, in AAP, and in your local ansible-navigator.yml and you’ve eliminated most of the version-drift surface area between environments.
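
To see what a given image actually ships, rather than what the definition file says it should, you can ask the image itself. A minimal sketch, assuming podman on the host and an image you can pull; the helper name ee_contents is made up for illustration:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print what an EE image actually contains.
# Assumes podman is installed and the image is pullable.
ee_contents() {
  local image="$1"
  # ansible-core version, plus the Python interpreter it runs under
  podman run --rm "$image" ansible --version
  # Collections that were baked in at build time
  podman run --rm "$image" ansible-galaxy collection list
}

# Usage (not run here):
#   ee_contents git.linuxserver.pro/chris/ansible-ee-homelab:2026-05-06
```

Running it against two tags and diffing the output is a cheap way to see what changed between builds.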

Why Containerize Ansible at All

A reasonable objection: “I already use a venv and a requirements.txt, why go further?” A venv solves Python drift. It does nothing for system packages, and only partially solves collection drift. An EE is the first point where all three are versioned together. Three reasons that show up in practice.

Collections drag in Python. A modern Ansible playbook isn’t just YAML calling SSH. The kubernetes.core collection wants kubernetes, PyYAML, and openshift. The community.crypto collection wants cryptography at a version that does or doesn’t have a particular API. The containers.podman collection (covered in the Mastodon greeter article) wants requests. Multiply that across a real fleet’s worth of collections and “I’ll just pip install what I need” becomes a coordination problem. Bake it into an image and the coordination is a Git diff.

System packages exist. community.crypto shells out to openssl for some operations. Anything touching IPMI wants ipmitool. community.general.krb_ticket wants the MIT Kerberos client tools (kinit and friends). Putting those into a venv is impossible; putting them into an EE is one line in a build manifest.

AAP/AWX speak EEs natively. If you ship work to a controller, the controller already runs your playbooks inside an EE; the only question is whose. Either you produce it (and your laptop runs the same image you deploy) or Red Hat’s default does (and your laptop drifts from production). The first option is strictly better and not measurably more work once you’ve done it once.

The flip side, which I’ll come back to under trade-offs: an EE adds a container start to every run, which costs on the order of hundreds of milliseconds. For interactive ad-hoc work it’s noticeable. For anything reviewed and merged, it’s free.

The Pieces

Three tools, each with a specific job:

- ansible-builder consumes an execution-environment.yml definition and produces a container image. It’s a Python package; on the host it relies on podman to do the actual building.

- ansible-navigator is the local replacement for ansible-playbook. It runs your playbook inside a chosen EE image, with either a TUI or plain stdout. It also wraps ansible-doc, ansible-config, and a few inspection commands so you can poke at collections inside the image without dropping into a shell.

- ansible-runner lives inside the EE image and is the process that actually drives a play. You rarely interact with it directly; ansible-navigator calls it for you, and AAP/AWX do the same on the controller side.

A practical mental model: ansible-builder is podman build for Ansible (with a generated Containerfile you don’t have to maintain yourself), ansible-navigator is podman run for Ansible, and ansible-runner is the entrypoint inside the container that does the work.
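
The podman build half of that analogy is fairly literal: ansible-builder create writes the build context without building anything, and the result can be handed to podman by hand. A sketch, assuming the default context/ directory and a throwaway local tag; ee_build_by_hand is a made-up name:

```shell
#!/usr/bin/env bash
# Hypothetical two-step build: generate the context, then build it manually.
# Assumes ansible-builder and podman are installed and that
# execution-environment.yml sits in the current directory.
ee_build_by_hand() {
  local tag="$1"
  # Step 1: write context/Containerfile and its supporting files, no build yet
  ansible-builder create --file execution-environment.yml --context context &&
    # Step 2: build the generated context like any other Containerfile
    podman build -f context/Containerfile -t "$tag" context
}

# Usage (not run here):
#   ee_build_by_hand localhost/ansible-ee-homelab:dev
```

Reading the generated context/Containerfile once between the two steps is the quickest way to see what the tool does on your behalf.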

A Worked Example: An EE for Homelab Automation

To make this concrete, here’s the EE I use for the small fleet that runs the mastogreet bot, the Quadlet-managed Forgejo from the original Quadlet article, and a handful of other RHEL boxes. It’s not large; that’s the point.

The directory layout:

    ansible-ee-homelab/
    ├── execution-environment.yml
    ├── requirements.yml    # Galaxy collections
    ├── requirements.txt    # Python packages
    └── bindep.txt          # system packages

execution-environment.yml

    ---
    version: 3
    images:
      base_image:
        name: quay.io/centos/centos:stream9
    dependencies:
      # Stream 9's default python3 is 3.9; ansible-core 2.17 needs 3.10 or newer
      # to run, so install and select 3.11 explicitly.
      python_interpreter:
        package_system: python3.11
        python_path: /usr/bin/python3.11
      ansible_core:
        package_pip: ansible-core>=2.17,<2.18
      ansible_runner:
        package_pip: ansible-runner
      galaxy: requirements.yml
      python: requirements.txt
      system: bindep.txt
    additional_build_steps:
      append_final:
        - LABEL org.opencontainers.image.source="https://git.linuxserver.pro/chris/ansible-ee-homelab"
        - LABEL org.opencontainers.image.description="Homelab Ansible EE: containers.podman, community.general, ansible.posix"

A few things worth pointing out:

- version: 3 is the current schema (introduced with ansible-builder 3.x). v1 is gone, v2 still works but lacks the per-step granularity v3 gives you. New EEs should always be v3.

- base_image. CentOS Stream 9 here because it tracks the same RHEL minor that my hosts run, which keeps the system-package versions inside the EE close to what’s actually deployed. Red Hat’s registry.redhat.io/ansible-automation-platform-25/ee-minimal-rhel9 is the supported equivalent if you have a subscription; the Ansible community publishes quay.io/ansible/community-ee-minimal and community-ee-base as smaller starting points if you want a curated base. All three work. I use the CentOS Stream image because the Stream-better-than-old-CentOS reasoning applies here too: a stable RHEL-equivalent base without a subscription requirement.

- ansible_core pinned to a minor range. >=2.17,<2.18 means I get patch updates within the 2.17 line but not surprise behaviour changes from the next minor release. Pin tighter (==2.17.4) if you have AAP version constraints to match.

- additional_build_steps is the escape hatch. Anything you’d put into a Containerfile (LABEL, RUN, ENV) goes here, scoped by stage (prepend_base, append_base, prepend_galaxy, append_galaxy, prepend_builder, append_builder, prepend_final, append_final). The v3 schema’s stage granularity is the main reason to be on it.

requirements.yml

    ---
    collections:
      - name: containers.podman
        version: ">=1.16.0,<2.0.0"
      - name: community.general
        version: ">=10.0.0"
      - name: ansible.posix
        version: ">=1.6.0"
      - name: community.crypto
        version: ">=2.20.0"

Standard Galaxy requirements. Pinning the major helps; pinning the patch is overkill unless you’re chasing a specific bug.

requirements.txt

    requests>=2.32
    cryptography>=43
    PyYAML>=6.0

The Python deps the collections actually use. containers.podman calls out to requests for some operations, community.crypto wants a recent cryptography, and PyYAML is so universally needed that listing it explicitly is a form of documentation.

bindep.txt

    git [platform:rpm]
    openssh-clients [platform:rpm]
    sshpass [platform:rpm]
    openssl [platform:rpm]

bindep (a small dependency specification format Ansible uses for system packages) is what ansible-builder consumes here, with platform tags so the same file works for apt, dnf, apk, etc. A homelab EE almost always wants git (for ansible.builtin.git), openssh-clients (for SSH against odd-keyed hosts), sshpass (for the rare case you can’t avoid password auth), and openssl (for community.crypto operations that shell out).
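
The platform tags matter as soon as the same repo has to build on a Debian-family base as well. A small sketch of what that looks like, written to a scratch directory so it doesn’t clobber a real bindep.txt; the Debian package name is the only thing added beyond the file above:

```shell
#!/usr/bin/env bash
# Sketch: one bindep.txt serving both an RPM-based and a Debian-based base image.
scratch="$(mktemp -d)"
cat > "$scratch/bindep.txt" <<'EOF'
# No selector: installed on every platform
git
# The package name differs between distro families
openssh-clients [platform:rpm]
openssh-client [platform:dpkg]
EOF
cat "$scratch/bindep.txt"
```

Only the lines whose selector matches the base image’s platform get installed, so the file can grow a second family without touching the first.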

One more entry that bites people on minimal base images: if your playbooks talk to internal HTTPS endpoints (a Forgejo registry, an internal Vault, anything behind a private CA), add ca-certificates and, if you use a private CA, install the cert during additional_build_steps. The “why does requests fail with a TLS error inside the EE but not on my laptop” debugging session is one of the most common first EE experiences.
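
For the private CA case, the extra keys in execution-environment.yml look roughly like this. It’s a sketch under assumptions: files/internal-ca.crt is a hypothetical path in the EE repo, the anchors directory and update-ca-trust are the RHEL-family convention (so a CentOS Stream or RHEL base), and prepend_final is just one reasonable stage to pick. The fragment is written to a scratch file here rather than into a real definition:

```shell
#!/usr/bin/env bash
# Sketch: keys a private CA would add to execution-environment.yml.
# files/internal-ca.crt is a hypothetical location for the certificate.
scratch="$(mktemp -d)"
cat > "$scratch/ee-private-ca-fragment.yml" <<'EOF'
additional_build_files:
  # Copied into the build context under _build/certs/
  - src: files/internal-ca.crt
    dest: certs
additional_build_steps:
  prepend_final:
    - COPY _build/certs/internal-ca.crt /etc/pki/ca-trust/source/anchors/internal-ca.crt
    - RUN update-ca-trust
EOF
cat "$scratch/ee-private-ca-fragment.yml"
```

In a real definition the prepend_final list goes under the existing additional_build_steps key next to append_final. If Galaxy or pip also need the CA at build time, the same two steps would have to be repeated in the earlier stages.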

Building It

    ansible-builder build \
      --tag git.linuxserver.pro/chris/ansible-ee-homelab:2026-05-06 \
      --container-runtime podman \
      --verbosity 2

ansible-builder materialises a Containerfile in context/, then hands it to podman build. The --verbosity 2 flag echoes each step so you can see exactly what it’s doing; first-time builders should run it at least once to demystify the process.

The first build is slow (pulls the base image, runs Galaxy, pip, dnf). Subsequent builds are fast as long as requirements.yml, requirements.txt, and bindep.txt haven’t changed; the v3 stage layout means only the layers downstream of the change rebuild. In practice that’s the difference between a two-minute rebuild and a twenty-minute one.

After build, push to the registry:

podman push git.linuxserver.pro/chris/ansible-ee-homelab:2026-05-06

Now the image is the artifact. Anyone with credentials to the registry can run playbooks against the same versioned bundle of dependencies.
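
Typing the date into two commands by hand is where tags start to disagree. A minimal wrapper, assuming the registry path used above; ee_release is a made-up function name, and the sketch only prints the tag until you call it:

```shell
#!/usr/bin/env bash
# Sketch: derive the date tag once and reuse it for build and push.
registry_repo="git.linuxserver.pro/chris/ansible-ee-homelab"
image="${registry_repo}:$(date +%F)"   # date +%F prints YYYY-MM-DD

ee_release() {
  # The && means a failed build is never pushed
  ansible-builder build --tag "$image" --container-runtime podman --verbosity 2 &&
    podman push "$image"
}

echo "would build and push: $image"
# Usage (not run here):
#   ee_release
```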

ansible-navigator: Running Plays Against the EE

ansible-navigator is the new front door. It looks superficially like ansible-playbook, but with a couple of important differences. The simplest invocation:

    ansible-navigator run playbook.yml \
      --execution-environment-image git.linuxserver.pro/chris/ansible-ee-homelab:2026-05-06 \
      --pull-policy missing \
      --mode stdout

--mode stdout produces output that looks exactly like ansible-playbook (which is what you want in CI and what most people want locally). Without it, navigator launches its TUI, which is genuinely useful for browsing task results across a multi-host run but a poor fit for terminals being scraped by a tool.

--pull-policy missing pulls the image if it’s not already present locally and otherwise reuses it. The other policies (always, never, tag) cover the obvious cases; missing is the right default for almost everyone.

In practice, you don’t pass these flags every time. ansible-navigator reads ansible-navigator.yml from the project directory:

    ---
    ansible-navigator:
      execution-environment:
        image: git.linuxserver.pro/chris/ansible-ee-homelab:2026-05-06
        pull:
          policy: missing
        container-engine: podman
        container-options:
          - "--net=host"
      mode: stdout
      playbook-artifact:
        enable: false

A few notes on this config:

- container-options: --net=host is sometimes necessary when your inventory uses host-local DNS or you’re SSH’ing to addresses only resolvable on the host’s network namespace. It’s a sledgehammer; if you can avoid it (by feeding /etc/hosts entries or using FQDNs that resolve via public DNS), do.

- playbook-artifact: enable: false. By default ansible-navigator writes a JSON artifact of every run into the project directory. Useful for the TUI’s “replay” feature, noisy in a Git repo. Turn it off unless you’re using replay deliberately.

- mode: stdout as the project default means you don’t have to pass --mode stdout every time. The TUI is one --mode interactive away when you want it.

- SSH agent forwarding. If you rely on ssh-agent for key access (the common case), the agent socket lives on the host and is invisible to the EE container by default. Pass it in via container-options: -v $SSH_AUTH_SOCK:/ssh-agent, then -e SSH_AUTH_SOCK=/ssh-agent. The path inside the container is arbitrary; it just needs to match the environment variable. Without forwarding, the very first task that opens an SSH connection fails with no obviously-related error message.

With that file in place, the invocation collapses to:

ansible-navigator run -i inventory playbook.yml

And the rest of the command-line surface (--limit, --tags, --ask-vault-pass, --check, --diff) works exactly the same as ansible-playbook.

Other Navigator Subcommands Worth Knowing

ansible-navigator also wraps a handful of Ansible’s introspection tools, all of which run inside the EE:

- ansible-navigator collections lists the collections present in the image. This is the fastest way to verify your EE actually got built with what you asked for.

- ansible-navigator doc <module> runs ansible-doc inside the EE. Useful when the module behaviour you care about is the version your EE has, not the version your laptop’s outer Ansible has.

- ansible-navigator config dumps the effective ansible.cfg as the EE sees it. Handy for “why is this variable not being picked up in the controller”.

- ansible-navigator inventory -i inventory parses your inventory inside the EE and shows the merged result. Catches a fair number of “the dynamic inventory plugin needs a Python lib I forgot to add to requirements.txt” issues.

The pattern is consistent: anything you used to invoke as a top-level Ansible command, navigator runs for you inside the chosen image.
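
Two of those subcommands are easy to script in stdout mode. A sketch, assuming an inventory file named inventory in the project directory and using ansible.builtin.git purely as an example module; ee_introspect is a made-up name:

```shell
#!/usr/bin/env bash
# Hypothetical helper: run ansible-doc and ansible-inventory inside a given EE.
# Assumes ansible-navigator is installed and ./inventory exists.
ee_introspect() {
  local image="$1"
  # Module docs for the version the EE ships, not the laptop's
  ansible-navigator doc ansible.builtin.git \
    --execution-environment-image "$image" --mode stdout &&
    # Inventory as parsed inside the EE; --list is passed through to ansible-inventory
    ansible-navigator inventory -i inventory --list \
      --execution-environment-image "$image" --mode stdout
}

# Usage (not run here):
#   ee_introspect git.linuxserver.pro/chris/ansible-ee-homelab:2026-05-06
```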

CI: Pinning the Image, Not the Tool

The CI story is where EEs really pay off. The Forgejo Actions custom OCI image walkthrough covered the general “build once, use in CI” pattern; for Ansible specifically, an EE is the version of that pattern that AAP also understands.

A typical Forgejo Actions step:

    - name: Run Ansible playbook
      uses: https://code.forgejo.org/actions/run@v1
      with:
        image: git.linuxserver.pro/chris/ansible-ee-homelab:2026-05-06
        run: |
          ansible-playbook -i inventory playbook.yml

Note that in CI you can call ansible-playbook directly inside the EE image, since the runner is already executing inside the container. Navigator adds no value here: you’re already “inside” the EE. The indirection it provides locally is exactly what your CI runner is already doing. Navigator’s role is local-developer ergonomics; in CI, the EE image is the runner, and the steps inside it are just plays.

The win is the pinned tag. The same 2026-05-06 image that the developer ran against locally is the one CI runs against, which is the one the controller in AAP runs against if you also use AAP. Three places, one artifact, no drift.
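
“One artifact, no drift” only holds if the tag really is the same string in each place, and that is cheap to check mechanically. A rough sketch that compares the first image: key in two files; the function names and the workflow path in the usage line are assumptions about the repo layout, and the sed expression is deliberately naive (first match wins, no YAML parsing):

```shell
#!/usr/bin/env bash
# Sketch: fail when the local navigator config and the CI workflow pin different images.
pinned_image() {
  # Print the value of the first "image:" key found in the given file
  sed -n 's/^[[:space:]]*image:[[:space:]]*//p' "$1" | head -n 1
}

check_ee_pin() {
  local nav_file="$1" ci_file="$2" nav ci
  nav="$(pinned_image "$nav_file")"
  ci="$(pinned_image "$ci_file")"
  if [ -z "$nav" ] || [ "$nav" != "$ci" ]; then
    echo "EE image drift: navigator='$nav' ci='$ci'" >&2
    return 1
  fi
  echo "EE image pinned consistently: $nav"
}

# Usage (not run here; the workflow path is hypothetical):
#   check_ee_pin ansible-navigator.yml .forgejo/workflows/ansible.yml
```

Run as an early CI step, it turns a forgotten tag bump into a failed job instead of a confusing playbook diff.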

Updating an EE

Updates are a Git change and a rebuild. Bump a collection in requirements.yml, change the date in your image tag, run ansible-builder build, push, and point your ansible-navigator.yml and CI at the new tag in a pull request. Reviewers see the diff (which collections moved, which Python pins changed) and the build is reproducible because the previous tag still exists in the registry. Rolling back is git revert on the tag pointer.

The two patterns I rotate between:

- Date-tagged immutable images (...:2026-05-06). Rebuild on a cadence (monthly, or when something I depend on cuts a release I want), tag with the date, never re-tag. Slightly more friction; significantly more auditability.

- Channel tags (...:stable, ...:edge). Convenient for fast iteration, but you give up “the image you ran against last week is still pullable”. I use channel tags for a personal sandbox EE and date tags for anything reviewed.

For most people, start with date tags. Move to channel tags only if you have a clear reason to.

A subtle reproducibility point worth flagging: if your requirements.txt and requirements.yml aren’t tightly pinned, two rebuilds a week apart from the same Git tree can produce slightly different images. Galaxy and PyPI keep moving even if your repo doesn’t. For the homelab EE I’m fine with the loose pins above, because the date in the image tag captures “what was current that day”. For a regulated environment, pin to exact versions and treat the build as deterministic in the strict sense, not just “repeatable enough”.
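
One way to get from loose pins to exact ones is to ask a known-good image what it actually resolved. A sketch, assuming podman and assuming python3 inside the image is the interpreter the EE’s packages were installed into (worth verifying for your base image); ee_freeze and the output file names are made up:

```shell
#!/usr/bin/env bash
# Hypothetical helper: record the versions a built image resolved.
ee_freeze() {
  local image="$1" outdir="${2:-.}"
  # Exact Python package versions, in pip's name==version format
  podman run --rm "$image" python3 -m pip freeze > "$outdir/python-resolved.txt" &&
    # Collection names and versions as installed in the image
    podman run --rm "$image" ansible-galaxy collection list > "$outdir/collections-resolved.txt"
}

# Usage (not run here):
#   ee_freeze git.linuxserver.pro/chris/ansible-ee-homelab:2026-05-06 .
```

Committing both files next to the definition gives reviewers the resolved versions even when the pins themselves stay loose.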

Trade-offs and When It’s Overkill

The honest list of what an EE costs:

- Build infrastructure. You need somewhere to run ansible-builder, somewhere to push the image, and credentials for both. For one person, this is a laptop and a free Quay account. For a team, it’s a CI job and an internal registry.

- Container start latency. Every run pays a one-shot cost (a few hundred milliseconds) for the EE container to start. Imperceptible on a real playbook; annoying on a one-liner ad-hoc.

- Indirection. When something fails, you’re now debugging a play running inside a container running on a host. ansible-navigator --mode stdout keeps the surface flat for the common case, but the occasional weird interaction (SELinux on a bind mount, an SSH agent socket not visible inside the EE) does cost some debugging time the first time it bites you.

- Discipline. An EE only helps if you actually use it. Letting “I’ll just pip install this collection’s new dep on my laptop and not bump the EE” become a habit gives you the worst of both worlds: an EE that’s notionally pinned but in practice a moving target.

The fastest way to break EEs is bypassing them. Worth keeping front-of-mind, because the temptation to skip a rebuild for “just this one tiny thing” is exactly how the laptop-versus-CI-versus-AAP drift you bought the EE to avoid creeps back in.

When I’d skip an EE:

- Single-person scripting, two playbooks, one host. Just use a venv.

- Throwaway experiments where the friction of a rebuild outweighs the audit value.

- Teams that genuinely don’t have collection sprawl: a couple of ansible.builtin modules, no kubernetes, no crypto, no third-party Galaxy content. The drift surface isn’t there to begin with.

When I’d insist on one:

- Anything reviewed by more than one person. The EE is the “this is what we agreed Ansible looks like” artifact.

- Anything running in CI and on a developer laptop. The image collapses the version surface.

- Anything heading toward AAP/AWX. You’ll need an EE there anyway; producing it yourself rather than inheriting a default is mostly free once the build pipeline exists.

- Anything touching kubernetes.core, community.crypto, containers.podman, or any other collection with a non-trivial Python or system dependency. Those are the cases where “works on my laptop” most reliably bites.

- Air-gapped or regulated environments with restricted outbound access. Pre-building the EE on a build host with internet access and shipping the image inward avoids runtime dependency installation entirely, which is often the only viable path through change-control review.

Summary

Execution Environments are the version of “containerize your toolchain” that the Ansible project already uses internally. The deal is straightforward: write a small declarative manifest, let ansible-builder produce a versioned image, and run plays against it with ansible-navigator locally and the same image in CI and on AAP. In return you get a single artifact whose version is the version of your automation, and the ability to talk about “the Ansible we use” as a thing that exists in a registry rather than as the loose union of whatever everyone’s laptop has installed today.

For a small fleet, the upfront investment is one afternoon. For anything bigger, it’s the cheapest way to stop debugging environment drift.

Common First-Time Pitfalls

A short list of the things that bite first-time EE users, gathered from teammates’ debugging sessions over a few of these rollouts:

- Missing Python deps cause module import errors. A collection’s docs list what it needs; if it isn’t in requirements.txt, the play fails with a Python ImportError that doesn’t always say which collection asked for the library.

- Missing system packages cause runtime failures. A community.crypto task that calls openssl, a Kerberos task that wants kinit. The error often surfaces as a generic non-zero exit code from the underlying binary. Add to bindep.txt and rebuild.

- SSH agent socket not visible inside the EE. Pass it in via container-options (-v $SSH_AUTH_SOCK:/ssh-agent, -e SSH_AUTH_SOCK=/ssh-agent) or fall back to a key-file mount. Without one of those two, the first SSH connection fails with a confusing “permission denied”.

- DNS or routing surprises. Hosts addressable from your laptop but not from the EE’s container network. The pragmatic fix is --net=host in container-options; the cleaner fix is to make the inventory addressable independently of host-local resolution.

- CA certificates missing on minimal base images. Anything pulling from an internal HTTPS endpoint fails at TLS validation. Add ca-certificates to bindep.txt and, for a private CA, drop the cert into place during additional_build_steps.

- File ownership surprises on bind mounts. Files created by the EE container may be owned by a different UID/GID than your host user, depending on the container runtime configuration (rootless Podman with userns mapping is the usual culprit). Usually harmless, occasionally confusing when cleaning up artifacts or trying to read a generated file from your editor.

Each of these is a one-line fix once you know which line. The first time, they’re an evening of “but it works on my laptop”.
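
The first two pitfalls can be caught right after the build instead of mid-play. A sketch of a smoke test, assuming podman and assuming python3 in the image is the EE’s interpreter; the module and binary names mirror the requirements.txt and bindep.txt from the worked example, and ee_smoke_test is a made-up name:

```shell
#!/usr/bin/env bash
# Hypothetical preflight: fail fast if the image lacks a Python lib or a system binary.
ee_smoke_test() {
  local image="$1"
  # Python deps: the import fails with a non-zero exit if any library is missing
  podman run --rm "$image" python3 -c 'import requests, cryptography, yaml' &&
    # System packages: each command fails if its binary is absent
    podman run --rm "$image" sh -c 'git --version && openssl version && command -v sshpass'
}

# Usage (not run here):
#   ee_smoke_test git.linuxserver.pro/chris/ansible-ee-homelab:2026-05-06 && echo "EE looks sane"
```

It doesn’t prove a playbook will work, only that the image contains what the manifests asked for, which is the part that usually goes wrong first.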

References

- Ansible Builder documentation - the canonical guide for execution-environment.yml

- Ansible Navigator documentation - configuration reference and subcommand walkthroughs

- Ansible Runner documentation - the inner process supervisor that EEs ship

- Community Execution Environment images on Quay - community-ee-minimal and community-ee-base as starting points

- Red Hat: What’s in an Execution Environment - Red Hat’s own primer

- Ansible-native Quadlets: Mastogreet - the homelab Ansible role this EE is sized for

- Forgejo CI with a Custom OCI Image - the same pinned-image pattern for CI runners more broadly

- CentOS Stream as a base - reasoning for why centos:stream9 is a sensible EE base
