- Sat 21 March 2026

- Networking

- #freebsd, #bgp, #networking, #ipv6, #frr, #pf, #ixp, #locix, #ibgp

Part 1 covered running a single BGP router with two upstream providers. Part 2 added a second Point of Presence with Vultr native peering and iBGP. This is Part 3: connecting to an Internet Exchange Point.

An IXP changes the game. Instead of sending traffic through transit providers and their upstreams, networks at an exchange peer directly over a shared switching fabric. The result is shorter paths, lower latency, and fewer intermediaries.

This article documents connecting my AS201379 to LocIX Düsseldorf - a community-run IXP with over 350 participants - using a dedicated FreeBSD edge router, and the iBGP plumbing that ties it back into the existing infrastructure. It assumes familiarity with BGP, iBGP, and basic FreeBSD networking as covered in the previous parts.

Note on addresses: All provider-assigned and transit-provider IP addresses have been replaced with RFC 5737 / RFC 3849 documentation ranges. AS201379, its prefix 2a06:9801:1c::/48, and all IXP-facing addresses (which are publicly visible in peering databases) are shown as-is. LocIX route server addresses and the peering LAN prefix are equally public.

Why Join an IXP?

Transit providers create indirect paths: even if two networks are geographically close, their traffic may traverse multiple intermediary ASes. At an IXP, those intermediaries disappear - networks exchange traffic directly over a shared fabric. The practical impact is measurable:

One example is traffic between my AS201379 and meerfarbig.net:

Without IXP (via transit):

hobgp → iFog → DE-CIX fabric → meerfarbig.net

3+ hops, 20-30ms

With IXP (direct peering):

hobgp → lobgp → LocIX fabric → meerfarbig.net

2 hops, ~9ms

Beyond latency, IXP peering provides route diversity that transit alone cannot match. The route servers at the exchange redistribute routes from every participant that peers with them, so a single BGP session to a route server gives me direct paths to hundreds of networks. Through transit, most of those prefixes are reachable only over longer AS paths, and some may not be visible at all if a network announces them only to its peers at the exchange.

Architecture: The Three-Router Triangle

My third router (lobgp) connects to LocIX through a hosting provider with direct layer-2 access to the exchange fabric. It peers with LocIX’s route servers over the peering LAN, announces my /48, and sends everything it learns back to hobgp via iBGP over a GIF tunnel. The resulting topology looks like this:

```
                ┌──────────────────────────────────────────────────┐
                │                Default-Free Zone                 │
                └──┬─────────────┬──────────────┬──────────────────┘
                   │             │              │
                AS209533      AS209735       AS212895
                 (iFog)     (Lagrange)      (route64)
                   │             │              │
               GRE tunnel    GRE tunnel     GRE tunnel
                   │             │              │
              ┌────┴─────────────┴──────────────┴────────────┐
              │                 hobgp (Core)                 │
              │           FreeBSD + FRR, AS201379            │
              │              2a06:9801:1c::/48               │
              └──────────────┬──────────────┬────────────────┘
                             │              │
                        GIF tunnel     GIF tunnel
                          (iBGP)         (iBGP)
                             │              │
┌────────────────────────────┴──┐  ┌────────┴──────────────────────┐
│ vtbgp (Edge)                  │  │ lobgp (Edge)                  │
│ FreeBSD + FRR, AS201379       │  │ FreeBSD + FRR, AS201379       │
│ Native BGP → AS64515 (Vultr)  │  │ LocIX Peering LAN             │
└───────────────────────────────┘  │ → RS1/RS2 (AS202409)          │
                                   │ → Transit AS34872 (Servperso) │
                                   └───────────────┬───────────────┘
                                                   │
                                           LocIX Düsseldorf
                                    ┌──────────────┴──────────────┐
                                    │ 350+ participants           │
                                    │ Direct L2 peering fabric    │
                                    └─────────────────────────────┘
```

The design maintains the hub-and-spoke model from Part 2: hobgp remains the forwarding hub. Traffic arriving at lobgp from the IXP is forwarded through the GIF tunnel to hobgp, which handles routing to downstream servers. lobgp announces my prefix to the exchange and passes the routes it learns to hobgp over iBGP for path selection.

Getting IXP Access

LocIX is a community-run exchange, which makes it accessible to smaller networks. The connection itself requires a VM with a network interface on the LocIX peering LAN - a layer-2 VLAN that reaches the exchange fabric.

Several hosting providers offer VMs with LocIX connectivity. I use Servperso, which provides VMs in Düsseldorf with a dedicated interface connected to the LocIX peering VLAN. The VM gets two network interfaces: vtnet1 for regular internet connectivity (transit from Servperso’s own AS34872), and vtnet0 directly on the LocIX peering LAN.

After signing up with LocIX and receiving the peering LAN assignment, the setup is straightforward: configuring the peering LAN addresses, peering with the route servers, and announcing my prefix.

The IXP Edge Router: lobgp

Network Configuration

The /etc/rc.conf configures two physical interfaces and a GIF tunnel back to the core:

```
hostname="lobgp"
kern_securelevel_enable="YES"
kern_securelevel="2"
kld_list="if_gif"

# Internet connectivity (Servperso)
ifconfig_vtnet1="inet 198.51.100.50 netmask 255.255.255.224 -lro -tso"
defaultrouter="198.51.100.62"
ifconfig_vtnet1_ipv6="inet6 2001:db8:b640::50/112 -rxcsum6 -tso6"
ipv6_defaultrouter="2001:db8:b640::ffff"

# LocIX Peering LAN (direct L2)
ifconfig_vtnet0="inet 185.1.155.23/24 -lro -tso"
ifconfig_vtnet0_ipv6="inet6 2a0c:b641:701::a5:20:1379:1 prefixlen 64 -rxcsum6 -tso6"

# GIF tunnel to core (hobgp) - runs over IPv6
cloned_interfaces="gif0"
ifconfig_gif0="inet6 tunnel 2001:db8:b640::50 2001:db8:1c19::1 mtu 1460"
ifconfig_gif0_ipv6="inet6 2a06:9801:1c:fffd::2 2a06:9801:1c:fffd::1 prefixlen 128"
ifconfig_gif0_descr="Uplink-to-hobgp"

# Routing
ipv6_gateway_enable="YES"
ipv6_static_routes="myblock"
ipv6_route_myblock="2a06:9801:1c::/48 -interface gif0"

# Services
pf_enable="YES"
frr_enable="YES"
sshd_enable="YES"
```

Several things are different from vtbgp in Part 2:

Two physical interfaces instead of one. vtnet1 provides regular internet connectivity; vtnet0 connects directly to the LocIX peering LAN. This separation is important - peering LAN traffic should never traverse the internet-facing interface.

The GIF tunnel runs over IPv6, not IPv4. Both Servperso and Hetzner provide native IPv6, so the encapsulation uses inet6 tunnel to wrap IPv6-in-IPv6. The tunnel MTU is set to 1460 - the standard 1500-byte Ethernet MTU minus the 40-byte outer IPv6 header added by GIF. To avoid fragmentation, PF clamps TCP MSS to 1400 bytes on $hobgp_tun, ensuring segments fit cleanly inside the tunnel after the inner IPv6 header (40 bytes) and TCP header (20 bytes) are accounted for.
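The header arithmetic behind those two values can be checked with a few lines of Python. This is a minimal sketch that assumes plain 40-byte IPv6 headers (no extension headers) and a 20-byte TCP header (no options). The function names are my own and not part of any tool.

```python
# Header sizes in bytes. Assumption: no IPv6 extension headers, no TCP options.
ETHERNET_MTU = 1500
IPV6_HEADER = 40
TCP_HEADER = 20


def gif_tunnel_mtu(link_mtu: int = ETHERNET_MTU) -> int:
    """MTU left for the inner packet once GIF adds an outer IPv6 header."""
    return link_mtu - IPV6_HEADER


def tcp_mss(tunnel_mtu: int) -> int:
    """Largest TCP payload that fits in one inner IPv6 packet."""
    return tunnel_mtu - IPV6_HEADER - TCP_HEADER


if __name__ == "__main__":
    mtu = gif_tunnel_mtu()
    print("gif0 mtu:", mtu)          # 1460
    print("max-mss: ", tcp_mss(mtu))  # 1400
```

TCP options such as timestamps shrink the usable payload further. The advertised MSS value excludes options, though, so 1400 is still the right number to clamp to.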

The peering LAN addresses are publicly registered. 185.1.155.23 (IPv4) and 2a0c:b641:701::a5:20:1379:1 (IPv6) are assigned by LocIX and visible in the PeeringDB entry. These aren’t documentation-range replacements - they’re the real addresses, because hiding them would be counterproductive for anyone wanting to peer.
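The same peering LAN addresses appear in two spellings in this article. rc.conf and pf.conf write RS1 as 2a0c:b641:701::a5:20:2409:1, while frr.conf and the vtysh output write 2a0c:b641:701:0:a5:20:2409:1. Both are the same address. The second form is the canonical text representation from RFC 5952, which does not shorten a single zero group to ::. Here is a quick check with Python's standard ipaddress module, assuming the peering LAN is the /64 configured on vtnet0:

```python
import ipaddress

# Peering LAN as configured on vtnet0 (prefixlen 64); assumed to span the whole LAN.
PEERING_LAN = ipaddress.IPv6Network("2a0c:b641:701::/64")

# RS1 as written in rc.conf/pf.conf versus frr.conf and vtysh output.
rc_style = ipaddress.IPv6Address("2a0c:b641:701::a5:20:2409:1")
frr_style = ipaddress.IPv6Address("2a0c:b641:701:0:a5:20:2409:1")

print(rc_style == frr_style)    # True: identical 128-bit value
print(rc_style)                 # 2a0c:b641:701:0:a5:20:2409:1
print(rc_style in PEERING_LAN)  # True: RS1 sits on the peering LAN
```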

Like vtbgp, the static route ipv6_route_myblock points the entire /48 at gif0, so any traffic arriving for my prefix gets forwarded through the tunnel to my core router hobgp.

FRR Configuration

The FRR config has four BGP sessions: two to LocIX route servers, one to Servperso for transit, and one iBGP back to hobgp:

```
frr version 10.5.1
frr defaults traditional
hostname lobgp
log syslog informational
service integrated-vtysh-config
!
ipv6 prefix-list PL-MY-NET seq 5 permit 2a06:9801:1c::/48
!
! [PL-BOGONS: denies reserved/invalid prefixes, permits ::/0 le 48 - see Part 1]
!
! Route server routes: bogon-filtered, preferred with LP 200
route-map RM-IXP-IN permit 10
 match ipv6 address prefix-list PL-BOGONS
 set local-preference 200
exit
!
! Announce only our own /48 to the exchange
route-map RM-IXP-OUT permit 10
 match ipv6 address prefix-list PL-MY-NET
exit
!
! Servperso transit: bogon-filtered, fallback with LP 100
route-map RM-SERVPERSO-IN permit 10
 match ipv6 address prefix-list PL-BOGONS
 set local-preference 100
exit
!
route-map RM-SERVPERSO-OUT permit 10
 match ipv6 address prefix-list PL-MY-NET
exit
!
! No match clause: every route lobgp advertises over iBGP is permitted
route-map RM-IBGP-OUT permit 10
exit
!
router bgp 201379
 bgp router-id 185.1.155.23
 no bgp enforce-first-as
 no bgp default ipv4-unicast
 neighbor 2a06:9801:1c:fffd::1 remote-as 201379
 neighbor 2a06:9801:1c:fffd::1 description Core-hobgp
 neighbor 2a06:9801:1c:fffd::1 update-source 2a06:9801:1c:fffd::2
 neighbor 2a0c:b641:701:0:a5:20:2409:1 remote-as 202409
 neighbor 2a0c:b641:701:0:a5:20:2409:1 description LocIX-RS1-v6
 neighbor 2a0c:b641:701:0:a5:20:2409:2 remote-as 202409
 neighbor 2a0c:b641:701:0:a5:20:2409:2 description LocIX-RS2-v6
 neighbor 2001:db8:b640::ffff remote-as 34872
 neighbor 2001:db8:b640::ffff description Transit-Servperso-v6
 !
 address-family ipv6 unicast
  neighbor 2a06:9801:1c:fffd::1 activate
  neighbor 2a06:9801:1c:fffd::1 next-hop-self
  neighbor 2a06:9801:1c:fffd::1 route-map RM-IBGP-OUT out
  neighbor 2a0c:b641:701:0:a5:20:2409:1 activate
  neighbor 2a0c:b641:701:0:a5:20:2409:1 soft-reconfiguration inbound
  neighbor 2a0c:b641:701:0:a5:20:2409:1 route-map RM-IXP-IN in
  neighbor 2a0c:b641:701:0:a5:20:2409:1 route-map RM-IXP-OUT out
  neighbor 2a0c:b641:701:0:a5:20:2409:2 activate
  neighbor 2a0c:b641:701:0:a5:20:2409:2 soft-reconfiguration inbound
  neighbor 2a0c:b641:701:0:a5:20:2409:2 route-map RM-IXP-IN in
  neighbor 2a0c:b641:701:0:a5:20:2409:2 route-map RM-IXP-OUT out
  neighbor 2001:db8:b640::ffff activate
  neighbor 2001:db8:b640::ffff soft-reconfiguration inbound
  neighbor 2001:db8:b640::ffff route-map RM-SERVPERSO-IN in
  neighbor 2001:db8:b640::ffff route-map RM-SERVPERSO-OUT out
 exit-address-family
exit
```

Route Servers: One Session, Hundreds of Routes

IXP route servers are the key simplification. Instead of establishing individual BGP sessions with every network at the exchange (which could mean hundreds of sessions), you peer with the exchange’s route servers. LocIX runs two route servers (AS202409) for redundancy. Each route server collects routes from every participant that peers with it and, after its own filtering, re-announces them to the other participants. You can think of the route server as a fan-out mechanism: one BGP session, routes from many networks at once.

The two route server sessions deliver approximately 1,800 prefixes each. Both servers carry the same routes for redundancy, announced by the LocIX participants that use the route servers. Most of these destinations are also reachable through transit, but over a longer AS-path. At the exchange they’re direct: one hop across the peering fabric.
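Here is a small Python sketch that puts numbers on the fan-out by comparing session counts. Purely for illustration, it assumes that every one of the ~350 participants would want to peer with every other one, and it counts one session per pair for a single address family.

```python
def bilateral_sessions(participants: int) -> int:
    """Exchange-wide sessions if every pair of participants peers directly."""
    return participants * (participants - 1) // 2


def route_server_sessions(participants: int, route_servers: int = 2) -> int:
    """Exchange-wide sessions if everyone peers only with the route servers."""
    return participants * route_servers


if __name__ == "__main__":
    n = 350  # approximate LocIX participant count
    print("per network, bilateral:    ", n - 1)                     # 349
    print("per network, route servers:", 2)                         # 2
    print("exchange, bilateral:       ", bilateral_sessions(n))     # 61075
    print("exchange, route servers:   ", route_server_sessions(n))  # 700
```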

no bgp enforce-first-as: Route Server Compatibility

Route servers are transparent BGP speakers. They don’t insert their own AS into the AS-path of re-announced routes. When RS1 (AS202409) sends you a route originally announced by, say, AS13335 (Cloudflare), the AS-path still starts with 13335 - not 202409 13335.

RFC 4271 allows a BGP speaker to check that the leftmost AS in an eBGP route’s AS-path matches the neighbor’s AS, and FRR implements this check as enforce-first-as. Because the route server’s AS (202409) isn’t in the path, an active check would make FRR reject every route the server sends. no bgp enforce-first-as disables this check, which is required for route server peering.

This is reasonable in this context: route servers at established IXPs typically perform their own RPKI and IRR validation, and lobgp still applies its own PL-BOGONS filter on top. In practice, you’re trusting their filtering - just as you trust a transit provider’s ingress filtering - only applied at a different point in the path.
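A toy Python model of the first-AS check makes the failure mode concrete. It is not FRR's implementation, and the transit path in it is hypothetical. It only illustrates why a transparent route server's routes fail the check while a transit provider's routes pass.

```python
from typing import Sequence


def passes_first_as_check(as_path: Sequence[int], neighbor_as: int,
                          enforce_first_as: bool = True) -> bool:
    """Toy model of the first-AS check on a route received over eBGP.

    With the check on, the leftmost AS in AS_PATH must equal the neighbor's
    configured remote-as. With it off, the path is not checked here (other
    policy such as prefix-lists still applies afterwards).
    """
    if not enforce_first_as:
        return True
    return len(as_path) > 0 and as_path[0] == neighbor_as


ROUTE_SERVER_AS = 202409
# A transparent route server passes Cloudflare's path on unchanged.
rs_route_path = [13335]
# Hypothetical transit path: the transit provider prepends its own AS.
transit_route_path = [34872, 13335]

print(passes_first_as_check(rs_route_path, ROUTE_SERVER_AS))         # False
print(passes_first_as_check(rs_route_path, ROUTE_SERVER_AS, False))  # True
print(passes_first_as_check(transit_route_path, 34872))              # True
```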

Local Preference: IXP Routes Win

The route-map RM-IXP-IN sets local-preference 200 on routes learned from the route servers. On hobgp, the iBGP-learned routes from lobgp carry this LP value. The preference hierarchy across the entire network becomes:

| Source | Local Preference | Path |
|---|---|---|
| LocIX route servers (via lobgp) | 200 | IXP direct peering |
| Vultr (via vtbgp) | 200 | Vultr native net |
| iFog BGPTunnel | 150 | GRE transit |
| Lagrange / route64 | 100 | GRE transit (backup) |
| Servperso (via lobgp) | 100 | Native transit at lobgp (backup) |

LocIX and Vultr share LP 200 intentionally. When a destination is reachable via both, local preference ties and BGP’s remaining tiebreakers decide. The most important one here is AS-path length. A route learned from a LocIX participant via the route servers carries that participant’s AS-path unchanged. The same destination via Vultr includes at least Vultr’s own AS, and often further upstream hops. Rather than artificially preferring one over the other, the tie lets BGP pick the shorter AS-path, which usually (though not always) means the more direct route.
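The tie-break can be modelled in a few lines of Python. This is a deliberately simplified sketch that compares only local preference and then AS-path length. FRR's real best-path selection has more steps (weight, origin, MED, eBGP over iBGP, IGP metric, router-id and others). All AS paths here are hypothetical and use the documentation ASN 64500 as the destination.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Path:
    via: str                  # session the path was learned from (on hobgp)
    local_pref: int           # LOCAL_PREF, carried unchanged over iBGP
    as_path: tuple[int, ...]  # AS_PATH, leftmost AS first


def best_path(paths: list[Path]) -> Path:
    """Highest LOCAL_PREF wins; among equals, the shortest AS_PATH wins.

    Simplified: remaining ties fall back to list order, whereas real BGP
    continues with origin, MED, eBGP over iBGP, IGP cost, router-id, ...
    """
    return min(paths, key=lambda p: (-p.local_pref, len(p.as_path)))


# Hypothetical paths to one destination AS (64500), as hobgp might see them.
candidates = [
    Path("vtbgp (Vultr)", 200, (64515, 64501, 64500)),
    Path("lobgp (LocIX RS)", 200, (64500,)),
    Path("iFog transit", 150, (209533, 64500)),
]

print(best_path(candidates).via)  # lobgp (LocIX RS)
```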

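The Servperso transit (RM-SERVPERSO-IN, LP 100) serves as a fallback. Its routes reach hobgp over iBGP with LP 100, the same as the Lagrange and route64 backups. If the tunnel to hobgp fails, lobgp itself still has full internet connectivity through Servperso. lobgp’s FRR config has no network statement of its own, so the /48 it announces to LocIX and Servperso is the copy it learns from hobgp over iBGP. If that session drops, the announcement is withdrawn instead of attracting traffic that lobgp could no longer hand to hobgp.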

next-hop-self on the iBGP Session

Same principle as vtbgp in Part 2. Route servers don’t rewrite the next hop, so routes learned from the LocIX route servers point at the announcing participant’s address on the peering LAN (inside 2a0c:b641:701::/64). hobgp can’t reach those addresses - they’re on a VLAN in Düsseldorf that hobgp has no path to. next-hop-self rewrites the next hop of these eBGP-learned routes to 2a06:9801:1c:fffd::2 (lobgp’s tunnel address). That makes the routes actionable for hobgp: “send traffic down the GIF tunnel to lobgp, which will forward it across the peering LAN.”
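The effect of next-hop-self on the iBGP export can be sketched as a small Python model. It is an illustration, not FRR code. It assumes, in line with FRR's documented behaviour for next-hop-self without the force keyword, that only eBGP-learned routes get the new next hop. The participant prefix and next-hop address are hypothetical.

```python
import ipaddress
from dataclasses import dataclass, replace

PEERING_LAN = ipaddress.IPv6Network("2a0c:b641:701::/64")
LOBGP_TUNNEL = ipaddress.IPv6Address("2a06:9801:1c:fffd::2")


@dataclass(frozen=True)
class Route:
    prefix: str
    next_hop: ipaddress.IPv6Address
    learned_via_ebgp: bool


def export_to_ibgp(route: Route, next_hop_self: bool) -> Route:
    """Next hop lobgp would advertise to hobgp for this route (model only)."""
    if next_hop_self and route.learned_via_ebgp:
        return replace(route, next_hop=LOBGP_TUNNEL)
    return route


# Hypothetical route from a LocIX participant, learned via the route servers.
learned = Route("2001:db8:abcd::/48",
                ipaddress.IPv6Address("2a0c:b641:701::beef:1"), True)

without = export_to_ibgp(learned, next_hop_self=False)
with_nhs = export_to_ibgp(learned, next_hop_self=True)
print(without.next_hop in PEERING_LAN)  # True: hobgp has no path to it
print(with_nhs.next_hop)                # 2a06:9801:1c:fffd::2
```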

PF: Protecting the IXP Edge

The peering LAN is a shared medium. With dozens of networks on the same layer-2 segment, your edge router’s peering interface is exposed to ARP/NDP traffic from every participant. The firewall must protect both the router itself and the peering infrastructure:

```
ext_if = "vtnet1"
ixp_if = "vtnet0"
hobgp_tun = "gif0"
my_network_v6 = "2a06:9801:1c::/48"
my_router_ip = "2a06:9801:1c:fffd::2"
gw_hobgp_v6 = "2001:db8:1c19::1"
rs1_v6 = "2a0c:b641:701::a5:20:2409:1"
rs2_v6 = "2a0c:b641:701::a5:20:2409:2"
peer_servperso = "2001:db8:b640::ffff"

table <bgp_peers> const { $rs1_v6, $rs2_v6, $peer_servperso }

set skip on lo0
set block-policy drop

scrub in all fragment reassemble
scrub on $hobgp_tun max-mss 1400

block log all
block in quick on $ext_if from { <bogons>, $my_network_v6 } to any
antispoof quick for { $ext_if }

# --- Control Plane ---

# ICMPv6 on external and IXP interfaces: NDP, echo request, Packet Too Big (type 2)
pass in quick on { $ext_if, $ixp_if } inet6 proto ipv6-icmp \
    icmp6-type { neighbrsol, neighbradv, echoreq, 2 } keep state
pass out quick on { $ext_if, $ixp_if } inet6 proto ipv6-icmp keep state

# Outer tunnel: IPv6-in-IPv6 encapsulation, stateless
pass in quick on $ext_if proto ipv6 from $gw_hobgp_v6 to ($ext_if) no state
pass out quick on $ext_if proto ipv6 from ($ext_if) to $gw_hobgp_v6 no state

# iBGP session over tunnel
pass in quick on $hobgp_tun proto tcp from 2a06:9801:1c:fffd::1 to any port 179 keep state
pass out quick on $hobgp_tun proto tcp from any to 2a06:9801:1c:fffd::1 port 179 keep state

# eBGP sessions: route servers on the peering LAN, Servperso transit on vtnet1
pass in quick on { $ixp_if, $ext_if } proto tcp from <bgp_peers> to self port 179 keep state
pass out quick on { $ixp_if, $ext_if } proto tcp from self to <bgp_peers> port 179 keep state

# IPv4 ICMP on the peering LAN for diagnostics (ARP is not filtered by these rules)
pass in quick on $ixp_if inet proto icmp keep state
pass out quick on $ixp_if inet proto icmp keep state

# --- Data Plane ---

# Router's own outbound
pass out quick inet6 from $my_router_ip to any keep state

# Transit: stateless for asymmetric routing
pass in quick inet6 from any to $my_network_v6 no state
pass out quick inet6 from any to $my_network_v6 no state
pass in quick inet6 from $my_network_v6 to any no state
pass out quick inet6 from $my_network_v6 to any no state

# Default outbound fallback
pass out quick all keep state
```

Compared to vtbgp, the IXP edge firewall adds a few important constraints:

BGP is locked to known peers. Only the addresses in the <bgp_peers> table - the two LocIX route servers and the Servperso transit peer - are allowed to establish BGP sessions, so other participants on the peering LAN can’t initiate unsolicited BGP sessions to our router.

ICMPv6 is permitted on the peering LAN. NDP (Neighbor Discovery Protocol) must work for the router to resolve MAC addresses of peers on the shared segment. Without this, the peering LAN interface is dead.

IPv4 ICMP is explicitly allowed on the peering LAN. Even though this is primarily an IPv6 setup, the peering LAN also carries IPv4 for dual-stack participants. ARP doesn’t need a rule, because these layer-3 pf rules don’t filter it. Allowing ICMP keeps IPv4 diagnostics such as ping working.
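The intent of the <bgp_peers> rules can be sanity-checked offline. This Python sketch models only which source addresses may reach TCP port 179. It ignores interfaces and rule ordering, so it checks the table contents rather than pf itself. The non-peer address is hypothetical.

```python
import ipaddress

# Contents of the <bgp_peers> table in pf.conf.
BGP_PEERS = {
    ipaddress.IPv6Address("2a0c:b641:701::a5:20:2409:1"),  # LocIX RS1
    ipaddress.IPv6Address("2a0c:b641:701::a5:20:2409:2"),  # LocIX RS2
    ipaddress.IPv6Address("2001:db8:b640::ffff"),          # Servperso transit
}


def bgp_allowed(src: str, dst_port: int) -> bool:
    """Would an inbound TCP connection from src match the BGP pass rules?"""
    return dst_port == 179 and ipaddress.IPv6Address(src) in BGP_PEERS


print(bgp_allowed("2a0c:b641:701:0:a5:20:2409:1", 179))  # True: RS1, FRR spelling
print(bgp_allowed("2a0c:b641:701::beef:1", 179))         # False: hypothetical participant
```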

Core Router Changes: Adding the Second iBGP Peer

On hobgp, adding lobgp means a new GIF tunnel and a new iBGP session. The additions to rc.conf:

```
# GIF tunnel to lobgp (LocIX via Servperso) - IPv6-in-IPv6
# (gif5 must also be created at boot, e.g. by adding it to hobgp's existing cloned_interfaces list)
ifconfig_gif5="inet6 tunnel 2001:db8:1c19::1 2001:db8:b640::50 mtu 1460"
ifconfig_gif5_ipv6="inet6 2a06:9801:1c:fffd::1 2a06:9801:1c:fffd::2 prefixlen 128"
ifconfig_gif5_descr="Tunnel-to-lobgp"
```

And the FRR additions:

```
! Added inside the existing router bgp 201379 block on hobgp
 neighbor 2a06:9801:1c:fffd::2 remote-as 201379
 neighbor 2a06:9801:1c:fffd::2 description Edge-Servperso-IXP
 neighbor 2a06:9801:1c:fffd::2 update-source 2a06:9801:1c:fffd::1
 !
 address-family ipv6 unicast
  neighbor 2a06:9801:1c:fffd::2 activate
  neighbor 2a06:9801:1c:fffd::2 soft-reconfiguration inbound
  neighbor 2a06:9801:1c:fffd::2 route-map RM-IBGP-IN in
  neighbor 2a06:9801:1c:fffd::2 route-map RM-IBGP-OUT out
```

The RM-IBGP-IN and RM-IBGP-OUT route-maps are shared with the vtbgp iBGP session - no new policy needed. The core router’s firewall also needs the tunnel endpoint added to its permitted peers, and the gif5 tunnel added to MSS clamping rules.

With this, hobgp now has five BGP sessions: three eBGP upstreams (iFog, Lagrange, route64) and two iBGP peers (vtbgp for Vultr, lobgp for LocIX).

Verification

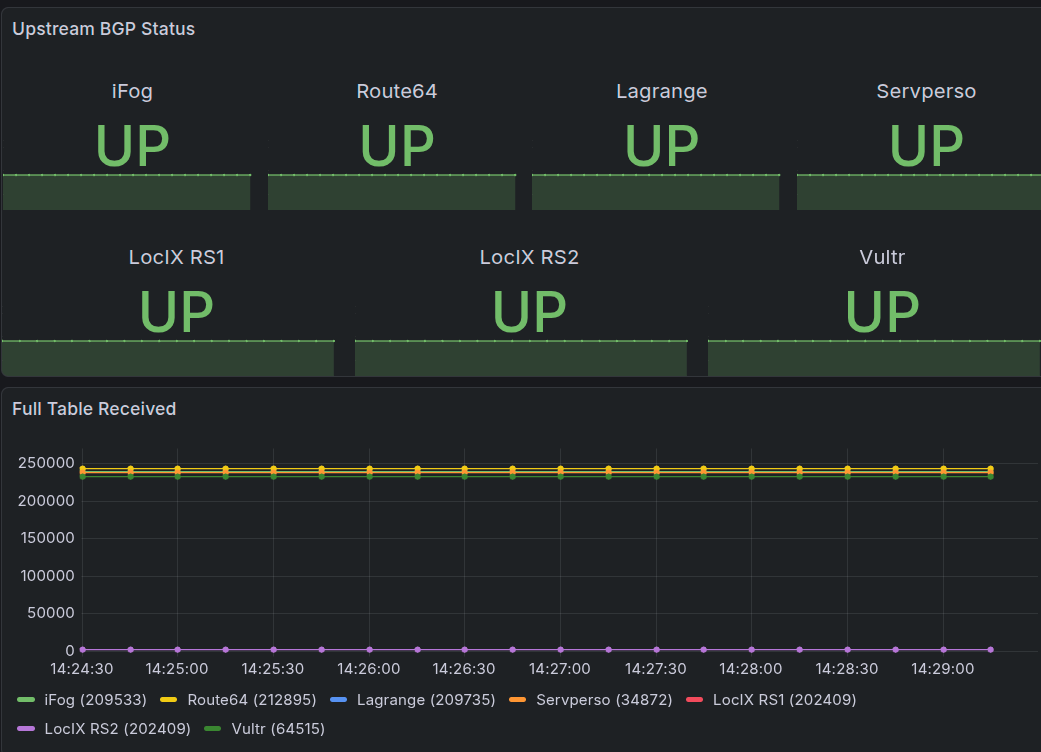

BGP Sessions

First, confirm that all BGP sessions are established. On lobgp, vtysh -c 'show bgp ipv6 summary' shows:

```
Neighbor V AS MsgRcvd MsgSent Up/Down PfxRcd Desc
2a06:9801:1c:fffd::1 4 201379 4034 1792956 2d19h10m 1 Core-hobgp
2001:db8:b640::ffff 4 34872 2512958 4034 2d19h10m 238115 Transit-Servperso
2a0c:b641:701:0:a5:20:2409:1 4 202409 123879 4035 2d19h10m 1859 LocIX-RS1-v6
2a0c:b641:701:0:a5:20:2409:2 4 202409 123882 4035 2d19h10m 1859 LocIX-RS2-v6
```

The route servers each contribute ~1,859 prefixes - the routes announced by other LocIX participants. Servperso provides a full DFZ view (~238K prefixes) as transit backup. The iBGP session from hobgp delivers exactly one prefix to lobgp: our /48, which lobgp re-announces to LocIX and Servperso.
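A small parser is enough to script this check. This sketch assumes the trimmed column layout shown above (Neighbor, V, AS, MsgRcvd, MsgSent, Up/Down, PfxRcd, Desc). Real vtysh output has more columns and differs between FRR versions, so the field indexes are an assumption. In FRR's summary, a session that isn't established shows its state (such as Active or Idle) where the prefix count would be, and not_established() looks for exactly that.

```python
from typing import Dict, List, Union

SAMPLE = """\
Neighbor V AS MsgRcvd MsgSent Up/Down PfxRcd Desc
2a06:9801:1c:fffd::1 4 201379 4034 1792956 2d19h10m 1 Core-hobgp
2001:db8:b640::ffff 4 34872 2512958 4034 2d19h10m 238115 Transit-Servperso
2a0c:b641:701:0:a5:20:2409:1 4 202409 123879 4035 2d19h10m 1859 LocIX-RS1-v6
2a0c:b641:701:0:a5:20:2409:2 4 202409 123882 4035 2d19h10m 1859 LocIX-RS2-v6
"""

Summary = Dict[str, Union[int, str]]


def parse_summary(text: str) -> Summary:
    """Map each neighbor description to its prefix count (or state name)."""
    result: Summary = {}
    for line in text.splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) < 8:
            continue
        pfx, desc = fields[6], fields[7]
        result[desc] = int(pfx) if pfx.isdigit() else pfx
    return result


def not_established(summary: Summary) -> List[str]:
    """Neighbors whose PfxRcd column holds a state instead of a count."""
    return [desc for desc, value in summary.items() if not isinstance(value, int)]


summary = parse_summary(SAMPLE)
print(summary["LocIX-RS1-v6"])   # 1859
print(not_established(summary))  # []
```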

On hobgp, the five-peer summary shows the combined view:

```
Neighbor V AS MsgRcvd MsgSent Up/Down PfxRcd Desc
2001:db8:300::1 4 209533 5096936 33805 10:02:36 239576 Upstream-iFog-FRA
fd00:ca::1 4 209735 4777022 5636 3d21h52m 237342 Upstream-Lagrange-UK
2001:db8:400::1 4 212895 1907099 5636 3d21h52m 243233 Upstream-Route64-FRA
2a06:9801:1c:fffd::2 4 201379 2762876 5622 2d19h09m 238120 Edge-Servperso-IXP
2a06:9801:1c:fffe::2 4 201379 2396813 5640 3d21h48m 233048 Edge-Vultr-Frankfurt
```

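The vtbgp peer (2a06:9801:1c:fffe::2) is the Vultr edge router from Part 2. The new lobgp iBGP session (2a06:9801:1c:fffd::2) delivers ~238K prefixes to hobgp. lobgp advertises one best path per prefix: the LocIX route where one exists, the Servperso route otherwise. That count is only slightly above the 238,115 prefixes lobgp receives from Servperso, which suggests that almost all LocIX destinations are also in the transit table. The IXP routes carry LP 200, so on hobgp they beat the transit paths for destinations reachable via the exchange.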
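Why does the iBGP count look like one merged table rather than a sum? Without the add-path extension, a BGP speaker advertises only its single best path per prefix to a neighbor. Here is a Python sketch with hypothetical prefixes that uses local preference as the only decision step:

```python
# (prefix, source, local_pref) as learned on lobgp; prefixes are hypothetical.
learned = [
    ("2001:db8:1::/48", "LocIX-RS1", 200),
    ("2001:db8:1::/48", "LocIX-RS2", 200),
    ("2001:db8:1::/48", "Servperso", 100),
    ("2001:db8:2::/48", "Servperso", 100),
]


def best_per_prefix(routes: list[tuple[str, str, int]]) -> dict[str, str]:
    """Keep one path per prefix: the highest local preference wins.

    Ties keep the first path seen; real BGP would continue with further
    tiebreakers (AS-path length, origin, MED, router-id, ...).
    """
    best: dict[str, tuple[str, int]] = {}
    for prefix, source, lp in routes:
        if prefix not in best or lp > best[prefix][1]:
            best[prefix] = (source, lp)
    return {prefix: source for prefix, (source, _) in best.items()}


exported = best_per_prefix(learned)
print(len(learned), "paths learned,", len(exported), "prefixes exported to hobgp")
print(exported)  # {'2001:db8:1::/48': 'LocIX-RS1', '2001:db8:2::/48': 'Servperso'}
```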

The IXP Difference: Real Traceroutes

The real test is measuring what the exchange connection actually changes. Here’s a traceroute to meerfarbig, a German ISP that peers with my AS201379 at LocIX:

```
HOST: hobgp Loss% Snt Last Avg Best Wrst StDev
1.|-- lobgp.edge.hofstede.it 0.0% 10 8.3 8.8 8.2 11.4 1.0
2.|-- ae0.800.mx240.dus1.meerfarbig.net 0.0% 10 9.5 14.4 9.4 29.2 6.2
3.|-- 2a00:f820:11::45 0.0% 10 9.4 9.6 9.4 10.0 0.2
```

Three hops total. hobgp sends to lobgp through the GIF tunnel (hop 1). lobgp forwards across the LocIX peering fabric to meerfarbig’s edge router ae0.800.mx240.dus1 in Düsseldorf (hop 2). This is where the ICMPv6 Time Exceeded reply comes from, confirming the packet entered meerfarbig’s network directly from the exchange. Hop 2’s higher average and worst-case values are typical for routers that deprioritize ICMP they generate themselves; the end-to-end figure is hop 3. Hop 3 (2a00:f820:11::45) is the final destination, one hop deeper inside meerfarbig’s infrastructure. Total latency: under 10ms. Without the IXP, this traffic would traverse iFog or route64, adding hops through transit infrastructure and roughly doubling the latency.

This is direct peering in practice. No transit AS in the path. The packet crosses one switching fabric and enters the peer’s network at their edge router - from there, it’s their internal routing to the destination.

Peering Policy

With IXP access comes a peering policy. My AS201379 operates an open peering policy - I’m happy to peer with anyone at LocIX or any other exchange point, with no traffic requirements or filtering restrictions beyond basic hygiene (valid RPKI, prefix limits).

The full peering policy, contact details, and current peering information are published at hofstede.it/as201379.html.

What’s Next: IPv4

The entire setup so far is IPv6-only. Every tunnel, every BGP session, every announced prefix is IPv6. This was deliberate: IPv6 address space is cheap and easy to obtain, making it realistic for a hobby AS.

The next step is adding IPv4. A sponsoring LIR will provide me with a small IPv4 prefix, which opens the setup up to the large share of internet traffic that still runs over IPv4. Adding IPv4 means dual-stack on the existing routers, IPv4 tunnel overlays in parallel with the IPv6 ones, and PF rules for both address families. The three-router topology stays the same; it just gains a second address family.

Lessons Learned

Route servers are the IXP’s killer feature for small networks. Without them, joining an IXP would mean manually negotiating BGP sessions with individual participants - operationally intractable for a hobby network. Two BGP sessions give you the routes of every participant that uses the route servers, and the exchange handles policy enforcement and validation.

no bgp enforce-first-as is required for route server peering. This is a common gotcha. Route servers are transparent - they don’t insert their own AS into the path. Without disabling the first-AS check, FRR rejects every route the server sends.

The peering LAN is a hostile environment. Dozens of networks on the same L2 segment means your router is directly exposed. Lock BGP to known peers, allow only essential ICMPv6/NDP, and drop everything else. The peering LAN is not a trusted network.

IXP routes and transit routes complement each other. The ~1,800 prefixes from LocIX’s route servers are a subset of the ~240K full table. For those 1,800 destinations, the IXP path is dramatically shorter and faster. For everything else, transit providers fill in. Setting LP 200 on IXP routes ensures they beat the transit routes (LP 100-150) whenever they are available.

IPv6-in-IPv6 tunnels work well for iBGP links. When both endpoints have native IPv6, there’s no reason to wrap IPv6 traffic in IPv4. The GIF tunnel between lobgp and hobgp uses inet6 tunnel and avoids the IPv4 dependency entirely.

Conclusion

Adding an IXP connection is the final piece of a small but functional internet infrastructure. Transit provides global reachability, native Vultr peering provides reach into Vultr-connected networks, and the IXP provides direct paths to the other participants at the exchange. Three routers, three distinct connectivity roles - each solving a problem the others can’t.

The three-router topology is surprisingly manageable. Each router has a clear role: hobgp is the forwarding hub with upstream transit, vtbgp announces into Vultr’s network, and lobgp peers at the exchange. iBGP ties them together, and local-preference ensures traffic takes the best available path automatically.

The practical impact is measurable in every traceroute. Destinations reachable via LocIX are one hop away instead of three or four. That’s not a theoretical improvement - it’s 10ms instead of 25ms, visible in every connection.

For anyone running a hobby AS and wondering whether IXP connectivity is worth the extra router: it is. The route diversity alone justifies the cost, and watching your traffic take a direct two-hop path to a network that used to be five hops away through transit is exactly the kind of thing that makes this hobby worth pursuing.

References

- LocIX - Community Internet Exchange

- PeeringDB - LocIX Düsseldorf

- Servperso - Düsseldorf Hosting with IXP Access

- AS201379 Peering Policy

- FRR Documentation: Route Servers and enforce-first-as

- bgp.tools - BGP Looking Glass

- Part 1: Running Your Own AS

- Part 2: Going Multi-Homed

Three articles, three evolutionary steps, and the same fundamental toolset: FreeBSD, FRR, PF, and tunnels. The internet doesn’t care that AS201379 runs on three small virtual machines. It sees valid routes, clean filters, and responsive peering. From a single router with one upstream to a three-PoP network with direct exchange peering - the architecture scales because the protocols do.
