- Thu 26 February 2026

- 26 min read

- Networking

- #freebsd, #bgp, #networking, #ipv6, #frr, #pf, #ibgp, #multihoming

The previous article covered the basics: obtain an AS number and IPv6 prefix, build a single FreeBSD BGP router with two upstream providers, and tunnel the prefix to downstream servers. That setup works. But it has a property that feels wrong once you’ve operated it for a while: all your traffic converges on one point. One machine, one location, two upstreams. Everything in, everything out, through the same box.

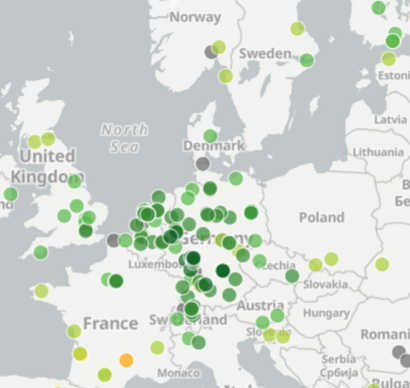

Multi-homing addresses this at multiple levels. More upstream providers give inbound path diversity. A second Point of Presence at a different network means traffic from different parts of the internet can enter through different doors, and you can engineer which traffic uses which path. This article documents the evolution from that single-router setup to a distributed network: two FreeBSD BGP routers connected by iBGP, three GRE-based transit providers on the core router, and a native BGP session with Vultr on the edge router.

The routing concepts involved - iBGP, local-preference, next-hop-self, stateless transit filtering - are the same ones any real network operator deals with. The hardware is two virtual machines.

Note on addresses: All provider-assigned IP addresses, tunnel endpoints, and management IPs have been replaced with RFC 5737 / RFC 3849 documentation ranges. AS201379 and the prefix 2a06:9801:1c::/48 are real public BGP resources and shown as-is. The upstream AS numbers AS209533, AS209735 and AS212895 are equally visible in public routing tables. AS64515, which Vultr uses for its customer BGP sessions, is a private-use ASN (RFC 6996) and is not expected to appear in public AS paths.

Why Add More?

The original two-upstream setup had one failure domain and one point of path selection. Three practical problems motivated the expansion:

Route diversity. Different parts of the internet have different peering arrangements. A network that transits heavily through Vultr’s infrastructure prefers paths that show up in Vultr’s BGP. A network at DE-CIX Frankfurt prefers the shorter path through a Frankfurt exchange point. With one upstream set, you can only be optimally reachable for the networks whose traffic happens to prefer your specific providers.

Single point of failure. One router means one failure domain. A reboot or misconfiguration takes down all reachability simultaneously.

Traffic engineering granularity. AS-path prepending lets you make one path look less preferred, but only when the receiving network actually has a choice of paths. Multiple announcement points give that choice.

Architecture

The setup has two tiers:

- a core router (hobgp) at a Hetzner facility in Nuremberg that handles three GRE upstreams;
- an edge router (vtbgp) on Vultr in Frankfurt that announces our prefix natively into Vultr’s network.

The two routers are connected by a GIF tunnel running iBGP.

```
┌──────────────────────────────────────────────┐
│              Default-Free Zone               │
└───────┬───────────────┬───────────────┬──────┘
        │               │               │
    AS209533        AS209735        AS212895
     (iFog)        (Lagrange)       (route64)
        │               │               │
   GRE tunnel      GRE tunnel      GRE tunnel
        │               │               │
┌───────┴───────────────┴───────────────┴──────┐
│                 hobgp (Core)                 │
│           FreeBSD + FRR, AS201379            │
│              2a06:9801:1c::/48               │
└───────────────────────┬──────────────────────┘
                        │
                GIF tunnel (iBGP)
                        │
┌───────────────────────┴──────────────────────┐
│                 vtbgp (Edge)                 │
│           FreeBSD + FRR, AS201379            │
│         Native BGP → AS64515 (Vultr)         │
└───────────────────────┬──────────────────────┘
                        │
                     AS64515
                     (Vultr)
                        │
             ┌──────────┴──────────┐
             │  Default-Free Zone  │
             └─────────────────────┘
```

hobgp also tunnels downstream (as in the previous article):

```
┌────────────┐
│   radon    │
│ :1000::/64 │
└────────────┘
```

The key routing properties of this design are these.

hobgp is the actual forwarding hub. All traffic to and from downstream servers flows through it.

vtbgp is an announcement-only node. Traffic that arrives there is immediately sent through the iBGP tunnel to hobgp for forwarding.

This asymmetry is intentional and efficient. Vultr’s infrastructure sees our prefix announced locally, which shortens the path for Vultr-connected networks. vtbgp does not have to install the full routing table in its kernel or forward arbitrary internet traffic.

The Core Router: hobgp

Network Configuration

The rc.conf now manages three GRE upstreams and a GIF tunnel to the edge router, in addition to the downstream server tunnels from the original setup:

```
hostname="hobgp"
kern_securelevel_enable="YES"
kern_securelevel="2"
kld_list="if_gif if_gre"

# Physical interface (Hetzner)
ifconfig_vtnet0="inet 198.51.100.10/32 -lro -tso"
ifconfig_vtnet0_ipv6="inet6 2001:db8:1c19::1/64"
defaultrouter="198.51.100.1"
ipv6_defaultrouter="fe80::1%vtnet0"

# Loopback: router's address within our /48
ifconfig_lo0_alias0="inet6 2a06:9801:1c::1 prefixlen 64"

# Tunnel interfaces
cloned_interfaces="gif0 gif1 gre0 gre1 gre2"

# GRE to iFog (BGPTunnel.com free transit)
ifconfig_gre0="tunnel 198.51.100.10 198.51.100.44"
ifconfig_gre0_ipv6="inet6 2001:db8:300::2 prefixlen 126"
ifconfig_gre0_descr="Transit-iFog-Frankfurt BGPTunnel"

# GRE to Lagrange (UK transit, backup path)
ifconfig_gre1="tunnel 198.51.100.10 198.51.100.45"
ifconfig_gre1_ipv6="inet6 fd00:ca::2 prefixlen 64"
ifconfig_gre1_descr="Transit-Lagrange-UK"

# GRE to route64.org (Frankfurt transit)
ifconfig_gre2="tunnel 198.51.100.10 198.51.100.46"
ifconfig_gre2_ipv6="inet6 2001:db8:400::2 prefixlen 64"
ifconfig_gre2_descr="Transit-route64-Frankfurt"

# GIF to downstream VPS (radon)
ifconfig_gif0="tunnel 198.51.100.10 203.0.113.10 mtu 1480"
ifconfig_gif0_ipv6="inet6 2a06:9801:1c:ffff::1 2a06:9801:1c:ffff::2 prefixlen 128"
ifconfig_gif0_descr="Tunnel-to-Radon-Server"

# GIF to Vultr edge router (vtbgp) - iBGP link
ifconfig_gif1="tunnel 198.51.100.10 203.0.113.20 mtu 1480"
ifconfig_gif1_ipv6="inet6 2a06:9801:1c:fffe::1 2a06:9801:1c:fffe::2 prefixlen 128"
ifconfig_gif1_descr="Tunnel-to-Vultr-Edge"

# Routing
ipv6_static_routes="myblock cloud vtbgp"
ipv6_route_myblock="2a06:9801:1c::/48 -reject"
ipv6_route_cloud="2a06:9801:1c:1000::/64 2a06:9801:1c:ffff::2"
ipv6_route_vtbgp="2a06:9801:1c:fffe::2/128 -interface gif1"
ipv6_gateway_enable="YES"
```

The iBGP link (gif1) uses addresses from our own /48 (2a06:9801:1c:fffe::/64). This is convenient: those addresses are already routable within our infrastructure. As a result, the iBGP session itself doesn’t depend on any provider-assigned IPv6 addresses being reachable. Only the tunnel’s outer IPv4 endpoints do.
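The -reject route covers the whole /48, yet it doesn’t swallow traffic for radon or vtbgp. The reason is that the most specific matching route wins. Below is a minimal Python sketch of that longest-prefix lookup over hobgp’s static IPv6 routes.

- The table is copied from the rc.conf above.
- Connected and BGP-learned routes are deliberately left out.
- `lookup()` is a hypothetical helper that models only the longest-prefix rule, not FreeBSD’s routing code.

```python
import ipaddress

# hobgp's IPv6 static routes from rc.conf plus the default route, as
# (prefix, action) pairs. Connected and BGP-learned routes are left out.
ROUTES = [
    ("::/0", "default via fe80::1%vtnet0"),
    ("2a06:9801:1c::/48", "reject"),  # -reject: refuse instead of leaking via default
    ("2a06:9801:1c:1000::/64", "via 2a06:9801:1c:ffff::2 (radon)"),
    ("2a06:9801:1c:fffe::2/128", "interface gif1 (vtbgp)"),
]
TABLE = [(ipaddress.ip_network(prefix), action) for prefix, action in ROUTES]


def lookup(dst: str) -> str:
    """Return the action of the most specific route containing dst."""
    addr = ipaddress.ip_address(dst)
    matches = [(net, action) for net, action in TABLE if addr in net]
    _, action = max(matches, key=lambda match: match[0].prefixlen)
    return action


for dst in ("2a06:9801:1c:1000::10", "2a06:9801:1c:fffe::2",
            "2a06:9801:1c:42::1", "2001:db8::1"):
    print(f"{dst:24} -> {lookup(dst)}")
```

Addresses in an unused subnet such as 2a06:9801:1c:42::/64 hit the reject route rather than bouncing back out through the default route.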

FRR Configuration

The FRR configuration grows to four BGP sessions. The substantive additions over the original are local-preference assignment on inbound route-maps, and the iBGP session to vtbgp:

```
frr version 10.5.1
frr defaults traditional
hostname hobgp
log syslog informational
service integrated-vtysh-config
!
ipv6 prefix-list PL-MY-NET seq 5 permit 2a06:9801:1c::/48
!
! [PL-BOGONS: same comprehensive bogon filter as original - see previous article]
!
route-map RM-IFOG-BGPTUNNEL-IN permit 10
 match ipv6 address prefix-list PL-BOGONS
 set local-preference 150
exit
!
route-map RM-IFOG-BGPTUNNEL-OUT permit 10
 match ipv6 address prefix-list PL-MY-NET
exit
!
route-map RM-LAGRANGE-IN permit 10
 match ipv6 address prefix-list PL-BOGONS
exit
!
route-map RM-LAGRANGE-OUT permit 10
 match ipv6 address prefix-list PL-MY-NET
 set as-path prepend 201379 201379
exit
!
route-map RM-ROUTE64-IN permit 10
 match ipv6 address prefix-list PL-BOGONS
exit
!
route-map RM-ROUTE64-OUT permit 10
 match ipv6 address prefix-list PL-MY-NET
exit
!
route-map RM-IBGP-IN permit 10
 set local-preference 200
exit
!
route-map RM-IBGP-OUT permit 10
 match ipv6 address prefix-list PL-MY-NET
exit
!
ipv6 route 2a06:9801:1c::/48 blackhole
ipv6 route 2a06:9801:1c:fffe::2/128 gif1
!
router bgp 201379
 bgp router-id 198.51.100.10
 no bgp default ipv4-unicast
 neighbor 2001:db8:300::1 remote-as 209533
 neighbor 2001:db8:300::1 description Upstream-iFog-Frankfurt
 neighbor 2001:db8:300::1 update-source 2001:db8:300::2
 neighbor fd00:ca::1 remote-as 209735
 neighbor fd00:ca::1 description Upstream-Lagrange-UK
 neighbor fd00:ca::1 update-source fd00:ca::2
 neighbor 2001:db8:400::1 remote-as 212895
 neighbor 2001:db8:400::1 description Upstream-Route64-FRA
 neighbor 2001:db8:400::1 update-source 2001:db8:400::2
 neighbor 2a06:9801:1c:fffe::2 remote-as 201379
 neighbor 2a06:9801:1c:fffe::2 description Edge-Vultr-Frankfurt
 neighbor 2a06:9801:1c:fffe::2 update-source 2a06:9801:1c:fffe::1
 !
 address-family ipv6 unicast
  network 2a06:9801:1c::/48
  neighbor 2001:db8:300::1 activate
  neighbor 2001:db8:300::1 soft-reconfiguration inbound
  neighbor 2001:db8:300::1 maximum-prefix 250000 90 restart 30
  neighbor 2001:db8:300::1 route-map RM-IFOG-BGPTUNNEL-IN in
  neighbor 2001:db8:300::1 route-map RM-IFOG-BGPTUNNEL-OUT out
  neighbor fd00:ca::1 activate
  neighbor fd00:ca::1 soft-reconfiguration inbound
  neighbor fd00:ca::1 maximum-prefix 250000 90 restart 30
  neighbor fd00:ca::1 route-map RM-LAGRANGE-IN in
  neighbor fd00:ca::1 route-map RM-LAGRANGE-OUT out
  neighbor 2001:db8:400::1 activate
  neighbor 2001:db8:400::1 soft-reconfiguration inbound
  neighbor 2001:db8:400::1 maximum-prefix 250000 90 restart 30
  neighbor 2001:db8:400::1 route-map RM-ROUTE64-IN in
  neighbor 2001:db8:400::1 route-map RM-ROUTE64-OUT out
  neighbor 2a06:9801:1c:fffe::2 activate
  neighbor 2a06:9801:1c:fffe::2 soft-reconfiguration inbound
  neighbor 2a06:9801:1c:fffe::2 route-map RM-IBGP-IN in
  neighbor 2a06:9801:1c:fffe::2 route-map RM-IBGP-OUT out
 exit-address-family
exit
```

Local Preference: The Outbound Traffic Hierarchy

Local-preference (LP) is the primary mechanism for outbound path selection within an AS. BGP evaluates LP before AS-path length, making it the dominant decision factor. The hierarchy here is:

| Neighbor | Local Preference | Role |
|---|---|---|
| vtbgp (iBGP/Vultr) | 200 | Preferred exit |
| iFog BGPTunnel | 150 | Secondary exit |
| Lagrange | 100 (default) | Tertiary |
| route64 | 100 (default) | Tertiary |

With LP 200 on iBGP-learned routes, hobgp prefers to send outbound traffic through vtbgp and into Vultr’s network wherever vtbgp has a route. iFog serves as backup when vtbgp lacks a route or the tunnel is down.

Lagrange and route64 are the last resort. They carry traffic only to destinations that neither vtbgp nor iFog has a route for. Between the two of them, the later tie-breakers decide, starting with AS-path length.
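The hierarchy can be expressed as a sort key. This is a deliberately reduced model that assumes only two steps of the decision process matter:

1. highest local-preference first;
2. then shortest AS path.

FRR’s real algorithm has more steps, such as weight, origin, MED, eBGP over iBGP and router ID. The candidate paths and their AS paths below are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Path:
    via: str            # neighbour the route was learned from
    local_pref: int     # set by the inbound route-map (100 if untouched)
    as_path: list[int]  # AS path as received


def best_path(paths: list[Path]) -> Path:
    """Reduced decision process: highest LP first, then shortest AS path."""
    return min(paths, key=lambda p: (-p.local_pref, len(p.as_path)))


# Hypothetical candidates for one destination; the AS paths are made up.
candidates = [
    Path("vtbgp (iBGP)", 200, [20473, 1299, 3320]),
    Path("iFog", 150, [209533, 3320]),
    Path("Lagrange", 100, [209735, 1299, 3320]),
    Path("route64", 100, [212895, 3320]),
]

print(best_path(candidates).via)      # vtbgp: LP 200 beats a shorter AS path
print(best_path(candidates[1:]).via)  # iFog: LP 150 when vtbgp has no route
print(best_path(candidates[2:]).via)  # route64: LP tie, shorter AS path wins
```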

For inbound traffic - what the rest of the internet chooses - the tool is different: you control what you announce and how attractive you make it.

RM-LAGRANGE-OUT adds two extra prepends (set as-path prepend 201379 201379). The AS path via Lagrange therefore carries 201379 three times instead of once, which makes it two hops longer than the same route via iFog or route64. Networks that don’t override the choice with their own local-preference see a shorter path via iFog, route64 or Vultr and prefer it. That demotes Lagrange to a true backup.
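Assume a remote network hears our prefix from each upstream with equal local-preference and has no other policy. Its comparison then falls through to AS-path length. The Python sketch below shows the paths it would receive.

- The remote network and its neutrality are assumptions.
- Vultr is left out for simplicity.

```python
OUR_AS = 201379


def received_path(upstream_as: int, prepends: int = 0) -> list[int]:
    """AS path a remote network sees for our /48 via one upstream.

    We send OUR_AS once plus `prepends` extra copies; the upstream then
    puts its own AS in front when it re-advertises the route.
    """
    return [upstream_as] + [OUR_AS] * (1 + prepends)


paths = {
    "iFog": received_path(209533),
    "route64": received_path(212895),
    "Lagrange": received_path(209735, prepends=2),  # RM-LAGRANGE-OUT
}
for name, path in paths.items():
    print(f"{name:9} length {len(path)}: {path}")

# A neutral remote network picks the shortest path (ties broken here by dict order only).
print("preferred:", min(paths, key=lambda name: len(paths[name])))
```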

A Note on Free Transit

Both iFog’s BGPTunnel.com service and route64.org offer free BGP transit aimed at hobbyists and researchers. BGPTunnel provides a GRE tunnel to iFog’s Frankfurt infrastructure and announces your prefix to their peers. route64 works similarly. Neither carries SLAs suitable for production, but for learning and redundancy in a hobby setup they’re genuinely useful. The community around these services is active and documentation is good.

The Edge Router: vtbgp

The second router runs on Vultr in Frankfurt. Its role is narrow: announce 2a06:9801:1c::/48 into Vultr’s network and pass routing information back to hobgp via iBGP. It does not forward arbitrary internet traffic.

Why Vultr?

Vultr offers a BGP service where customers announce their own prefixes through Vultr’s infrastructure. Vultr’s network (AS20473 for customer connectivity, AS64515 for BGP peering sessions) has good upstream diversity and significant peering at major exchanges. Networks that peer with or transit through Vultr have a direct path to a locally-announced prefix, resulting in measurably shorter routes than if they had to reach across to an independent transit provider.

Network Configuration

```
hostname="vtbgp"
kern_securelevel_enable="YES"
kern_securelevel="2"
kld_list="if_gif tcpmd5"

# Physical interface (Vultr-assigned)
ifconfig_vtnet0="inet 203.0.113.20/23 -rxcsum -tso"
ifconfig_vtnet0_ipv6="inet6 2001:db8:f480::20/64 -rxcsum6 -tso6 accept_rtadv"
defaultrouter="203.0.113.1"

# GIF tunnel back to core (hobgp)
cloned_interfaces="gif0"
ifconfig_gif0="tunnel 203.0.113.20 198.51.100.10 mtu 1480"
ifconfig_gif0_ipv6="inet6 2a06:9801:1c:fffe::2 2a06:9801:1c:fffe::1 prefixlen 128"
ifconfig_gif0_descr="Uplink-to-hobgp"

# Routing
ipv6_gateway_enable="YES"
ipv6_static_routes="myblock vultrbgp"
ipv6_route_myblock="2a06:9801:1c::/48 -interface gif0"
ipv6_route_vultrbgp="2001:19f0:ffff::1 fe80::1%vtnet0"

# Services
pf_enable="YES"
frr_enable="YES"
sshd_enable="YES"
ipsec_enable="YES"
rtsold_enable="YES"
rtsold_flags="-aF"
```

Three details stand out:

ipv6_route_myblock points the entire /48 at gif0. Any traffic for our prefix arriving at vtbgp via Vultr’s infrastructure gets forwarded through the tunnel to hobgp, which handles actual routing to downstream servers.

ipv6_route_vultrbgp is a host route to Vultr’s BGP peering address (2001:19f0:ffff::1) via the link-local next-hop. Vultr’s peering address isn’t directly connected - it’s reachable via Vultr’s own routing - so this static route tells the kernel where to send packets destined for it. FRR uses ebgp-multihop 2 to allow the BGP session to traverse that extra hop.

The IPv6 address on vtnet0 is configured statically. rtsold solicits router advertisements on that interface, so vtbgp learns Vultr’s IPv6 default gateway from them.

A reader might wonder how vtbgp reaches the internet for its own traffic (software updates, DNS, the BGP session setup itself), given that it deliberately doesn’t install the 240,000 BGP routes into the kernel. The answer is a handful of ordinary routes that are completely independent of the BGP routing table:

- the IPv4 defaultrouter="203.0.113.1" in rc.conf;
- the IPv6 default gateway learned from router advertisements;
- for the BGP session itself, the ipv6_route_vultrbgp host route.

The BGP table exists solely for FRR’s own decision-making and for propagation to hobgp via iBGP.

TCP-MD5 Authentication

Vultr requires TCP-MD5 authentication (RFC 2385) on BGP sessions. The mechanism is decades old and MD5 is no longer considered a strong hash. It nevertheless remains standard across the industry, and most carriers require it for BGP sessions. It prevents trivial session disruption by third parties who don’t know the shared key.

On FreeBSD, TCP-MD5 is implemented via the IPsec subsystem. The security associations live in /etc/ipsec.conf:

```
add 2001:db8:f480::20 2001:19f0:ffff::1 tcp 0x1000 -A tcp-md5 "your-shared-secret";
add 2001:19f0:ffff::1 2001:db8:f480::20 tcp 0x1000 -A tcp-md5 "your-shared-secret";
```

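The SPI value (0x1000) is fixed. It is the value FreeBSD’s kernel uses to look up TCP-MD5 associations, not something negotiated with the peer.

Two pieces have to be in place:

- ipsec_enable="YES" in rc.conf, so the SAs get loaded;
- the password setting on the neighbor, which makes FRR enable TCP-MD5 on the BGP socket.

The kernel then signs and verifies segments with the key from the matching SA. The shared secret comes from Vultr’s BGP configuration panel.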
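The SA must exist in both directions with identical keys, and a typo in one line breaks the session in a confusing way. The hypothetical helper below (not part of FreeBSD or FRR) generates the pair of setkey(8) lines for one peer. It only formats text; its output is what goes into /etc/ipsec.conf.

```python
TCP_MD5_SPI = 0x1000  # fixed SPI for TCP-MD5 SAs on FreeBSD


def tcp_md5_sas(local: str, peer: str, secret: str) -> list[str]:
    """Return setkey(8) 'add' lines covering both directions of one session."""
    if '"' in secret:
        raise ValueError("secret must not contain a double quote")
    return [
        f'add {src} {dst} tcp {TCP_MD5_SPI:#x} -A tcp-md5 "{secret}";'
        for src, dst in ((local, peer), (peer, local))
    ]


for line in tcp_md5_sas("2001:db8:f480::20", "2001:19f0:ffff::1", "your-shared-secret"):
    print(line)
```

Run it once per TCP-MD5 neighbor and keep the two lines together in the file.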

FRR Configuration

```
frr version 10.5.1
frr defaults traditional
hostname vtbgp
log syslog informational
service integrated-vtysh-config
!
ipv6 prefix-list PL-MY-NET seq 5 permit 2a06:9801:1c::/48
! [PL-BOGONS: same bogon filter as hobgp]
!
route-map RM-VULTR-OUT permit 10
 match ipv6 address prefix-list PL-MY-NET
exit
!
route-map RM-VULTR-IN permit 10
 match ipv6 address prefix-list PL-BOGONS
exit
!
route-map RM-IBGP-OUT permit 10
exit
!
route-map RM-KERNEL-DENY deny 10
exit
!
router bgp 201379
 bgp router-id 203.0.113.20
 no bgp default ipv4-unicast
 neighbor 2001:19f0:ffff::1 remote-as 64515
 neighbor 2001:19f0:ffff::1 description Vultr-Core
 neighbor 2001:19f0:ffff::1 password your-shared-secret
 neighbor 2001:19f0:ffff::1 ebgp-multihop 2
 neighbor 2a06:9801:1c:fffe::1 remote-as 201379
 neighbor 2a06:9801:1c:fffe::1 description Core-hobgp
 neighbor 2a06:9801:1c:fffe::1 update-source 2a06:9801:1c:fffe::2
 !
 address-family ipv6 unicast
  neighbor 2001:19f0:ffff::1 activate
  neighbor 2001:19f0:ffff::1 soft-reconfiguration inbound
  neighbor 2001:19f0:ffff::1 route-map RM-VULTR-IN in
  neighbor 2001:19f0:ffff::1 route-map RM-VULTR-OUT out
  neighbor 2a06:9801:1c:fffe::1 activate
  neighbor 2a06:9801:1c:fffe::1 next-hop-self
  neighbor 2a06:9801:1c:fffe::1 route-map RM-IBGP-OUT out
 exit-address-family
exit
!
ipv6 protocol bgp route-map RM-KERNEL-DENY
```

Two things here deserve careful explanation.

RM-KERNEL-DENY: Keep the Routing Table Clean

ipv6 protocol bgp route-map RM-KERNEL-DENY applies a deny-all route-map to every BGP route before FRR tries to install it into the kernel’s forwarding table. The result: FRR maintains its full BGP table internally (~240,000 routes), but none of those routes appear in the kernel.

This is intentional. vtbgp’s job is to announce our prefix, not to forward arbitrary internet traffic. The only forwarding it does is for traffic arriving destined for 2a06:9801:1c::/48, which is handled by the static route pointing at gif0. Pushing 240,000 routes into the kernel would be wasteful memory consumption with no benefit.

FRR still uses its internal table for its own purposes and still sends routes to hobgp via iBGP - they just never touch the kernel’s FIB on vtbgp.
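Here is a toy model of that split, assuming routes are reduced to a prefix and a source protocol. Everything stays in FRR’s table, and only routes whose protocol is not denied are candidates for the kernel.

- The BGP prefixes are documentation placeholders standing in for the full table.
- The Route type and fib_routes() are illustrative names, not FRR internals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    prefix: str
    protocol: str  # "bgp", "kernel", "connected", ...


def fib_routes(rib: list[Route], denied: set[str]) -> list[Route]:
    """Routes eligible for the kernel FIB after per-protocol deny filters."""
    return [route for route in rib if route.protocol not in denied]


rib = [
    Route("2001:db8:1::/48", "bgp"),              # stand-ins for ~240,000 BGP routes
    Route("2001:db8:2::/32", "bgp"),
    Route("2a06:9801:1c::/48", "kernel"),         # rc.conf static route to gif0
    Route("2a06:9801:1c:fffe::1/128", "connected"),
]
fib = fib_routes(rib, denied={"bgp"})  # effect of RM-KERNEL-DENY
print(f"{len(rib)} routes known to FRR, {len(fib)} eligible for the kernel")
```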

A note for readers familiar with FRR internals: Zebra is FRR’s routing manager. It mediates between the protocol daemons (BGP, OSPF, etc.) and the OS, and it is the canonical control point for RIB-to-FIB separation.

ipv6 protocol bgp route-map is itself a Zebra command. bgpd still hands its best paths to Zebra, and Zebra’s protocol filter declines to install them in the kernel.

The alternative is to stop the routes one step earlier, before bgpd ever sends them to Zebra. That can be done with the global bgp no-rib command, or by starting bgpd with --no_kernel. At this scale the distinction is academic.

next-hop-self: Making iBGP Routes Usable

When vtbgp receives a route from Vultr (next-hop: 2001:19f0:ffff::1) and re-advertises it to hobgp via iBGP, the standard iBGP behavior is to preserve the original next-hop. hobgp can’t reach 2001:19f0:ffff::1 directly - that’s inside Vultr’s network, reachable from vtbgp but not from hobgp.

next-hop-self rewrites the next-hop to vtbgp’s own address (2a06:9801:1c:fffe::2) before sending routes to hobgp. hobgp reaches that address via gif1, so the routes become actionable: “to get to this destination, send traffic down the iBGP tunnel to vtbgp.” Without next-hop-self, hobgp would receive routes with unreachable next-hops and ignore them.
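Here is a minimal Python model of the rewrite. It assumes hobgp can resolve only next-hops reachable over gif1. Real FRR can also resolve next-hops recursively through other routes, which this sketch deliberately ignores. The destination prefix is a documentation placeholder.

```python
import ipaddress
from dataclasses import dataclass, replace

VTBGP_TUNNEL_ADDR = "2a06:9801:1c:fffe::2"
# Next-hops hobgp can reach directly: just vtbgp's end of gif1 in this model.
HOBGP_REACHABLE = [ipaddress.ip_network("2a06:9801:1c:fffe::2/128")]


@dataclass(frozen=True)
class Route:
    prefix: str
    next_hop: str


def advertise_over_ibgp(route: Route, next_hop_self: bool) -> Route:
    """The route vtbgp sends to hobgp for something it learned from Vultr."""
    return replace(route, next_hop=VTBGP_TUNNEL_ADDR) if next_hop_self else route


def usable_on_hobgp(route: Route) -> bool:
    next_hop = ipaddress.ip_address(route.next_hop)
    return any(next_hop in net for net in HOBGP_REACHABLE)


learned = Route("2001:db8:abcd::/48", next_hop="2001:19f0:ffff::1")
for nhs in (False, True):
    sent = advertise_over_ibgp(learned, next_hop_self=nhs)
    print(f"next-hop-self={nhs}: next-hop {sent.next_hop}, usable={usable_on_hobgp(sent)}")
```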

iBGP: Connecting the Two Routers

iBGP (internal BGP) runs between routers in the same AS. It has different semantics from eBGP in two important ways:

First, the split-horizon rule: routes learned via iBGP are not re-advertised to other iBGP peers. For a two-router setup this doesn’t matter - each router hears from the other directly. For larger networks with three or more routers, you’d need route reflection or a full mesh; with two, a single session is a complete solution.

Second, local-preference propagation: LP is carried across iBGP sessions. Routes that vtbgp receives from Vultr carry LP 100 (default) until hobgp’s RM-IBGP-IN overrides them to 200. This means hobgp’s outbound preference for the Vultr path is configured entirely on hobgp - vtbgp doesn’t need to know anything about the preference hierarchy.
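The split-horizon rule is what makes mesh size matter. Below is a short Python sketch of the advertisement rule and of the n(n-1)/2 session count it implies. It assumes a plain full mesh with no route reflectors or confederations.

```python
def may_advertise(learned_from: str, to_peer: str) -> bool:
    """iBGP split horizon: an iBGP-learned route is never sent to another iBGP peer."""
    return not (learned_from == "ibgp" and to_peer == "ibgp")


def full_mesh_sessions(routers: int) -> int:
    """iBGP sessions needed for a full mesh without route reflectors."""
    return routers * (routers - 1) // 2


print(may_advertise("ebgp", "ibgp"))  # True: upstream routes go to iBGP peers
print(may_advertise("ibgp", "ebgp"))  # True: iBGP routes may go to eBGP peers
print(may_advertise("ibgp", "ibgp"))  # False: blocked by split horizon
for n in (2, 3, 5, 10):
    print(f"{n} routers -> {full_mesh_sessions(n)} sessions")
```

Two routers need exactly one session, while ten would already need 45. That growth is the reason larger networks move to route reflection.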

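The session itself is mechanically simple. On hobgp, vtbgp is a neighbor with remote-as 201379 (our own AS number). FRR recognizes same-AS neighbors as iBGP peers automatically.

The session runs over the gif1/gif0 tunnel pair without a TCP-MD5 key. Its protection comes from two things:

- each GIF interface only accepts packets from its configured outer endpoint;
- the session addresses sit inside our own address space.

That is a weaker guarantee than real authentication.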

PF on a Transit Router: The Stateless Requirement

Operating a transit router exposes a fundamental tension in stateful packet filtering. On a server, stateful rules are ideal: track TCP connections, match replies to established state, drop unsolicited packets. On a transit router, stateful rules for through-traffic break things.

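The reason is asymmetric routing. When hobgp receives a packet on gre0 (via iFog) destined for a host behind gif0, PF creates a state entry for that flow. The destination host responds and the packet traverses gif0 back to hobgp. The best exit path might then be gre1 (Lagrange), which differs from the arrival interface.

With interface-bound states (set state-policy if-bound), PF sees the reply on gre1 with no matching state on that interface and drops it. PF’s default floating state policy avoids that particular mismatch. It cannot help, though, when one direction of a flow bypasses the router altogether, which becomes routine once the prefix is announced from two routers.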

This isn’t an edge case. Path asymmetry is the norm on the public internet. A request and its reply routinely traverse different providers, different exchange points, different physical paths.

```
Request: Client ──> [iFog / gre0] ──> hobgp ──> [gif0] ──> Server
                                                              │
Reply:   Client <── [Lagrange / gre1] <── hobgp <── [gif0] <──┘
                    ^^^^^^^^^^^^^^^^^
                    PF drops it: state was created on gre0, reply arrives on gre1
```

The solution is to keep transit traffic stateless:

```
# Transit traffic: no state, no asymmetry problems
pass in quick inet6 from any to $my_network_v6 no state
pass out quick inet6 from any to $my_network_v6 no state
pass in quick inet6 from $my_network_v6 to any no state
pass out quick inet6 from $my_network_v6 to any no state
```

These four rules cover all IPv6 traffic to or from 2a06:9801:1c::/48. no state means PF allows the packets without creating any tracking entry. Each packet is evaluated independently on its own merits.
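The failure in the diagram can be reproduced with a toy Python model. It assumes interface-bound state, which is the behaviour the diagram depicts; PF’s own default, set state-policy floating, lets states match on any interface. The model covers only the state lookup, not PF’s TCP tracking.

```python
from typing import NamedTuple


class Packet(NamedTuple):
    iface: str
    src: str
    dst: str


def flow(p: Packet) -> frozenset:
    """Direction-independent flow key, so a reply maps to its request."""
    return frozenset((p.src, p.dst))


def filter_packets(packets: list[Packet], stateful: bool) -> list[str]:
    states: set[tuple[str, frozenset]] = set()  # (interface, flow): if-bound states
    verdicts = []
    for p in packets:
        if not stateful:
            verdicts.append("pass")             # judged on addresses alone
        elif p.dst == "server":                 # request: state bound to arrival iface
            states.add((p.iface, flow(p)))
            verdicts.append("pass")
        elif (p.iface, flow(p)) in states:      # reply must match on the same iface
            verdicts.append("pass")
        else:
            verdicts.append("drop")
    return verdicts


request = Packet("gre0", "client", "server")
reply = Packet("gre1", "server", "client")
print("stateful: ", filter_packets([request, reply], stateful=True))
print("stateless:", filter_packets([request, reply], stateful=False))
```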

But not everything can be stateless. The router itself originates traffic (DNS queries, software updates, its own side of the BGP sessions), and those flows need state tracking to match replies. The control plane (SSH and inbound BGP sessions) needs state as well. The solution is a three-tier PF structure in which rule order determines which rule wins:

```
# Tier 1: Control plane - stateful, locked to known peers
pass in quick proto tcp from <bgp_peers> to any port 179 keep state
pass out quick proto tcp from any to <bgp_peers> port 179 keep state

# Tier 2: Router's own traffic - stateful for outbound connectivity
pass in quick inet6 from any to $my_router_ip keep state
pass out quick inet6 from $my_router_ip to any keep state

# Tier 3: Transit traffic - stateless for asymmetric routing
pass in quick inet6 from any to $my_network_v6 no state
pass out quick inet6 from any to $my_network_v6 no state
pass in quick inet6 from $my_network_v6 to any no state
pass out quick inet6 from $my_network_v6 to any no state
```

PF evaluates quick rules in order and stops at the first match. Traffic to $my_router_ip (2a06:9801:1c::1, the router’s address within our prefix) matches Tier 2 and gets state tracking. Everything else in the /48 falls through to Tier 3 and flows stateless.
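The first-match logic can be checked with a small Python sketch. It models rule order only, not pf’s full semantics, and protocol matching is left out. The macro and table values are assumptions filled in from this article:

- $my_network_v6 is the /48;
- $my_router_ip is 2a06:9801:1c::1;
- <bgp_peers> holds hobgp’s four neighbours.

```python
import ipaddress

MY_NETWORK_V6 = ipaddress.ip_network("2a06:9801:1c::/48")
MY_ROUTER_IP = ipaddress.ip_address("2a06:9801:1c::1")
BGP_PEERS = {ipaddress.ip_address(a) for a in (
    "2001:db8:300::1", "fd00:ca::1", "2001:db8:400::1", "2a06:9801:1c:fffe::2")}


def tier1_in(direction, src, dst, dport):
    return direction == "in" and src in BGP_PEERS and dport == 179


def tier1_out(direction, src, dst, dport):
    return direction == "out" and dst in BGP_PEERS and dport == 179


def tier2_in(direction, src, dst, dport):
    return direction == "in" and dst == MY_ROUTER_IP


def tier2_out(direction, src, dst, dport):
    return direction == "out" and src == MY_ROUTER_IP


def tier3(direction, src, dst, dport):
    # The four stateless rules collapse to "to or from our /48, either direction".
    return dst in MY_NETWORK_V6 or src in MY_NETWORK_V6


# (tier, match, keep_state) in pf.conf order; every rule is "quick".
RULES = [
    ("tier1", tier1_in, True), ("tier1", tier1_out, True),
    ("tier2", tier2_in, True), ("tier2", tier2_out, True),
    ("tier3", tier3, False),
]


def classify(direction, src, dst, dport=None):
    """Return (tier, keep_state) of the first matching quick rule."""
    s, d = ipaddress.ip_address(src), ipaddress.ip_address(dst)
    for name, match, keep_state in RULES:
        if match(direction, s, d, dport):
            return name, keep_state
    return None, None


print(classify("in", "2a06:9801:1c:fffe::2", "2a06:9801:1c:fffe::1", 179))
print(classify("in", "2001:db8::99", "2a06:9801:1c::1", 22))
print(classify("in", "2001:db8::99", "2a06:9801:1c:1000::10", 443))
```

The first example is the interesting one. The iBGP session runs between addresses inside our own /48, so it only gets state because Tier 1 sits above Tier 3.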

NAT for Router-Originated Traffic

There’s a related problem: hobgp’s physical interface has a Hetzner-assigned IPv6 address (2001:db8:1c19::1). When the router sends traffic out a GRE tunnel using that address as the source, it’s sending from an address with no relation to our announced prefix. Transit providers that filter customer source addresses may drop such packets outright. Where they do pass, return traffic to that address may take a completely different path or simply fail.

The fix is NAT on the tunnel interfaces, translating source addresses outside the /48 to the router’s own address inside it:

```
nat on gre0 inet6 from ! $my_network_v6 to ! $peer_ifog -> $my_router_ip
nat on gre1 inet6 from ! $my_network_v6 to ! $peer_lagrange -> $my_router_ip
nat on gre2 inet6 from ! $my_network_v6 to ! $peer_route64 -> $my_router_ip
nat on gif1 inet6 from ! $my_network_v6 to any -> $my_router_ip
```

Reading the first rule: packets exiting gre0, with a source address not in our /48, destined for anywhere other than the iFog BGP peer itself, get their source rewritten to $my_router_ip (2a06:9801:1c::1). The peer exclusion is critical - BGP sessions must use the tunnel link address, not the NATed address, for the session to work. Everything else the router originates - traceroutes, pings, package fetches - appears on the internet as 2a06:9801:1c::1, an address inside our announced prefix.
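The rewrite decision made by these four rules can be written out as a Python function. This is a sketch that assumes the rules behave as described above; the actual translation happens inside pf. source_after_nat() and the PEER_ON_IFACE mapping are hypothetical names.

```python
import ipaddress

MY_NETWORK_V6 = ipaddress.ip_network("2a06:9801:1c::/48")
MY_ROUTER_IP = "2a06:9801:1c::1"
# The $peer_* macro for each tunnel with a nat rule; None means "to any".
PEER_ON_IFACE = {
    "gre0": "2001:db8:300::1",   # iFog
    "gre1": "fd00:ca::1",        # Lagrange
    "gre2": "2001:db8:400::1",   # route64
    "gif1": None,                # iBGP link to vtbgp
}


def source_after_nat(iface: str, src: str, dst: str) -> str:
    """Source address a router-originated packet leaves `iface` with."""
    if iface not in PEER_ON_IFACE:
        return src                                   # no nat rule on this interface
    if ipaddress.ip_address(src) in MY_NETWORK_V6:
        return src                                   # already inside our /48
    peer = PEER_ON_IFACE[iface]
    if peer is not None and ipaddress.ip_address(dst) == ipaddress.ip_address(peer):
        return src                                   # BGP peer keeps the link address
    return MY_ROUTER_IP


print(source_after_nat("gre0", "2001:db8:300::2", "2001:db8:300::1"))   # BGP session
print(source_after_nat("gre0", "2001:db8:1c19::1", "2001:db8:53::53"))  # e.g. a DNS query
```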

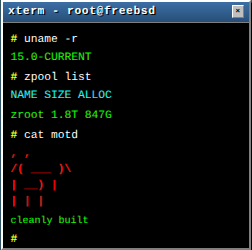

Verification

vtysh -c 'show bgp ipv6 summary' on hobgp shows four sessions in established state:

```
Neighbor             V     AS  MsgRcvd  MsgSent   Up/Down  State/PfxRcd  Desc
2001:db8:300::1      4 209533  1873391    10601  16:21:35        237902  Upstream-iFog-FRA
fd00:ca::1           4 209735  1267858     1771  1d05h26m        239352  Upstream-Lagrange-UK
2001:db8:400::1      4 212895   609199     1771  1d05h26m        238542  Upstream-Route64-FRA
2a06:9801:1c:fffe::2 4 201379  1370565     1771  1d05h26m        229257  Edge-Vultr-Frankfurt
```

Four peers, three carrying the full DFZ table (~237-239K prefixes each), one iBGP peer with a partial Vultr view (~229K prefixes). All in established state.
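That check can be turned into a script with a small Python parser. It assumes the one-line-per-neighbour layout shown above:

- the State/PfxRcd column holds a prefix count once a session is Established;
- it holds a state name such as Active or Idle otherwise;
- only rows whose second column is the BGP version "4" are treated as neighbour rows.

Plain-text layout can vary between FRR versions and options. For anything beyond a quick check, show bgp ipv6 summary json is the sturdier input.

```python
from typing import Dict, Union

SUMMARY = """\
Neighbor V AS MsgRcvd MsgSent Up/Down State/PfxRcd Desc
2001:db8:300::1 4 209533 1873391 10601 16:21:35 237902 Upstream-iFog-FRA
fd00:ca::1 4 209735 1267858 1771 1d05h26m 239352 Upstream-Lagrange-UK
2001:db8:400::1 4 212895 609199 1771 1d05h26m 238542 Upstream-Route64-FRA
2a06:9801:1c:fffe::2 4 201379 1370565 1771 1d05h26m 229257 Edge-Vultr-Frankfurt
"""


def session_states(text: str) -> Dict[str, Union[int, str]]:
    """Map neighbour -> prefix count if Established, else the state name."""
    states = {}
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 7 or fields[1] != "4":
            continue                              # header or non-neighbour line
        neighbor, state = fields[0], fields[6]
        states[neighbor] = int(state) if state.isdigit() else state
    return states


states = session_states(SUMMARY)
down = [n for n, s in states.items() if not isinstance(s, int)]
print(f"{len(states) - len(down)}/{len(states)} established, down: {down or 'none'}")
```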

The real test is whether different networks actually use different paths. MTR traces from three vantage points answer this directly.

From Deutsche Telekom (heavy Vultr peering):

```
HOST: client.example.com                                Loss%  Snt   Last    Avg
...
  9.|-- constantcompany-ic-375791.ip.twelve99-cust.net   0.0%   10   25.9   26.7
 10.|-- ethernetae1-sr2.fkt3.constant.com                0.0%   10   23.4   29.6
 11.|-- 2001:19f0:6c00:154::33                           0.0%   10   24.1   23.7
 12.|-- vtbgp.example.com                                0.0%   10   26.8   26.3
 13.|-- hobgp.example.com                                0.0%   10   27.1   26.6
 14.|-- radon.example.com                                0.0%   10   27.9   31.2
 15.|-- 2a06:9801:1c:1000::10                            0.0%   10   27.1   30.4
```

Hops 9-11 belong to The Constant Company, the company behind Vultr (AS20473). The twelve99-cust.net name on hop 9 marks the interconnect from Arelion (AS1299) into Vultr’s network. The traffic enters via vtbgp (hop 12), crosses the iBGP tunnel to hobgp (hop 13), and reaches the downstream server. The Vultr announcement is doing exactly what it should for traffic from DTAG-connected networks.

From Netcup (via AORTA/Vienna Internet Exchange):

```
HOST: server.example.com        Loss%  Snt   Last    Avg
...
  9.|-- 2001:7f8:15e::64         0.0%   10   16.7   16.7
 10.|-- 2a0d:5440:1::f           0.0%   10   15.8   15.9
 11.|-- hobgp.example.com        0.0%   10   19.9   19.6
 12.|-- radon.example.com        0.0%   10   20.7   21.4
 13.|-- 2a06:9801:1c:1000::10    0.0%   10   20.8   22.0
```

Hop 9 (2001:7f8:15e::64) is an internet exchange peering LAN address; 2001:7f8::/29 is the block from which RIPE NCC assigns IXP peering LANs. Hop 10 (2a0d:5440:1::f) belongs to route64 (AS212895). The traffic entered via route64 at an exchange point and arrived directly at hobgp, with no vtbgp involved.
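Reading traces like these comes down to checking hop addresses against known ranges. Here is a small Python sketch using only the two ranges named in this article. The KNOWN table is illustrative and would need extending, for example with each upstream’s own prefixes, to label every hop.

```python
import ipaddress

# Ranges named in this article; anything else is reported as "other".
KNOWN = {
    "IXP peering LAN": ipaddress.ip_network("2001:7f8::/29"),
    "AS201379 (ours)": ipaddress.ip_network("2a06:9801:1c::/48"),
}


def label(hop: str) -> str:
    addr = ipaddress.ip_address(hop)
    for name, net in KNOWN.items():
        if addr in net:
            return name
    return "other"


for hop in ("2001:7f8:15e::64", "2a0d:5440:1::f", "2a06:9801:1c:1000::10"):
    print(f"{hop:24} -> {label(hop)}")
```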

From another Hetzner server (Falkenstein):

```
HOST: server3.example.com           Loss%  Snt   Last    Avg
...
  8.|-- 2001:7f8:11c:1:0:20:9533:1   0.0%   10    5.5    5.6
  9.|-- hobgp.example.com            0.0%   10    9.5    9.6
 10.|-- radon.example.com            0.0%   10   10.3   16.4
 11.|-- 2a06:9801:1c:1000::10        0.0%   10   10.5   11.3
```

Hop 8 is a DE-CIX Frankfurt peering LAN address (the 2001:7f8:11c range belongs to DE-CIX). Its :20:9533:1 suffix encodes AS209533, so this is iFog’s router at the exchange. From there the traffic reaches hobgp in single-digit milliseconds, and hobgp answers from Hetzner in under 10 ms.

Three different paths, three different entry points. Each path is topologically shorter for its respective traffic class than routing everything through a single upstream would be.

Meta: The destination address in every trace above, 2a06:9801:1c:1000::10, is this blog. If you’re reading this article over IPv6, you just arrived here via one of those paths.

What This Costs

Running your own IPv6 AS is surprisingly inexpensive. Here are the actual numbers for this setup, with monthly equivalents in euros.

| Item | Actual cost | Monthly (approx. €) |
|---|---|---|
| ASN registration (one-time) | £15 | – |
| ASN annual fee | £54.99/year | €5.50 |
| PA /48 IPv6 block | £7/year | €0.70 |
| Hetzner CX23 (core router, hobgp) | €4.15/month | €4.15 |
| Vultr vc2-1c-1gb (edge router, vtbgp) | $6.00/month | €5.50 |
| Lagrange Cloud transit (100 Mbps) | £2/month | €2.40 |
| iFog BGPTunnel.com (100 Mbps) | Free (non-commercial) | €0 |
| route64.org (200 Mbps) | Free | €0 |
| Vultr BGP | Included in VPS | €0 |
| Total recurring | | ≈ €18/month |

That is less than many people pay for a single mid-range VPS - and it buys a redundant multi-homed autonomous system with 1.4 Gbps of available transit capacity.
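The recurring total can be checked by summing the monthly column. The euro figures are the table’s own approximate conversions, not live exchange rates, and the one-time ASN registration fee is left out.

```python
from decimal import Decimal

# Monthly euro equivalents from the cost table (approximate conversions).
MONTHLY_EUR = {
    "ASN annual fee": Decimal("5.50"),
    "PA /48 IPv6 block": Decimal("0.70"),
    "Hetzner CX23 (hobgp)": Decimal("4.15"),
    "Vultr vc2-1c-1gb (vtbgp)": Decimal("5.50"),
    "Lagrange Cloud transit": Decimal("2.40"),
    "iFog BGPTunnel.com": Decimal("0"),
    "route64.org": Decimal("0"),
    "Vultr BGP": Decimal("0"),
}

total = sum(MONTHLY_EUR.values())
print(f"recurring: €{total}/month, €{total * 12}/year")
```

That works out to €18.25 a month, which the table rounds to about €18.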

The reason IPv6-only is so accessible comes down to address space economics. An IPv4 PA block costs thousands of euros per year through a sponsoring LIR and requires documented justification. An IPv6 /48 costs about €8 a year.

The transit situation is similarly skewed. iFog and route64 offer free GRE-based transit specifically because IPv6-only hobbyists place negligible load on their networks. The free tier is a real service, not a trial.

The Vultr edge router ties with the ASN fee as the largest recurring cost. It pays for something specific: native BGP peering with Vultr’s infrastructure, which reaches networks that simply don’t have a short path to any other upstream in this setup. If path diversity from Vultr-connected networks isn’t a priority, the edge router is optional. The setup then collapses back to a single core router at under €5/month in compute costs.

Lessons Learned

Stateless transit rules are not optional. Getting this wrong produces subtle failures: ICMP works but TCP stalls, small packets flow freely but large ones don’t, connections from one direction succeed while the other direction silently drops. When troubleshooting transit routing on PF, the first question is always whether state tracking is interfering with asymmetric paths.

iBGP between two routers is straightforward. The iBGP full-mesh requirement sounds complicated, but with exactly two routers it’s trivially satisfied by a single session configured on both sides. The main configuration concern is next-hop-self, which is required whenever the iBGP peers can’t directly reach the eBGP next-hops learned from upstream.

RM-KERNEL-DENY is useful for announcement-only nodes. A router whose job is to announce a prefix and forward traffic for that prefix back through a tunnel doesn’t need 240,000 kernel routes. Keeping the kernel’s forwarding table (FIB) clean saves memory, avoids confusing interactions with static routes, and makes the router’s actual forwarding intent explicit.

Vultr’s BGP service reaches a meaningfully different part of the internet. The DTAG traceroute shows traffic handed from Arelion straight into Vultr’s own network (The Constant Company), a path that simply doesn’t exist via iFog or route64. The two sets of upstreams complement each other. For traffic where both would work, local-preference makes the decision; for traffic where only one path exists, the choice is made for you.

Free transit providers provide real diversity. BGPTunnel.com, route64.org, and similar services offer transit that’s genuinely usable for learning and redundancy. Three upstreams with different Frankfurt exchange presence means real path diversity without transit fees.

Conclusion

The gap between a single-router BGP setup and a distributed multi-homed network is mostly conceptual, not computational. The same FreeBSD tools - FRR, PF, GIF/GRE tunnels - handle the expanded setup. iBGP adds one session. The third upstream adds another GRE tunnel and two route-maps. The edge router requires TCP-MD5 and a careful decision to keep routes out of the kernel.

What changes is what the setup can do. Three entry points mean inbound traffic takes shorter paths for a larger portion of the internet. Local-preference makes outbound path selection explicit and tunable. The iBGP architecture means either router can be rebooted without taking down the other’s upstream sessions.

The next logical evolution would be geographic diversity: a PoP in a different region with genuinely independent upstream connectivity. But two German PoPs (Nuremberg and Frankfurt) with four upstreams between them are already a substantial improvement over a single machine with one vendor’s connectivity. The lessons from building this setup transfer directly to any larger scale.

Each of those traceroutes ends the same way: hop 11 or hop 13 or hop 15, an address in 2a06:9801:1c::/48. The path that gets it there varies by two thousand kilometres and half a dozen autonomous systems, chosen automatically by the routing protocol. That’s what multi-homing looks like when it’s working.
