Everybody has a backup strategy. Some people’s backup strategy is “I should really set up backups.” Others involve a cronjob that worked once in 2019 and has been silently failing ever since. A select few involve a USB drive in a desk drawer labeled “IMPORTANT” in permanent marker.

Mine layers ZFS snapshots, off-site replication, a Proxmox Backup Server that’s really just a proxy in front of cheap object storage, a Podman container doing something that would make a packaging engineer cry, and a dead man’s switch that pages me if any of it stops working. It’s not elegant in every layer, but it covers roughly a dozen servers across FreeBSD and Linux, and it lets me sleep at night.

The classic 3-2-1 backup rule says: three copies of your data, on two different media types, with one copy off-site. What I’m about to describe exceeds it in some areas and only meets it in others - but the principle is sound, and if you take nothing else from this article, take 3-2-1.

Before diving into tools, it helps to name the threats each layer addresses:

- Accidental deletion or bad deploys → local ZFS snapshots (instant rollback)

- Disk or pool failure → off-site replication to rsync.net

- Total host loss → remote backups via PBS + S3 and syncoid

- Silent backup failure → dead man’s switch monitoring

- Corrupt or incomplete backups → quarterly restore tests

Every layer below maps to at least one of these. If you’re designing your own strategy, start from your threat model and work outward - the tools are interchangeable, the thinking isn’t.


Layer 1: ZFS Snapshots with Sanoid (FreeBSD)

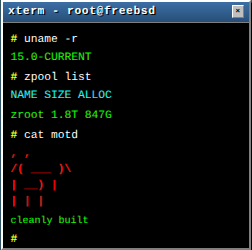

All my FreeBSD servers run ZFS. If you’re on FreeBSD and not using ZFS, I have questions. (If you want answers about ZFS first, I wrote an entire article about it.)

ZFS snapshots are the first line of defense. They’re instant, they’re effectively free at creation time (copy-on-write means a snapshot consumes zero additional space until data changes), and they’ve saved me from “oops” moments more times than I care to admit. But snapshots alone aren’t backups - they live on the same pool as your data. If the disk dies, the snapshots die with it.

Enter sanoid, a policy-driven snapshot management tool. On FreeBSD, it’s a pkg install sanoid away. You define retention policies per dataset, point sanoid at them, and it handles creation and pruning automatically.

Here’s a representative configuration from one of my servers:

    # /usr/local/etc/sanoid/sanoid.conf

    [template_production]
    autosnap = yes
    autoprune = yes
    # Retention Policy
    hourly = 0
    daily = 14
    weekly = 4
    monthly = 0
    yearly = 0

    [template_database]
    autosnap = yes
    autoprune = yes
    # Retention Policy
    hourly = 0
    daily = 3
    weekly = 0
    monthly = 0
    yearly = 0

    # Apply to datasets
    [zroot]
    use_template = production
    recursive = yes
    process_children_only = no

    [zroot/postgres_data]
    use_template = database
    recursive = yes
    process_children_only = no

    # Exclude datasets that don't need snapshots
    [zroot/tmp]
    autosnap = no

    [zroot/bastille/logs]
    autosnap = no

    [zroot/bastille/jails/nginx/cache]
    autosnap = no

The philosophy is straightforward: production data gets 14 daily snapshots and 4 weekly ones. Because the two tiers overlap, that’s about four weeks of rollback capability, with day-level granularity for the most recent two. Database datasets get a shorter window (3 days) because database snapshots without coordinated dumps are crash-consistent at best - useful, but not a replacement for proper application-level backups. Temporary directories and caches get nothing, because snapshotting /tmp is a waste of everyone’s time.

Sanoid runs via cron:

0 0 * * * /usr/local/bin/sanoid --cron

This pattern is identical across all ~10 of my FreeBSD servers. Same templates, same retention policies, same cron entry. The only thing that changes is which datasets exist and which exclusions apply. Consistency is a feature - when you’re managing multiple hosts, you don’t want to remember that this server has different retention because you were feeling creative at 2 AM six months ago.

Layer 2: Off-Site Replication with Syncoid

Local snapshots protect against accidental deletion and bad updates. They do absolutely nothing against disk failure, ransomware, or the catastrophic scenario where the entire server goes away. For that, you need off-site copies.

Syncoid (part of the sanoid suite) handles this. It uses zfs send/recv over SSH to replicate datasets to a remote ZFS pool. The first run sends a full copy; subsequent runs send only incremental differences. For a server with hundreds of gigabytes of data, the nightly incremental transfer is typically measured in megabytes.
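To make the full-versus-incremental distinction concrete, here is a minimal sketch of the kind of pipeline syncoid automates. It only prints the command instead of running it, the function name `replication_cmd` is made up for this example, and every dataset, snapshot, and host name is a placeholder. Real syncoid does more (snapshot matching, resume support, buffering), so treat this as an illustration of the idea rather than its actual implementation.

```shell
#!/bin/sh
# Sketch of the send/receive pipeline that syncoid automates.
# It only prints the command, so it is safe to run without ZFS.
# Every dataset, snapshot and host name passed in is a placeholder.
replication_cmd() {
    dataset=$1      # local dataset, e.g. zroot/data
    prev_snap=$2    # newest snapshot already on the target ("" on first run)
    new_snap=$3     # newest local snapshot
    target_host=$4  # SSH destination
    target_ds=$5    # dataset path on the remote pool

    if [ -z "$prev_snap" ]; then
        # First run: send the full stream
        send="zfs send ${dataset}@${new_snap}"
    else
        # Later runs: send only what changed between the two snapshots
        send="zfs send -i ${dataset}@${prev_snap} ${dataset}@${new_snap}"
    fi
    echo "${send} | ssh ${target_host} zfs recv ${target_ds}"
}

replication_cmd zroot/data "" daily-01 backup@example.net data1/apollo/zroot/data
replication_cmd zroot/data daily-01 daily-02 backup@example.net data1/apollo/zroot/data
```

The second call is the nightly case: only the blocks that changed between the two snapshots cross the wire, which is why the transfer stays small.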

I use rsync.net as my off-site target. They offer ZFS-capable storage specifically designed for this use case - you get a remote ZFS pool that accepts zfs recv over SSH. It’s not the cheapest option - but “cheap” and “I trust this with my only off-site copy of everything” are different categories.

Here’s the backup script that runs nightly:

    #!/bin/sh

    # Configuration
    SOURCE_POOL="zroot"
    TARGET="backup@ab1234c.rsync.net:data1/apollo/zroot"
    MONITOR_URL="https://uptime.example.com/api/push/xxxxxxxxxx?status=up&msg=OK&ping="

    echo "Starting off-site backup to rsync.net..."

    /usr/local/bin/syncoid --no-sync-snap --no-privilege-elevation --recursive \
        --sendoptions="w" \
        --exclude="zroot/tmp" \
        --exclude="zroot/bastille/logs" \
        --exclude="zroot/bastille/jails/nginx/cache" \
        --recvoptions="u o canmount=off o mountpoint=none" \
        "${SOURCE_POOL}" "${TARGET}"

    if [ $? -eq 0 ]; then
        echo "Backup successful. Triggering monitor..."
        /usr/local/bin/curl -fsS "${MONITOR_URL}"
    else
        echo "ERROR: Backup FAILED. Monitor NOT triggered."
        exit 1
    fi

A few things worth noting:

--sendoptions="w" is critical. The w flag means “raw send” - encrypted datasets are sent in their encrypted form. Without this, zfs send would transmit the decrypted data stream, and the datasets would arrive unencrypted at rest on the remote end. With raw send, your encrypted datasets remain encrypted at rest on the backup target. rsync.net never needs your encryption keys, and if someone compromises the backup storage, they get ciphertext.

--no-privilege-elevation means syncoid won’t try to use sudo on the remote end. This works because the remote user (backup in this example) has been granted only the specific ZFS permissions needed to receive snapshots - nothing more.

--recvoptions="u o canmount=off o mountpoint=none" tells the receiving end not to mount the datasets. This is a backup target, not a live system. You don’t want zfs recv trying to mount zroot on the remote machine.

The same excludes from the sanoid config reappear here, because there’s no point in replicating temp files and cache directories across the internet. Yes, the duplication is intentional - each layer should be independently correct, so if you change one without the other, neither silently breaks.
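Coming back to the raw-send point: one way to sanity-check it is to look at two ZFS properties on the backup target. The sketch below assumes you can read the `encryption` and `keystatus` properties there. The function name `raw_backup_ok` is hypothetical, and the dataset path in the comment is just the placeholder from the script above.

```shell
#!/bin/sh
# Sketch: decide whether a replicated dataset looks like a raw (still
# encrypted) copy, based on two ZFS property values read on the target.
# The function name is made up for this example.
raw_backup_ok() {
    encryption=$1   # "encryption" property, e.g. aes-256-gcm or off
    keystatus=$2    # "keystatus" property, e.g. available or unavailable
    [ "$encryption" != "off" ] && [ "$keystatus" = "unavailable" ]
}

# On the backup target the values could be read with something like:
#   zfs get -H -o value encryption,keystatus data1/apollo/zroot
if raw_backup_ok "aes-256-gcm" "unavailable"; then
    echo "encrypted at rest, key not loaded on target"
fi
```

An `encryption` value other than `off` combined with an unavailable key is what you would expect when the target holds ciphertext it cannot read.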

The cron schedule runs this at 4 AM, after sanoid has already created the nightly snapshots at midnight:

0 0 * * * /usr/local/bin/sanoid --cron

0 4 * * * /usr/local/bin/daily_backup.sh > /var/log/backup_last.log 2>&1

Retention of old snapshots on the rsync.net side is handled by a separate sanoid cron job running on the rsync.net host itself:

0 5 * * * /usr/local/bin/sanoid --verbose --prune-snapshots

This keeps the remote snapshot history tidy without me having to manage it from every source server.

The SSH Configuration

The backup user connects via a dedicated SSH key with limited permissions:

    # ~/.ssh/config (on the source server)
    Host rsync_backup
        HostName ab1234c.rsync.net
        User backup
        Port 22
        IdentityFile /root/.ssh/id_ed25519_backup
        Compression yes

The backup user on rsync.net has only the ZFS permissions required for receiving snapshots. If someone compromises a source server and steals the SSH key, the worst they can do is send snapshots to the backup pool and list existing ones. They can’t delete snapshots, destroy datasets, or access anything outside the designated backup path. This is the ransomware angle: even if an attacker has full root on a source server, they cannot destroy or encrypt the off-site backups.
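The article doesn't list the exact permissions, so the following is only a guess at what a minimal delegation could look like, using `zfs allow`. The permission set (`create,mount,receive` plus the two properties set via `--recvoptions`) is my assumption, and depending on your syncoid options you may need more. The function prints the command rather than running it.

```shell
#!/bin/sh
# Sketch: print the delegation command an admin might run on the backup
# target. Nothing is executed. The permission list is an assumption, not
# the article's actual setup.
delegation_cmd() {
    user=$1       # unprivileged receiving user
    dataset=$2    # parent dataset that will hold the backups
    # Enough to create and receive child datasets and to set the two
    # properties used in --recvoptions; "destroy" is deliberately absent.
    perms="create,mount,receive,canmount,mountpoint"
    echo "zfs allow -u ${user} ${perms} ${dataset}"
}

delegation_cmd backup data1/apollo
# Running "zfs allow data1/apollo" afterwards lists what was delegated.
```

The important part is what is missing: without `destroy`, a stolen key cannot be used to remove existing snapshots.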

Layer 3: Proxmox Backup Server for Virtual Machines

Not everything is FreeBSD. I run two Proxmox VE nodes that serve as lab environments for testing things quickly, plus a handful of utility VMs. These need a different backup strategy.

My approach: a Proxmox Backup Server (PBS) instance running on a minimal VM (1 vCPU, 1 GB RAM) at a cheap cloud provider, with Backblaze B2 as the storage backend.

The beauty of this setup is that PBS supports S3-compatible object storage as a datastore. The PBS VM itself is tiny and stores almost nothing locally - it’s essentially a proxy that handles deduplication, encryption, and the Proxmox backup protocol, then shoves the actual data into B2. You get Proxmox’s excellent incremental backup and deduplication without paying for a beefy VM with terabytes of local storage.

On the Proxmox VE side, this is completely transparent. You add the PBS as a storage target in the Proxmox web UI, configure a backup schedule, and the VMs back up nightly through the standard Proxmox backup mechanism. The PVE nodes don’t know or care that B2 is the actual storage behind it - they just see a PBS endpoint.
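If you prefer the command line over the web UI, PVE's `pvesm` tool can add the same storage target. The sketch below just prints a plausible invocation. The storage ID `offsite-pbs` is made up, the server and datastore are the placeholders used elsewhere in this article, and the password and TLS fingerprint options are deliberately left out for you to supply.

```shell
#!/bin/sh
# Sketch: print a CLI equivalent of adding PBS storage in the web UI.
# Storage ID, server, datastore and user are placeholders; the password
# and TLS fingerprint are left for the operator to supply.
pbs_storage_cmd() {
    storage_id=$1
    server=$2
    datastore=$3
    username=$4
    echo "pvesm add pbs ${storage_id} --server ${server} --datastore ${datastore} --username ${username}"
}

pbs_storage_cmd offsite-pbs backup-vps.example.com data backup@pbs
```

Check the printed command against your PVE version's documentation before running it; I have not verified it against every release.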

The economics work out nicely: a tiny VPS to run PBS costs a few euros per month, and B2 storage is $6/TB/month with free egress via the Cloudflare Bandwidth Alliance. For a lab environment with a few hundred gigabytes of VM data, the total cost is negligible.

Layer 4: Linux Servers

Linux servers are the messy middle child of this strategy. There’s no single approach, because the servers themselves vary too much.

Option A: Proxmox Backup Client

For Linux hosts where it’s available, proxmox-backup-client is the cleanest option. It supports file-level and image-level backups to PBS with deduplication and encryption. Install the client, point it at your PBS instance, schedule a cron job, done. These backups land in the same PBS + B2 infrastructure described above.
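As a rough illustration of "point it at your PBS instance", here is a sketch that assembles a `proxmox-backup-client backup` command from a list of directories. It prints the command instead of executing it. The helper name `pbs_backup_cmd`, the archive naming scheme, and the example paths are assumptions for this example, not taken from a real host.

```shell
#!/bin/sh
# Sketch: build a proxmox-backup-client invocation for a plain Linux host.
# It prints the command rather than running it. Archive names and paths
# are illustrative only.
pbs_backup_cmd() {
    repository=$1; shift
    keyfile=$1; shift
    specs=""
    for dir in "$@"; do
        # /etc becomes etc.pxar:/etc, and / becomes root.pxar:/
        name=$(basename "$dir")
        if [ "$dir" = "/" ]; then
            name="root"
        fi
        specs="${specs} ${name}.pxar:${dir}"
    done
    echo "proxmox-backup-client backup${specs} --repository ${repository} --keyfile ${keyfile}"
}

pbs_backup_cmd backup@pbs@backup-vps.example.com:data /root/backup.key /etc /home
```

In a cron job you would run the resulting command directly and follow it with the monitoring ping described later.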

Option B: Plain rsync

Not every Linux server needs the full PBS treatment. Some are simple workhorses where the important data is just files - configuration, application data, maybe some database exports. For these, rsync over SSH to rsync.net does the job.

The key is what happens before rsync runs. You can’t just rsync a live PostgreSQL data directory and expect a consistent backup. The same goes for MySQL, Redis AOF files, or anything else that keeps state in memory. So the backup script follows a pattern: dump first, then sync.

    #!/bin/bash
    set -euo pipefail

    BACKUP_DIR="/var/backups/pre-rsync"
    # No trailing slash here: the sub-paths below add their own
    RSYNC_TARGET="backup@ab1234c.rsync.net:data1/hostname"
    SSH_KEY="/root/.ssh/id_ed25519_backup"
    MONITOR_URL="https://uptime.example.com/api/push/xxxxxxxxxx?status=up&msg=OK&ping="

    mkdir -p "${BACKUP_DIR}"

    # Dump databases before syncing
    pg_dump -U postgres myapp > "${BACKUP_DIR}/myapp.sql"
    # Or for MySQL:
    # mysqldump --single-transaction --all-databases > "${BACKUP_DIR}/all-databases.sql"

    # Export container volumes (Podman or Docker)
    podman volume export myapp-data > "${BACKUP_DIR}/myapp-data.tar"

    # Sync everything to rsync.net
    rsync -avz --delete -e "ssh -i ${SSH_KEY}" \
        /etc/ "${RSYNC_TARGET}/etc/"
    rsync -avz --delete -e "ssh -i ${SSH_KEY}" \
        "${BACKUP_DIR}/" "${RSYNC_TARGET}/dumps/"
    rsync -avz --delete -e "ssh -i ${SSH_KEY}" \
        /opt/app-data/ "${RSYNC_TARGET}/app-data/"

    # If we got here, everything succeeded (set -e exits on any failure)
    curl -fsS "${MONITOR_URL}"

The database dump ensures you get a consistent, point-in-time export rather than a half-written data directory. podman volume export gives you a clean tarball of container volumes - useful when your application runs in containers and the actual state lives in named volumes rather than bind mounts.

For containers specifically, don’t forget that the compose file or quadlet unit and any environment files are just as important as the volume data. Losing your database is bad; losing your database and the configuration that tells you how it was deployed is worse.
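A minimal sketch of that idea: bundle the directories that hold your container definitions into a tarball next to the volume exports. The function name `archive_container_config` is hypothetical, the directories are whatever you pass in, and paths containing spaces are not handled.

```shell
#!/bin/sh
# Sketch: archive the files that describe how containers are deployed,
# so they travel with the volume exports. Directories are passed as
# arguments; missing ones are skipped. Paths with spaces are not handled.
archive_container_config() {
    out=$1; shift
    existing=""
    for dir in "$@"; do
        if [ -d "$dir" ]; then
            existing="${existing} ${dir}"
        fi
    done
    if [ -z "$existing" ]; then
        echo "no config directories found" >&2
        return 1
    fi
    # Intentionally unquoted: $existing is a space-separated list
    tar -cf "$out" $existing
}
```

A call might look like `archive_container_config /var/backups/pre-rsync/container-config.tar /etc/containers/systemd /opt/myapp`. Here `/etc/containers/systemd` is the usual location for rootful Quadlet units and `/opt/myapp` stands in for wherever your compose and environment files live.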

The rsync.net destination is just a directory on a ZFS filesystem, so you get the benefit of rsync.net’s own ZFS snapshots on the remote side. Even if --delete removes a file during sync, the previous version survives in a snapshot. It’s belt and suspenders, and it costs nothing extra.

Boring? Yes. Reliable? Also yes. Sometimes the best backup tool is the one that’s been working since 1996.

Option C: The Podman Trick (When Packages Don’t Exist)

And then there’s the creative part.

I have a RHEL 9 server running Forgejo (a self-hosted Git forge) in Podman containers. This is a production system with SELinux in enforcing mode. I wanted to back it up to my existing PBS infrastructure, but here’s the problem: proxmox-backup-client doesn’t ship as an RPM for RHEL. There’s no package in EPEL, no Copr repo, nothing in the Proxmox repositories for Red Hat-based systems.

The solution? Run proxmox-backup-client inside a Podman container, and pass the entire root filesystem in read-only:

    podman run --rm \
        --name pbs-backup-job \
        --security-opt label=type:spc_t \
        --hostname hermes \
        --entrypoint /usr/bin/proxmox-backup-client \
        --secret pbs_repo_password,type=env,target=PBS_PASSWORD \
        --secret pbs_key_passphrase,type=env,target=PBS_ENCRYPTION_PASSWORD \
        -v /:/mnt/root:ro \
        -v /root/backup.key:/etc/proxmox/backup.key:ro,Z \
        docker.io/ayufan/proxmox-backup-server:v3.4.1 \
        backup root.pxar:/mnt/root \
        --repository backup@pbs@backup-vps.example.com:data \
        --keyfile /etc/proxmox/backup.key

Let’s break down why this actually works well:

--security-opt label=type:spc_t is the key ingredient on a SELinux system. The spc_t (super privileged container) type allows the container to read host files that would normally be blocked by SELinux labels. Without this, the container would get a wall of “permission denied” errors trying to read the host filesystem, even though it’s mounted read-only. If you’re running SELinux in enforcing mode (and you should be), this flag is non-negotiable.

-v /:/mnt/root:ro mounts the entire host root filesystem into the container as read-only. The container can see everything but modify nothing. The backup client reads files from /mnt/root and creates a root.pxar archive (Proxmox’s archive format) that gets sent to PBS.

Podman secrets handle the credentials. The PBS repository password and encryption key passphrase are stored as Podman secrets and injected as environment variables - they never appear in the command line, process list, or container inspect output.

--hostname hermes sets the hostname inside the container to match the actual host. PBS uses the hostname to identify backup sources, so this ensures the backups show up correctly in the PBS UI.
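For completeness, the two secrets referenced in the command have to exist before the first run. One way to create them, sketched under the assumption that the value arrives on stdin, is `podman secret create NAME -`. The wrapper name `create_secret_from_stdin` and the `PODMAN` override are mine, added only so the function can be dry-run.

```shell
#!/bin/sh
# Sketch: store a credential as a Podman secret, reading the value from
# stdin so it never appears in the argument list.
# PODMAN can be overridden, which also makes the function easy to dry-run.
PODMAN=${PODMAN:-podman}

create_secret_from_stdin() {
    name=$1
    "$PODMAN" secret create "$name" -
}
```

Usage would be something like `create_secret_from_stdin pbs_repo_password < /root/pbs-password.txt`, where the file name is hypothetical and the file should be removed afterwards. Repeat for `pbs_key_passphrase`.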

The result: a full file-level backup of a RHEL server to Proxmox Backup Server, with encryption, deduplication, and incremental transfers - all without installing a single package on the host. It runs nightly via a systemd timer, and it has been rock-solid.

This is absolutely a workaround, not a vendor-supported path. But it’s contained, repeatable, and has proven stable in practice. Sometimes the ugliest solutions are the most reliable ones.

The Glue: Dead Man’s Switch Monitoring

The most dangerous failure mode for backups isn’t failure. It’s silent failure. The backup that stopped working three months ago and nobody noticed until the restore attempt. By the time you need it, you discover that your last good backup is from January and it’s now April.

This is why every backup script ends with a monitoring ping. I run an Uptime Kuma instance that monitors these pings via push-based checks (sometimes called dead man’s switches or heartbeat monitors). The logic is inverted from normal monitoring: instead of checking whether a service is up, it checks whether a backup has reported in.

Each backup job pings a unique URL on success:

curl -fsS "https://uptime.example.com/api/push/xxxxxxxxxx?status=up&msg=OK&ping="

If the backup fails, the curl never fires. If the backup script doesn’t run at all (cron misconfigured, server down, disk full, whatever), the curl never fires. Uptime Kuma is configured with a 28-hour timeout (24 hours for the daily schedule + 4 hours of tolerance). If 28 hours pass without a ping, I get an alert.

This catches every failure mode:

- Backup script crashes → no ping → alert

- Cron job stops running → no ping → alert

- Server goes offline → no ping → alert

- SSH key expires → backup fails → no ping → alert

- Remote target is full → backup fails → no ping → alert
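The timeout rule itself is simple enough to sketch. This is not Uptime Kuma's code, just the same logic written as a shell function, with a hypothetical name (`heartbeat_status`) and timestamps given as epoch seconds:

```shell
#!/bin/sh
# Sketch of the heartbeat rule: alert when the last successful ping is
# older than interval + grace. Not Uptime Kuma code, just the same logic.
heartbeat_status() {
    last_ping=$1          # epoch seconds of the last successful ping
    now=$2                # current epoch seconds
    interval_h=${3:-24}   # expected schedule in hours
    grace_h=${4:-4}       # tolerance in hours
    limit=$(( (interval_h + grace_h) * 3600 ))
    if [ $(( now - last_ping )) -gt "$limit" ]; then
        echo "ALERT"
    else
        echo "OK"
    fi
}

# 25 hours since the last ping: late, but still inside the 28-hour window
heartbeat_status 1700000000 1700090000
```

The grace period is what keeps a backup that runs an hour longer than usual from paging you.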

And yes - who monitors the monitor? Uptime Kuma itself is monitored by an external service (a simple HTTP check), so if it goes down, I know about that too.

The only thing push monitoring doesn’t catch is a backup that appears to succeed but produces corrupt or incomplete data. For that, you need test restores.

Quarterly Restore Tests

A mentor told me at one of my first jobs: “Untested backups are just expensive hopes and dreams.” That stuck with me, and I take it seriously.

Every quarter, I run full restore tests for every machine I consider production. These are scheduled in my calendar as recurring appointments, and I treat them with the same priority as any other production maintenance window. The process is straightforward: spin up a fresh VM, restore the system entirely from backups, and verify that it comes up in a functionally working state - services running, data intact, applications responding.

I record every restore session with asciinema and document the results in my DokuWiki, with the terminal recording attached. This gives me three things at once: confidence that the backups actually work, regular practice with the restore procedure so I’m not fumbling through it for the first time during an actual emergency, and a living library of documentation I can refer to when things go wrong at 3 AM. Under pressure is the worst possible time to be reading man pages and guessing at flags. Having a recording of yourself successfully restoring the exact same system last quarter is worth more than any runbook.

The quarterly cadence is a compromise. Monthly would be better, but the time is hard to justify. Yearly would be negligent. Quarterly is frequent enough that procedural drift doesn’t accumulate, and any backup issue gets caught within 90 days at most.
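One small, automatable piece of the "data intact" check is comparing checksums of restored files against a manifest taken from the source. The sketch below assumes GNU coreutils (`sha256sum`) and static data such as database dumps. Both function names are hypothetical, and this is not a description of the procedure used in these tests.

```shell
#!/bin/sh
# Sketch: compare a restored directory tree against a checksum manifest
# taken from the source. Assumes GNU coreutils (sha256sum); on FreeBSD
# the equivalent tool is sha256, with a different output format.
make_manifest() {
    # One "checksum  ./relative/path" line per file, in a stable order
    ( cd "$1" && find . -type f -exec sha256sum {} + | LC_ALL=C sort -k 2 )
}

verify_restore() {
    manifest=$1       # manifest created from the original data
    restored_dir=$2   # directory restored from backup
    make_manifest "$restored_dir" | diff -q "$manifest" - >/dev/null
}
```

You would run `make_manifest /var/backups/pre-rsync > manifest.txt` at backup time, then `verify_restore manifest.txt /mnt/restore/dumps` on the test VM (both paths are examples). A non-zero exit status means at least one file is missing, extra, or different.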

How Restores Actually Work

Since each layer uses different tools, the restore path differs per layer. A quick overview:

- ZFS local snapshots → zfs rollback to revert a dataset in place, or zfs clone to mount a snapshot as a separate dataset for selective file recovery without affecting the live system

- Syncoid / rsync.net → zfs send from the remote pool back to the local host via SSH. Same tool, reverse direction. For a full rebuild: install the OS, create the pool, zfs recv the datasets

- PBS (Proxmox Backup Server) → restore via the PBS web UI or proxmox-backup-client restore on the CLI. For VMs on Proxmox VE, the restore is a one-click operation from the PVE interface

- Plain rsync → copy the dumps and files back, re-import database dumps with psql or mysql, re-create container volumes from the tarballs. The most manual path, but also the most transparent

This is exactly what I walk through during the quarterly restore tests. Each layer’s restore procedure is documented and recorded, so I’m not figuring it out under pressure.
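As a quick reference, the restore paths above map to commands roughly like the following. This is a sketch that only prints them. Every dataset, snapshot, timestamp, and file name is a placeholder. The `-w` on the off-site path assumes the data was replicated as a raw encrypted stream, as in the backup script earlier.

```shell
#!/bin/sh
# Sketch: print the typical restore command(s) for each layer.
# Nothing is executed; dataset, snapshot, host and file names are
# placeholders, not real ones.
restore_hint() {
    case "$1" in
        rollback)
            echo "zfs rollback zroot/data@SNAPSHOT" ;;
        clone)
            echo "zfs clone zroot/data@SNAPSHOT zroot/restore_tmp" ;;
        offsite)
            echo "ssh backup@ab1234c.rsync.net zfs send -w data1/apollo/zroot/data@SNAPSHOT | zfs recv zroot/data" ;;
        pbs)
            echo "proxmox-backup-client restore host/hermes/TIMESTAMP root.pxar /mnt/restore --repository backup@pbs@backup-vps.example.com:data --keyfile /root/backup.key" ;;
        rsync)
            echo "psql -U postgres myapp < myapp.sql"
            echo "podman volume import myapp-data myapp-data.tar" ;;
        *)
            echo "usage: restore_hint rollback|clone|offsite|pbs|rsync" >&2
            return 1 ;;
    esac
}

restore_hint clone
```

Whether the remote account is allowed to run `zfs send` depends on how its permissions were delegated, so verify that during a restore test rather than during an outage.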

Putting It All Together

Here’s the full picture:

    ┌─────────────────────────────────────────────────────────────┐
    │                    FreeBSD Servers (~10)                    │
    │                                                             │
    │  sanoid (local snapshots) ──► syncoid ──► rsync.net (ZFS)   │
    │  daily/weekly                 nightly     5 TB pool         │
    │  retention                    encrypted   remote prune      │
    └─────────────────────────────────────────────────────────────┘

    ┌─────────────────────────────────────────────────────────────┐
    │                    Proxmox VE Nodes (2)                     │
    │                                                             │
    │  PVE backup schedule ──► PBS VM ──► Backblaze B2 (S3)       │
    │  nightly                 1C/1G      deduplicated            │
    │  incremental             proxy      encrypted               │
    └─────────────────────────────────────────────────────────────┘

    ┌─────────────────────────────────────────────────────────────┐
    │                        Linux Servers                        │
    │                                                             │
    │  proxmox-backup-client ──► PBS ──► Backblaze B2             │
    │  rsync over SSH        ──► rsync.net                        │
    │  podman + pbs-client   ──► PBS (RHEL/no native package)     │
    └─────────────────────────────────────────────────────────────┘

    ┌─────────────────────────────────────────────────────────────┐
    │                         Monitoring                          │
    │                                                             │
    │  Every backup job ──► Uptime Kuma (push / dead man switch)  │
    │  28h timeout      ──► alert on silence                      │
    └─────────────────────────────────────────────────────────────┘

What This Costs

One of the most common excuses for not having proper backups is cost. So let’s put actual numbers on this:

| Component | What It Does | Cost |

|---|---|---|

| Scaleway STARDUST1-S (IPv6, 1 GB RAM, 1 vCPU, 10 GB disk) | Runs Proxmox Backup Server | €0.10/month |

| Backblaze B2 object storage | Stores VM backups from PBS | $6/TB/month |

| rsync.net ZFS account (5 TB) | Off-site ZFS replication target for all FreeBSD servers | $60/month |

The Scaleway instance is almost comically cheap. It’s a STARDUST1-S - the smallest instance they offer - and it’s more than enough for PBS, because PBS is doing deduplication and proxying to S3, not storing data locally. Ten cents a month. That’s not a typo.

Backblaze B2 scales linearly with what you store. For a lab environment with a few hundred gigabytes of VM data after deduplication, you’re looking at low single digits per month. Egress is free if you route through Cloudflare’s Bandwidth Alliance, which matters a lot if you ever actually need to restore.

The rsync.net line item looks expensive at first glance - $60/month for 5 TB. But you’re not just buying storage. You get a fully managed FreeBSD virtual host with root access, sitting on top of a fault-tolerant raidz3 pool with ZFS snapshots. rsync.net handles all hardware maintenance, OS patches, and upgrades, and provides unlimited support. You can optionally add geo-redundancy. For a managed service with that feature set and reliability, it’s genuinely good value. Try pricing a comparable setup at any other provider where you get a dedicated ZFS pool that accepts zfs recv natively - the options are slim, and they aren’t cheaper.

All in, the entire backup infrastructure for about a dozen servers costs roughly $70/month. That’s less than one hour of emergency consulting when you lose data you can’t recover.
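If you want to plug in your own numbers, the arithmetic is a one-liner. The sketch below treats euros and dollars as the same unit, which is fine for a rough estimate only. The 0.5 TB figure for B2 is my assumption standing in for "a few hundred gigabytes".

```shell
#!/bin/sh
# Sketch: add up the monthly bill from the table above.
# Currencies are mixed (EUR for the VPS, USD for the rest) and treated as
# one unit here, which is fine for a rough estimate only.
monthly_total() {
    vps=$1        # VPS price per month
    b2_tb=$2      # terabytes stored in B2
    b2_rate=$3    # B2 price per TB per month
    rsync_net=$4  # rsync.net price per month
    LC_ALL=C awk -v v="$vps" -v t="$b2_tb" -v r="$b2_rate" -v s="$rsync_net" \
        'BEGIN { printf "%.2f\n", v + t * r + s }'
}

# 0.5 TB in B2 is an assumed figure, not a measured one
monthly_total 0.10 0.5 6 60
```

The rsync.net account dominates the total. The B2 and VPS lines barely move it until you are storing several terabytes of VM data.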

Is it perfect? No. There are things I’d improve: better alerting on partial failures, maybe a dashboard that shows backup age across all hosts at a glance, and more automation around the restore tests. But the fundamentals are solid: every server has local snapshots for fast rollback, every server has off-site copies for disaster recovery, every backup job is monitored, restores are tested quarterly, and the whole thing runs unattended.

The most important property of a backup strategy isn’t that it’s clever. It’s that it’s boring, consistent, and actually running. Fancy architectures that you never finish implementing protect exactly zero bytes of data. A simple cron job that runs every night and phones home when it’s done? That protects everything.
