- Tue 12 May 2026


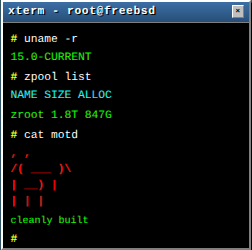

- FreeBSD

- #freebsd, #performance, #monitoring, #troubleshooting, #jails, #racct, #rctl, #sysctl, #network, #observability

“The server feels slow.” It is the most common ticket text in the world, and the least useful. Before you can fix anything you need to know whether the box is CPU bound, memory pressured, waiting on disk, saturating a NIC, or simply running a runaway process in one jail that is starving everyone else. On jail hosts, the second half of the problem is attribution: not just what is overloaded, but which jail is responsible.

FreeBSD ships with an unusually complete troubleshooting toolkit out of the box. This article is a practical tour: how to watch CPU, memory, disk, and network in real time, how to drill from a host-level symptom down to the process responsible, and how to use kern.racct and rctl to both measure and cap what individual jails consume. A short closing section points at jail_exporter for those who want long-term Prometheus data, but the focus here is the in-box troubleshooting toolkit.

This article intentionally sticks to tools present in the FreeBSD base system. There is nothing wrong with htop from ports, but every command below works on a freshly installed host with no extra packages. For the lower-level cases that this toolkit cannot reach, dtrace and pmcstat are the next step, and are the subject of a follow-up article.


CPU and Load

top

top is the workhorse. A handful of flags turn it from a curiosity into a real diagnostic tool:

# Per-CPU breakdown at the top of the screen

top -P

# Show threads, not just processes (useful for multi-threaded daemons)

top -H

# Add a JID column so you can see which jail each process belongs to

# (available on modern FreeBSD releases; older systems may not have it)

top -j

# Switch to I/O view instead of CPU

top -m io

# Include system (kernel) processes such as idle, intr, geom

top -S

You can combine flags: top -SHPj gives you per-CPU stats, threads, system processes, and a JID column at once, which is what I usually run on a busy jail host.

Most of the answer is usually in the header line:

CPU: 4.2% user, 0.0% nice, 12.7% system, 1.1% interrupt, 82.0% idle

High system plus high interrupt usually means kernel work: network packet processing, ZFS, or a chatty driver. High user is application code. Sustained interrupt time on a small box is often network or storage interrupt processing; vmstat -i shows which IRQ is busy.

uptime, w, and load average

uptime

11:42AM up 87 days, 3:14, 2 users, load averages: 0.84, 0.71, 0.55

Load averages on FreeBSD count runnable threads. Unlike Linux, threads in uninterruptible disk wait are not included, so heavy I/O on its own does not inflate the number. A 1-minute load far above the 15-minute load means something just started hammering the box; the reverse means whatever it was is winding down. Compare load to hw.ncpu:

sysctl hw.ncpu

hw.ncpu: 8

Sustained load over hw.ncpu is your first sign you should care.
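As a rough illustration, the comparison can be reduced to one number: load divided by core count. The helper name load_per_cpu is made up for this sketch; it only does the arithmetic, and the inputs are assumed to come from sysctl -n vm.loadavg and sysctl -n hw.ncpu.

```sh
# load_per_cpu LOAD NCPU (hypothetical helper, not a base-system tool)
# Prints LOAD / NCPU with two decimals. A value at or above 1.00 means there
# is, on average, at least one runnable thread per core.
load_per_cpu() {
    awk -v load="$1" -v ncpu="$2" 'BEGIN { printf "%.2f\n", load / ncpu }'
}

# Example on a FreeBSD host (vm.loadavg prints "{ 1min 5min 15min }"):
#   load_per_cpu "$(sysctl -n vm.loadavg | awk '{ print $2 }')" "$(sysctl -n hw.ncpu)"
```

Feeding it the 5- or 15-minute value instead of the 1-minute one gives a less jumpy signal if you want to alert on it.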

vmstat

vmstat 1 gives a one-second-resolution snapshot of CPU, memory pressure, and context switching:

vmstat 1

procs memory page disks faults cpu

r b w avm fre flt re pi po fr sr ad0 da0 in sy cs us sy id

1 0 0 18G 1.2G 421 0 0 0 612 8 0 2 4k 8k 12k 3 1 96

What to look at:

- r (runnable): sustained values above hw.ncpu mean CPU saturation

- b (blocked): processes sleeping in the kernel waiting on resources, often disk or filesystem I/O

- pi/po (page in/out from swap): non-zero values mean you are actually paging, which is almost always bad

- cs (context switches per second): unusually high values indicate lock contention or a misbehaving event loop
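If you would rather not eyeball the columns, the first and third checks can be sketched as a small awk filter over vmstat output. The function name vmstat_flags and the WARN wording are invented for this example; it assumes the column header row shown above (r, pi, po) and looks the columns up by name instead of hard-coding positions.

```sh
# vmstat_flags NCPU (hypothetical helper): reads `vmstat 1` output on stdin
# and prints a warning for samples that show CPU saturation or paging.
vmstat_flags() {
    awk -v ncpu="$1" '
        # Column header row: remember where each named column lives.
        $1 == "r" { for (i = 1; i <= NF; i++) col[$i] = i; next }
        # Skip the group header and anything seen before the column header.
        !(("r" in col) && ("pi" in col) && ("po" in col)) { next }
        $1 !~ /^[0-9]+$/ { next }
        {
            msg = ""
            if ($(col["r"]) + 0 > ncpu + 0) msg = msg " cpu-saturated"
            if ($(col["pi"]) + 0 > 0 || $(col["po"]) + 0 > 0) msg = msg " paging"
            if (msg != "") print "WARN:" msg
        }'
}

# Example on a FreeBSD host:
#   vmstat 1 | vmstat_flags "$(sysctl -n hw.ncpu)"
```

A single flagged sample means little; it is the sustained runs of warnings that match the "sustained values" wording above.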

Pause: systat as a Live Dashboard

Before diving into memory, disk, and network in isolation, it is worth introducing systat. It is a curses-based dashboard built into the base system, with a subview for almost every subsystem covered below:

systat -vmstat 1 # CPU, memory, paging, disk, top processes, all on one screen

systat -iostat 1

systat -ifstat 1

systat -tcp 1

systat -netstat 1

systat -swap 1

systat -pigs 1 # top CPU consumers, condensed

Each section below references the relevant subview. In day-to-day troubleshooting I keep a tmux window with systat -vmstat 1 on the left and gstat -p on the right; the combined view tells you in under five seconds whether the problem is CPU, memory, disk, or network.

Memory

FreeBSD’s memory accounting does not map cleanly to “free” in the Linux sense. The top memory header is the most digestible view:

Mem: 2.1G Active, 5.6G Inact, 1.8G Laundry, 14G Wired, 512M Buf, 1.0G Free

ARC: 8.4G Total, 2.1G MFU, 5.9G MRU, 12K Anon, 102M Header, 312M Other

- Active: in use right now

- Inact: not recently used but still cached. Available for reclaim

- Laundry: dirty pages waiting to be written out before reuse

- Wired: kernel memory that cannot be paged out. On ZFS systems this is often dominated by ARC allocations

- Free: truly free. Usually small on a healthy box, because unused memory is wasted memory

On ZFS systems the Wired number is dominated by the ARC. That is fine. Watch the ARC line for what it is actually caching. The ARC shrinks automatically under memory pressure; you only need to tune vfs.zfs.arc_max if you see a workload starving for RAM while ARC stays huge.

Swap

swapinfo -h

Device 1K-blocks Used Avail Capacity

/dev/gpt/swap0 8388604 142336 8246268 2%

# equivalent

pstat -s

Swap usage on its own is not automatically a problem; FreeBSD will happily leave cold pages on swap indefinitely. What you actually care about is steadily increasing swap usage together with active paging (pi/po in vmstat). When that pattern shows up, use top -o res (sort by resident size) or top -o size (virtual size) to find who is consuming memory.

vmstat -z: UMA zones

When the kernel itself is leaking or oversubscribed, vmstat -z shows per-zone allocations and failures:

vmstat -z | head -1; vmstat -z | sort -t, -k3 -n -r | head # largest zones by USED count

A non-zero FAIL column on a zone (mbuf clusters, vnode, file descriptors) tells you exactly what kernel resource is exhausted. This is the usual diagnostic for “the box stopped accepting connections but CPU is idle.”
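To list only the zones that have actually failed an allocation, a filter along these lines can help. The name uma_failures is made up, and the sketch assumes the usual vmstat -z layout of "ITEM: SIZE, LIMIT, USED, FREE, REQ, FAIL, ..." with comma-separated counters; check the header on your release before trusting the column position.

```sh
# uma_failures (hypothetical helper): reads `vmstat -z` output on stdin and
# prints every zone whose FAIL counter is non-zero.
# Assumes comma-separated counters with FAIL as the 6th comma field.
uma_failures() {
    awk -F, 'NF >= 6 && $6 + 0 > 0 {
        name = $1
        sub(/:[ \t]*[0-9]+[ \t]*$/, "", name)   # drop ": SIZE", keep the zone name
        printf "%s fail=%d\n", name, $6
    }'
}

# Example on a FreeBSD host:
#   vmstat -z | uma_failures
```

Empty output is the good case. The counters are cumulative since boot, so compare two runs a minute apart to see whether failures are still happening.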

Disk I/O

iostat

iostat -x -w 1

The -x form gives extended per-device stats: reads and writes per second, KB/s, per-operation latency in milliseconds, queue length, and percent busy (%b). Anything sustained above roughly 80% busy with high latency on a spinning disk, or above 90% on an SSD, is a real bottleneck.
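The threshold check is easy to script. This sketch assumes that %b is the last column of iostat -x output, which is how I have always seen it printed; busy_devices is a name invented for the example.

```sh
# busy_devices LIMIT (hypothetical helper): reads `iostat -x` output on stdin
# and prints "device busy%" for rows at or above LIMIT percent busy.
# Assumes the busy percentage is the last column of each device row.
busy_devices() {
    awk -v limit="$1" '
        NF >= 2 && $NF ~ /^[0-9]+(\.[0-9]+)?$/ && $NF + 0 >= limit + 0 {
            print $1, $NF
        }'
}

# Example on a FreeBSD host (the first report is the since-boot average):
#   iostat -x -w 1 | busy_devices 80
```

Header lines are skipped because their last field is not a number, so the filter can sit on the end of a continuously running iostat.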

gstat

gstat is the GEOM equivalent and is usually the friendlier tool because it shows every GEOM consumer (partitions, encrypted providers, mirrors) not just raw disks:

gstat -p # only physical providers

gstat -I 1s -f 'ada|nvd' # filter by name, 1-second interval

Watch the %busy column. On modern NVMe you will rarely see it climb; on SATA SSDs and HDDs it is a good first indicator of storage pressure.

ZFS

zpool iostat -v 1

zpool iostat -lq 1 # latency and queue depth per vdev

Per-vdev latency is what tells you whether a degraded mirror or a single slow drive is dragging the pool down. The queue length view (-q) is what catches “the pool is fine on average but tail latency is terrible.”

top -m io

top -m io

Switches top from CPU sort into I/O sort. You see per-process VCSW (voluntary ctx switches), IVCSW, READ, WRITE, FAULT, TOTAL. This is often the first place to look when you need to identify the process hammering the disks.

Network

Interface basics

ifconfig

ifconfig -L # also show IPv6 address lifetimes

netstat -i # cumulative packet and error counters per interface

netstat -i -h -w 1 # human readable, 1-second deltas

netstat -i -w 1 is usually the quickest check for “is my NIC actually moving packets right now and is it dropping any?” Non-zero error or drop counters are bad (add -d to include output drops) and worth investigating with the driver's sysctl tree, for example sysctl dev.igb.0 for igb0.

Live throughput

systat -ifstat 1 # per-interface bits/sec, peak, ASCII bar chart

This is the ifstat subview referenced earlier; systat -tcp 1, systat -netstat 1, and systat -ip 1 give live equivalents at the transport and IP layers.

Sockets and connections

sockstat -4 -l # IPv4 listening sockets

sockstat -4 -c # IPv4 connected sockets

sockstat -46 | head # both stacks

sockstat -P tcp # TCP only

sockstat is the FreeBSD answer to lsof -i and ss. It maps every socket back to the owning command, PID, and user, which is usually what you need when chasing “who opened this port.”

For raw connection counts and TCP state distribution:

netstat -an -p tcp | awk 'NR>2 {print $6}' | sort | uniq -c | sort -rn

Lots of TIME_WAIT is normal on a busy server. Lots of CLOSE_WAIT usually means the application is not closing accepted sockets and you have a leak.

Protocol stats

netstat -s -p tcp # TCP protocol counters since boot

netstat -s -p ip # IP layer

netstat -m # mbuf usage, the single most important kernel network counter

netstat -m shows mbuf cluster allocation and, critically, denied/delayed allocations. If those are climbing you are running out of network buffers, which manifests as mysterious connection failures while CPU and link both look idle. This is a reliable signal that gets missed by people who only watch the link layer.
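A small filter makes the denied/delayed lines stand out only when they matter. It assumes the "a/b/c requests for ... denied|delayed (...)" line format that netstat -m prints; the function name mbuf_trouble is hypothetical.

```sh
# mbuf_trouble (hypothetical helper): reads `netstat -m` output on stdin and
# prints only the denied/delayed lines where at least one counter is non-zero.
mbuf_trouble() {
    awk '/ (denied|delayed) / {
        n = split($1, c, "/")              # first field looks like 0/17/0
        for (i = 1; i <= n; i++)
            if (c[i] + 0 > 0) { print; next }
    }'
}

# Example on a FreeBSD host:
#   netstat -m | mbuf_trouble
```

No output means no allocation has been denied or delayed since boot. As with the UMA counters, run it twice to see whether the numbers are still climbing.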

Routes

netstat -rn # routing table, numeric

route -n get default # which gateway will this host actually use

pf: firewall state, rules, and counters

If pf is enabled, a surprising amount of “the network is broken” tickets resolve at the firewall. The base pfctl tool exposes everything you need:

pfctl -si # global info: status, counters, state table size and limit

pfctl -sr -v # active ruleset with per-rule packet and byte counters

pfctl -vvsr # same, plus rule numbers and evaluation/eval-skip counts

pfctl -sn # NAT rules

pfctl -ss # active states (the connection tracking table)

pfctl -ss | wc -l # quick count of states, compare to limit in 'pfctl -si'

pfctl -sm # memory limits (states, src-nodes, frags, tables)

pfctl -s timeouts # current timeout values

pfctl -s Tables # list configured tables

pfctl -t <name> -T show # contents of a single table

The combination of pfctl -vvsr plus pfctl -si answers most “is the firewall counting this traffic at all, and which rule matched?” questions. If a rule’s Evaluations counter is climbing but its Packets counter is not, traffic is reaching pf but matching a different rule.
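That Evaluations-versus-Packets comparison can be roughly automated. The sketch below assumes the pfctl -vvsr layout of a rule line starting with @N followed by a bracketed "[ Evaluations: ... Packets: ... ]" line, and it looks at absolute counters, not at whether they are climbing; pf_unmatched_rules is an invented name.

```sh
# pf_unmatched_rules (hypothetical helper): reads `pfctl -vvsr` output on
# stdin and prints rules that were evaluated but never matched a packet.
pf_unmatched_rules() {
    awk '
        /^@[0-9]+/ { rule = $0; next }      # remember the most recent rule line
        /Evaluations:/ {
            evals = 0; pkts = 0
            for (i = 1; i < NF; i++) {
                if ($i == "Evaluations:") evals = $(i + 1)
                if ($i == "Packets:")     pkts  = $(i + 1)
            }
            if (evals + 0 > 0 && pkts + 0 == 0) print rule
        }'
}

# Example on a FreeBSD host (needs root):
#   pfctl -vvsr | pf_unmatched_rules
```

A rule showing up here is not necessarily wrong; it may simply be a rarely hit block rule. It is a starting list, not a verdict.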

For validating a config without loading it:

pfctl -nf /etc/pf.conf # parse and check, do not apply

And for surgical interventions:

pfctl -k 198.51.100.7 # kill all states for a host

pfctl -k 198.51.100.7 -k 10.0.0.1 # kill states between two endpoints

pfctl -F state (flush the entire state table) exists but is almost never the right answer on a live host. Use the targeted -k form.

The companion to all of this is pflog, a pseudo-interface that captures packets matched by log-tagged rules:

tcpdump -nettti pflog0 # live view of what pf is logging

tcpdump -nr /var/log/pflog 'host 198.51.100.7' # search the persisted log

Add log to any block or pass rule you want visibility into. pflog itself is lightweight, and rules without the log keyword incur no logging overhead; logging is usually cheap at moderate traffic levels, but high-rate logging rules can become expensive on busy links.

Live network views

systat -tcp 1 # TCP-level activity (states, retransmits)

systat -netstat 1 # active connections, like 'netstat' refreshed live

systat -ip 1 # IP layer counters

Packet capture

tcpdump -ni igb0 -c 100 'tcp port 443'

Quick, surgical packet capture. The -n skips name resolution (always use it on a busy host) and -c limits the capture so you do not flood the terminal.

Per-Process Inspection

When the high level views point to a single process, drill down with:

# Verbose, wide, with command line

ps -auxww

# Process hierarchy (descendancy order, indented as a tree)

ps -axjd

# Structured output for scripting

ps -auxww --libxo json | jq ...

# Threads for a given process

procstat -t <pid>

# Open file descriptors for a PID

procstat -f <pid>

fstat -p <pid>

# Kernel-side stack (what is the process blocked on?)

procstat -k <pid>

# Resource limits in effect

procstat -l <pid>

# Memory map

procstat -v <pid>

procstat -k is particularly useful: if a process is hung, the kernel stack tells you whether it is stuck in nanosleep, kqueue_wait, g_waitfor_event, or somewhere genuinely interesting like a ZFS or VFS call. It requires root privileges on most systems.

Finding Bottlenecks: A Workflow

A repeatable triage flow for “what is using my server”:

- Load and CPU. uptime, then top -SHPj. Compare 1-minute load to hw.ncpu. Look at the per-CPU line for hotspots and at system vs user vs interrupt.

- Memory. Look at the top memory header. Is Free near zero and Inact/Laundry near zero and swap rising? That is real pressure. Otherwise, “low free memory” is not a problem on FreeBSD.

- Disk. gstat -p or iostat -x 1. High %busy with high ms/op is the smoking gun.

- Network. netstat -i -w 1 for throughput and errors, netstat -m for buffer exhaustion, sockstat -4 -c | wc -l for connection counts.

- Drill down. Once you know the subsystem, switch top into the right mode (-m io) or sort key (-o res, -o size), or use procstat -k <pid> on suspects.

- Jail attribution. On a multi-tenant jail host, top -j plus rctl -hu jail:<name> (next section) tells you which jail is responsible.

Per-Jail Accounting and Limits with kern.racct

Everything above tells you what the host is doing. On a jail host you often need the same view per jail, both for capacity planning and for catching the noisy neighbour. That is what kern.racct (accounting) and rctl (control) are for.

Enabling racct

RACCT and RCTL are compiled into the GENERIC kernel but disabled at boot, because their per-thread bookkeeping has a small cost. Enable them in /boot/loader.conf:

kern.racct.enable=1

This is a boot-time tunable. A reboot is required; you cannot toggle it at runtime. After reboot:

sysctl kern.racct.enable

kern.racct.enable: 1

Once enabled, accounting applies to every jail automatically. No per-jail opt-in is needed.

Reading per-jail usage

rctl -hu jail:web1

cputime=2h

datasize=0

stacksize=0

coredumpsize=0

memoryuse=412M

memorylocked=0

maxproc=37

openfiles=412

vmemoryuse=1G

pseudoterminals=0

swapuse=0

nthr=58

wallclock=3d

pcpu=12

readbps=0

writebps=0

readiops=0

writeiops=0

-h is human readable; drop it to get raw numbers (bytes, seconds, plain counts) if you want to script against the output. rctl is the stable user-facing interface to racct data; internally it uses the rctl_get_racct(2) syscall. There is no equivalent sysctl interface despite occasional rumours to the contrary.
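For scripting, the raw key=value output is trivial to pick apart. A minimal sketch, with racct_get as a made-up helper name:

```sh
# racct_get KEY (hypothetical helper): reads `rctl -u ...` key=value lines on
# stdin and prints the value for KEY. Exits non-zero if KEY is not present.
racct_get() {
    awk -F= -v key="$1" '
        $1 == key { print $2; found = 1 }
        END { exit !found }'
}

# Example on a FreeBSD host with racct enabled: resident memory per jail.
#   for j in $(jls name); do
#       printf '%s %s\n' "$j" "$(rctl -u "jail:$j" | racct_get memoryuse)"
#   done
```

Sorting that loop's output numerically on the second column gives a quick "which jail is biggest" list without any extra tooling.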

Setting limits

rctl rules use the form:

subject:identifier:resource:action=amount

Subjects are process, user, loginclass, or jail. For jails, the identifier is the jail name. Actions you will actually use:

- deny blocks further allocation when the limit is hit

- log writes to the system log but does not enforce

- devctl sends a devd(8) notification you can hook with custom scripts

Examples:

# Cap one jail at 1 GB resident memory

rctl -a jail:web1:memoryuse:deny=1G

# Cap at 50% of a single CPU (pcpu is percent of one core, not total host CPU,

# so on a 32-core box this is still only half of one core)

rctl -a jail:web1:pcpu:deny=50

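# Cap write throughput at 20 MB/s, 2000 iops (I/O rate resources are limited

# with the throttle action; deny is not supported for them)

rctl -a jail:web1:writebps:throttle=20M

rctl -a jail:web1:writeiops:throttle=2000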

# Cap process count

rctl -a jail:web1:maxproc:deny=200

# Just log when a jail sustains > 100 MB/s read, do not enforce

rctl -a jail:web1:readbps:log=100M

List active rules:

rctl -l jail:web1

Persisting rules

Rules added with rctl -a live until the next reboot. To persist them, put them in /etc/rctl.conf (one rule per line, same syntax) and enable the service:

# /etc/rctl.conf

jail:web1:memoryuse:deny=1G

jail:web1:maxproc:deny=200

jail:web1:vmemoryuse:deny=2G

jail:db1:memoryuse:deny=4G

jail:db1:writeiops:log=5000

sysrc rctl_enable="YES"

service rctl start

service rctl start is a one-shot: it parses rctl.conf and pushes the rules into the kernel. After editing rctl.conf, re-running service rctl start (or restarting the service) re-applies rules from the file.
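Because a typo in rctl.conf only surfaces when the service runs, a loose syntax check before reloading can save a round trip. The pattern below is my own approximation of the subject:identifier:resource:action=amount form (with an optional /per suffix), not something taken from rctl itself, and rctl_conf_lint is a hypothetical name; rctl remains the authority on what is valid.

```sh
# rctl_conf_lint (hypothetical helper): reads rctl.conf-style lines on stdin,
# prints lines that do not look like subject:id:resource:action=amount[/per],
# and exits non-zero if any were found. It does not check resource or action
# names against what the kernel supports.
rctl_conf_lint() {
    awk '
        /^[ \t]*#/ || /^[ \t]*$/ { next }   # skip comments and blank lines
        $0 !~ /^(process|user|loginclass|jail):[^:]*:[a-z]+:[a-z0-9]+=[0-9]+[kKmMgGtTpPeE]?(\/(process|user|loginclass|jail))?$/ {
            printf "line %d: %s\n", NR, $0
            bad = 1
        }
        END { exit bad }'
}

# Example:
#   rctl_conf_lint < /etc/rctl.conf && service rctl restart
```

It catches the common slips (a missing field, = in the wrong place, a stray space) and nothing more.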

A practical caution about deny

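deny is a hard wall, but how it is enforced depends on the resource. When a jail hits a vmemoryuse cap, allocations inside it start returning ENOMEM. Well behaved C programs handle that. Many large runtimes (the JVM, Node.js, some database engines) do not, and will abort or crash instead. A memoryuse (resident set) cap is enforced differently: the kernel pushes the jail's pages out toward swap, so an undersized cap shows up as heavy paging rather than failed allocations. Test in staging before deploying memory caps on production jails. For first deployments I usually start with log actions and watch what would have been denied for a week before switching to deny.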

Seeing jails in top

top -j

adds a JID column. Combine with -m io to see which jail is doing the disk work. For most “which jail is eating the server?” questions this is enough; no extra tooling required.

A Note on jail_exporter and Prometheus

The tools above give you live, in-the-moment views. If you want history, alerting, and dashboards you will eventually want to feed racct data into Prometheus. The community option is jail_exporter, a small Rust exporter that reads racct and exposes per-jail metrics on an HTTP endpoint (port 9452 by default).

It is available as a port and package:

pkg install jail_exporter

sysrc jail_exporter_enable="YES"

service jail_exporter start

curl -s http://localhost:9452/metrics | grep ^jail_

I will not enumerate the metric set here, because any list I write would go stale; the upstream README is the authoritative source. Read it, scrape the exporter from Prometheus, and graph whichever jail_* series matter for your workload. Everything in the earlier triage workflow (CPU, memory, I/O, process counts) is exposed.

This is a sidecar to the work above, not a replacement. When something is on fire right now, top -SHPj, gstat, netstat -m, sockstat, and rctl -hu will get you to the answer faster than any dashboard.

Wrap-up

FreeBSD’s monitoring story is not glamorous, but it is unusually complete out of the box. Between top, vmstat, gstat, systat, netstat, sockstat, procstat, and rctl you can usually answer “what is using my server” in a couple of minutes without installing anything. Add kern.racct and a few rctl.conf rules and you also get accurate per-jail attribution and the ability to cap noisy neighbours before they take the host down.

The next article in this series will look at dtrace and pmcstat for the cases where this toolkit is not enough: low level latency analysis, lock contention, and hardware performance counters.
