- Fri 13 March 2026

- FreeBSD

- #freebsd, #zfs, #filesystem, #storage, #encryption, #backup, #snapshots, #sanoid

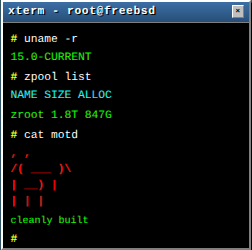

ZFS was developed at Sun Microsystems, announced in 2004, open-sourced in 2005 as part of OpenSolaris, and has since become the default filesystem on FreeBSD. Not just the default in the installer - the default in production, the default in the Handbook, the default in the minds of people who have lost data exactly once and decided never again. (It’s also available on Linux, where it works beautifully - just don’t ask me how I know you can run RHEL on a ZFS root pool. That was a crime, not a tutorial.)

This is the second article in the FreeBSD Foundationals series. The first one covered Jails. We’re covering ZFS now because it’s the foundation everything else sits on: your jails, your databases, your mail spools, your backups. Understanding ZFS isn’t optional if you’re running FreeBSD seriously.

Why ZFS Exists

Traditional filesystems trust the hardware. They write data to disk, read it back later, and assume what comes back is what was written. This assumption is wrong more often than most people realize. Disks develop bad sectors. Controllers corrupt data in transit. RAM bit-flips go undetected. RAID controllers with battery-backed caches fail in ways that silently eat data. The industry term for this is silent data corruption, and every filesystem that doesn’t checksum its data is vulnerable to it.

ZFS was designed from the ground up with one overriding principle: your data should never be silently wrong. Every block of data and metadata is checksummed. Every read is verified against that checksum. If the checksum doesn’t match, ZFS knows the data is corrupt - and if redundancy exists (mirror or raidz), it automatically repairs the damage from a good copy without anyone having to notice or intervene.

But ZFS is not just a filesystem. It’s a filesystem, volume manager, and software RAID implementation rolled into one. Traditional Unix storage stacks look like this:

Traditional stack:       ZFS stack:
┌────────────────┐       ┌────────────────┐
│ Filesystem     │       │                │
│ (ext4/ufs)     │       │ ZFS            │
├────────────────┤       │                │
│ Volume Manager │       │ (filesystem +  │
│ (LVM/gpart)    │       │ volume mgmt +  │
├────────────────┤       │ RAID + ...     │
│ Software RAID  │       │                │
│ (gmirror)      │       │                │
├────────────────┤       ├────────────────┤
│ Disks          │       │ Disks          │
└────────────────┘       └────────────────┘

With a traditional stack, you need to coordinate between three or four separate layers, each with its own tools, its own failure modes, and its own ideas about how data is organized. ZFS eliminates this entire class of problems by managing everything from raw disks to individual files in a single, coherent system.

Core Concepts: Pools and Datasets

Pools: The Storage Foundation

A ZFS pool (zpool) is the fundamental unit of storage. You give ZFS one or more disks (or partitions, or files - it doesn’t care), and it creates a pool. All storage in ZFS comes from a pool. There are no partitions to resize, no logical volumes to extend, no separate “allocate space, then format” steps.

# Create a simple pool from a single disk

zpool create tank da0

# Create a mirror (two-way redundancy)

zpool create tank mirror da0 da1

# Create a raidz1 pool (single-parity, like RAID-5)

zpool create tank raidz1 da0 da1 da2

# Create a raidz2 pool (double-parity, like RAID-6)

zpool create tank raidz2 da0 da1 da2 da3

# Check pool status

zpool status tank

Pool topology matters and is hard to change after creation. You can add more vdevs to a pool or attach a disk to turn a single disk into a mirror, but you cannot convert a raidz1 vdev into raidz2, so choose your redundancy level when you create the pool. A two-way mirror tolerates one disk failure per mirror vdev. Raidz1 tolerates one disk failure per vdev. Raidz2 tolerates two. Choose raidz2 for anything that matters - modern drives are massive, and resilvering (rebuilding) a 16TB disk can take days of heavy I/O, which is exactly when a second aging drive is most likely to fail. The cost of one extra parity disk is nothing compared to a lost pool.
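
To make the trade-off concrete, here is a rough sketch of the capacity arithmetic for a single vdev. The function name and the simplified formula are my own illustration, not a ZFS tool: it ignores padding, metadata, and the free-space headroom you should keep, so real usable space will be somewhat lower.

```sh
# vdev_summary LAYOUT NDISKS DISK_TB
# LAYOUT is one of: mirror, raidz1, raidz2.
# Prints approximate usable capacity and how many disk failures the vdev survives.
vdev_summary() {
    layout=$1 ndisks=$2 disk_tb=$3
    case $layout in
        mirror) usable=$disk_tb;                      survives=$((ndisks - 1)) ;;
        raidz1) usable=$(( (ndisks - 1) * disk_tb )); survives=1 ;;
        raidz2) usable=$(( (ndisks - 2) * disk_tb )); survives=2 ;;
        *) echo "unknown layout: $layout" >&2; return 1 ;;
    esac
    echo "$layout x$ndisks: ~${usable}TB usable, survives $survives disk failure(s)"
}

vdev_summary mirror 2 16
vdev_summary raidz1 3 16
vdev_summary raidz2 4 16
```

With 16TB disks, the four-disk raidz2 ends up with the same approximate usable space as the three-disk raidz1. The fourth disk buys you nothing but the ability to lose a second drive during a resilver, which is the point.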

You can check the health of a pool at any time:

zpool status tank

pool: tank

state: ONLINE

scan: scrub repaired 0B in 02:31:44 with 0 errors on Sun Mar 9 03:01:44 2026

config:

NAME STATE READ WRITE CKSUM

tank ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

da0 ONLINE 0 0 0

da1 ONLINE 0 0 0

errors: No known data errors

The CKSUM column is the one that matters most. A non-zero value there means ZFS detected a checksum mismatch - silent corruption that most other filesystems would have delivered to your application without a word.
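
If you'd rather not eyeball that table, a small filter can pull out just the rows with non-zero counters. This is a minimal sketch that assumes the column layout shown above; zpool_errors is a hypothetical helper name, and the status text in the demo is made up.

```sh
# zpool_errors: read `zpool status` output on stdin and print every
# pool, vdev, or disk whose READ, WRITE, or CKSUM counter is not zero.
zpool_errors() {
    awk '
        $1 == "NAME" && $5 == "CKSUM" { in_config = 1; next }
        in_config && NF == 0          { in_config = 0 }
        in_config && NF >= 5 && ($3 != "0" || $4 != "0" || $5 != "0") {
            printf "%s read=%s write=%s cksum=%s\n", $1, $3, $4, $5
        }
    '
}

# Real use (needs a pool):  zpool status tank | zpool_errors
# Demo on made-up status text with three checksum errors on da1:
zpool_errors <<'EOF'
config:

    NAME        STATE     READ WRITE CKSUM
    tank        ONLINE       0     0     0
      mirror-0  ONLINE       0     0     0
        da0     ONLINE       0     0     0
        da1     ONLINE       0     0     3

errors: No known data errors
EOF
```

The demo prints a single line for da1. Empty output means every counter is zero, which makes the function easy to wire into a monitoring check.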

Scrubs: Proactive Integrity Verification

ZFS doesn’t just verify data when you read it. You can (and should) run periodic scrubs that read every block in the pool and verify its checksum:

# Start a scrub

zpool scrub tank

# Check scrub progress

zpool status tank

On a redundant pool (mirror or raidz), a scrub will automatically repair any corruption it finds using the redundant copy. On a non-redundant pool, a scrub will detect the corruption and report it, but can’t repair it - there’s no good copy to repair from.

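Schedule scrubs regularly. Weekly is common for servers. ZFS gives scrub I/O a lower priority than application I/O, so a scrub slows itself down rather than starving your workload - don’t be afraid to schedule one during the work week if your maintenance windows are limited. FreeBSD ships a periodic(8) script for this, but it is disabled by default (see /etc/defaults/periodic.conf). Enable it in /etc/periodic.conf, and lower the threshold if you want scrubs more often than the default of every 35 days:

# In /etc/periodic.conf (defaults live in /etc/defaults/periodic.conf)

daily_scrub_zfs_enable="YES"

# Minimum days between scrubs (default is 35)

daily_scrub_zfs_default_threshold="7"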

Datasets: Flexible Storage Without Partitions

A ZFS dataset is roughly analogous to a traditional filesystem, but without fixed size. Datasets share the pool’s storage dynamically. You don’t pre-allocate space to a dataset - it grows as needed, up to whatever the pool (or an optional quota) allows.

# Create datasets

zfs create tank/home

zfs create tank/home/chris

zfs create tank/jails

zfs create tank/jails/mailserver

zfs create tank/postgresql

Datasets are hierarchical. Properties set on a parent are inherited by children unless explicitly overridden. This inheritance model is one of ZFS’s most practical features:

# Set compression on the parent - all children inherit it

zfs set compression=zstd tank

# Override compression for a specific child dataset

zfs set compression=lz4 tank/postgresql

# Check inherited vs. local properties

zfs get compression tank/home

NAME PROPERTY VALUE SOURCE

tank/home compression zstd inherited from tank

Key Dataset Properties

These are the properties you’ll actually care about in practice:

compression

ZFS supports transparent compression at the dataset level. Every block is compressed before being written to disk and decompressed on read. This sounds like it should be slower, but on modern CPUs the compression is so fast that the reduced I/O usually makes things faster overall.

# A good general-purpose choice - excellent compression ratio, very fast

zfs set compression=zstd tank

# Alternative - slightly faster, slightly worse ratio

zfs set compression=lz4 tank/fast-storage

# Check compression effectiveness

zfs get compressratio tank

There’s almost no reason to leave compression off. Even on data that doesn’t compress well (encrypted files, already-compressed media), ZFS detects incompressible blocks and stores them uncompressed with negligible overhead.
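
To see what compression is buying you in absolute terms, you can compare logical and physical space yourself. The sketch below is an approximation of what compressratio reports, not a reimplementation of it; compress_savings is a hypothetical helper, and the commented zfs get line assumes the two values come back in the order requested.

```sh
# compress_savings LOGICAL_BYTES PHYSICAL_BYTES
# Prints the compression ratio and the share of space saved.
compress_savings() {
    awk -v l="$1" -v p="$2" 'BEGIN {
        printf "ratio=%.2fx saved=%.1f%%\n", l / p, (1 - p / l) * 100
    }'
}

# Real use (needs a pool), with exact byte values from -p:
#   compress_savings $(zfs get -Hp -o value logicalused,used tank)
# Demo: 1500 units of logical data stored in 1000 units on disk.
compress_savings 1500 1000
```

A ratio of 1.50x means a third of your disk space came back for free.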

recordsize

The recordsize property controls the maximum block size for a dataset. The default is 128KB, which works well for general-purpose workloads. But specific workloads benefit from tuning this:

# PostgreSQL: match the database page size (8KB)

zfs set recordsize=8k tank/postgresql

# MySQL/InnoDB: match the InnoDB page size (16KB)

zfs set recordsize=16k tank/mysql

# Large sequential files (media, backups, VM images): use 1MB

zfs set recordsize=1M tank/backups

The principle: match the recordsize to the I/O pattern of the application. Databases do many small, random reads and writes at their page size - a 128KB recordsize means ZFS reads 128KB to satisfy an 8KB database page read. Setting recordsize=8k for PostgreSQL can dramatically improve random read performance.

For sequential workloads (backups, media storage, large file copies), a larger recordsize means fewer metadata operations and better compression ratios.

Important: recordsize only affects newly written data. Changing it on an existing dataset does not rewrite existing blocks. For databases, set the recordsize before importing data.
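
The read amplification behind that advice is simple division. Here is a back-of-the-envelope sketch (read_amplification is a made-up name), assuming the worst case of an uncached random read where the whole record has to come off disk:

```sh
# read_amplification RECORDSIZE_KB PAGE_KB
# How many times more data ZFS reads than the application asked for.
read_amplification() {
    echo "$(( $1 / $2 ))x"
}

read_amplification 128 8    # default recordsize, 8KB PostgreSQL page
read_amplification 8 8      # recordsize matched to the page size
```

The ARC will absorb much of this for hot data, so treat the number as an upper bound rather than a measurement.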

atime

Every time a file is read, Unix traditionally updates its “access time” (atime) metadata. On a busy system, this means every read operation also triggers a write operation. ZFS inherits this behavior by default, but you should almost always disable it:

# Disable access time updates entirely

zfs set atime=off tank

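# Or keep atime on but use relative atime (relatime only takes effect when atime=on; atime is then updated only if it is older than mtime/ctime or more than 24 hours old)

zfs set atime=on relatime=on tank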

Disabling atime eliminates a write I/O for every read I/O. For a mail server scanning thousands of files per second, or a web server serving static assets, this is a significant performance improvement with essentially no downside. Almost nothing on a modern system depends on accurate atime.

quota and reservation

Quotas limit how much space a dataset can consume. Reservations guarantee a minimum amount of space. These are useful for preventing runaway datasets from starving others:

# Limit a dataset to 50GB

zfs set quota=50G tank/home/chris

# Guarantee 10GB is always available for this dataset

zfs set reservation=10G tank/postgresql

refquota and refreservation are the variants that exclude snapshots from the accounting. Usually refquota is what you want for user quotas - you don’t want someone’s disk usage to silently increase because of snapshots they didn’t take.

Self-Healing: How It Actually Works

ZFS checksums every block of data and metadata, using fletcher4 by default (SHA-256 and other algorithms are available through the checksum property). The checksum is stored separately from the data it protects - in the parent block’s pointer. This means a damaged block can’t take its own checksum down with it, and it lets ZFS catch misdirected or dropped writes, which a checksum stored right next to the data would happily validate.

The verification flow:

Write path:
Application data → compress → checksum → write to disk
                                  ↓
                          store checksum in
                          parent metadata block

Read path:
Read block from disk → verify checksum → decompress → deliver to application
                              ↓                 ↓
                       If checksum fails:       On redundant pool:
                       "This block is bad"  →   read from mirror/parity
                                            →   repair the bad copy
                                            →   deliver good data
                                            →   increment CKSUM error counter

This happens transparently. Your application never sees the corrupt data. It gets the correct data, repaired silently, and the error is logged. You’ll see it in zpool status output as a non-zero CKSUM count, which tells you a disk is developing problems before it fails catastrophically.

On a non-redundant pool, ZFS can detect corruption but can’t repair it. The read will fail with an I/O error, which is still better than silently returning corrupted data. At least you know the data is bad.
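
The read path above can be mimicked in a few lines of shell. This is a toy model and nothing like ZFS's actual implementation: two files stand in for the two sides of a mirror, cksum stands in for the real checksum algorithm, and the function names are invented for the example.

```sh
# Toy model only: the checksum is kept in a shell variable, apart from
# both copies of the data, the way ZFS keeps it in the parent block.
block_sum() {
    cksum < "$1" | awk '{ print $1 }'
}

# read_block PRIMARY MIRROR EXPECTED_SUM
# Prints the block; if PRIMARY fails verification, repairs it from MIRROR.
read_block() {
    primary=$1 mirror=$2 expected=$3
    if [ "$(block_sum "$primary")" = "$expected" ]; then
        cat "$primary"
    elif [ "$(block_sum "$mirror")" = "$expected" ]; then
        cp "$mirror" "$primary"                  # heal the bad copy
        echo "checksum mismatch on $primary: repaired from $mirror" >&2
        cat "$primary"
    else
        echo "checksum mismatch: no good copy left" >&2
        return 1
    fi
}

dir=$(mktemp -d)
printf 'important data\n' > "$dir/disk0"
cp "$dir/disk0" "$dir/disk1"
expected=$(block_sum "$dir/disk0")        # recorded at write time
printf 'importent data\n' > "$dir/disk0"  # simulate silent corruption
read_block "$dir/disk0" "$dir/disk1" "$expected"
rm -r "$dir"
```

The caller gets the good data on stdout and the repair is reported on stderr. The final branch is the non-redundant case: the mismatch is detected, but all the function can do is fail loudly.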

Snapshots: Instant Point-in-Time Copies

A ZFS snapshot is a read-only point-in-time copy of a dataset. Snapshots are essentially free to create - they take no additional space initially, because they share all their data with the live dataset. Space is only consumed as the live dataset changes and the snapshot needs to preserve the old blocks.

# Create a snapshot

zfs snapshot tank/jails/mailserver@before-upgrade

# Create a snapshot of all datasets under a hierarchy

zfs snapshot -r tank/jails@2026-03-13

# List snapshots

zfs list -t snapshot

# See how much space a snapshot is consuming

zfs list -t snapshot -o name,used,refer tank/jails/mailserver

Rolling Back

If something goes wrong, you can roll a dataset back to a snapshot instantly:

# Roll back to the snapshot (destroys all changes since the snapshot)

zfs rollback tank/jails/mailserver@before-upgrade

Note: zfs rollback can only roll back to the most recent snapshot. If you have multiple snapshots and want to go back further, you need to destroy the intermediate snapshots first (or use -r to do it automatically):

# Roll back, destroying intermediate snapshots

zfs rollback -r tank/jails/mailserver@before-upgrade

This makes snapshots invaluable for system upgrades. Snapshot before you upgrade, upgrade, test. If the upgrade broke something, roll back. The entire operation takes seconds, not hours of restoring from backup.
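
That snapshot, upgrade, test, roll back routine is easy to wrap in a function. A minimal sketch, assuming nothing else snapshots the dataset while the command runs (a newer snapshot would make a plain rollback refuse); run_with_snapshot and the snapshot naming scheme are my own invention:

```sh
# run_with_snapshot DATASET COMMAND [ARGS...]
# Snapshots DATASET, runs COMMAND, and rolls back if COMMAND fails.
run_with_snapshot() {
    dataset=$1; shift
    snap="${dataset}@pre-$(date +%Y%m%d-%H%M%S)"
    zfs snapshot "$snap" || return 1
    if "$@"; then
        echo "ok: keeping $snap as a restore point"
    else
        echo "command failed: rolling back to $snap" >&2
        zfs rollback "$snap"
        return 1
    fi
}

# Example (needs root and a real dataset):
#   run_with_snapshot tank/jails/mailserver jexec mailserver pkg upgrade -y
```

Keep in mind that the rollback discards everything written to the dataset since the snapshot, not just what the failed command changed, so this fits best on datasets that hold software rather than live user data.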

The Beauty of /.zfs: Accessing Snapshots Directly

Here’s something that surprises people who are new to ZFS: every dataset has a hidden .zfs directory at its mount point. Inside it, under .zfs/snapshot/, every snapshot is accessible as a normal read-only directory tree.

# The .zfs directory is hidden from normal ls output

ls -la /tank/home/chris/.zfs/

total 0

dr-xr-xr-x 2 root wheel 2 Mar 13 19:00 .

drwxr-xr-x 5 chris chris 12 Mar 13 19:30 ..

drwxr-xr-x 5 chris chris 12 Mar 10 08:00 snapshot

# List all snapshots as directories

ls /tank/home/chris/.zfs/snapshot/

2026-03-10 2026-03-11 2026-03-12 2026-03-13

# Just... cd into a snapshot and find your file

cd /tank/home/chris/.zfs/snapshot/2026-03-12/

ls Documents/

# → there's the file you accidentally deleted yesterday

cp Documents/important-report.pdf /tank/home/chris/Documents/

No restore tools. No backup software. No waiting. You browse the snapshot like a normal directory, find the file you need, and copy it back. The .zfs directory doesn’t show up in normal ls output (it’s hidden by default), but you can access it directly by name. This means regular users can recover their own accidentally deleted files without root access or administrator intervention.

This is arguably ZFS’s most user-facing killer feature. With automated snapshots (see the sanoid section below), users have a continuously available time machine for their files.

The .zfs directory can be made visible in directory listings if you prefer:

zfs set snapdir=visible tank/home
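
When you don't remember which day the file disappeared, you can let the shell walk the snapshots for you. A small sketch, assuming date-style snapshot names like the ones above so that name order is also time order; find_in_snapshots is a hypothetical helper:

```sh
# find_in_snapshots MOUNTPOINT RELATIVE_PATH
# Prints the path of the file in every snapshot that still contains it.
# Snapshot directories are visited in name order, so date-based names
# such as 2026-03-12 come out oldest first.
find_in_snapshots() {
    mountpoint=$1 relpath=$2
    for snap in "$mountpoint"/.zfs/snapshot/*; do
        if [ -e "$snap/$relpath" ]; then
            echo "$snap/$relpath"
        fi
    done
}

# Example: newest surviving copy of a deleted file
#   find_in_snapshots /tank/home/chris Documents/important-report.pdf | tail -n 1
```

It only reads directories, so it works for unprivileged users on any dataset they can already access.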

ZFS Native Encryption

ZFS supports native dataset-level encryption, introduced in ZFS on Linux 0.8 and available on FreeBSD since 13.0 (OpenZFS 2.0). Encryption is per-dataset, transparent to applications, and managed entirely through ZFS commands. No separate tools, no dm-crypt, no GELI - just ZFS.

Creating Encrypted Datasets

Encryption is set at dataset creation time and cannot be added to an existing unencrypted dataset (you’d need to create a new encrypted dataset and move the data):

# Create an encrypted dataset with a passphrase

zfs create -o encryption=aes-256-gcm -o keylocation=prompt -o keyformat=passphrase tank/private

# Create an encrypted dataset with a key file

dd if=/dev/random of=/root/keys/tank-private.key bs=32 count=1

zfs create -o encryption=aes-256-gcm \

-o keylocation=file:///root/keys/tank-private.key \

-o keyformat=raw tank/secure
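
A raw key file has to be exactly 32 bytes, and it should not be readable by anyone but root. The sketch below wraps the dd step with both checks; make_raw_key is a made-up helper, it assumes the target directory already exists, and it reads /dev/urandom only so the same snippet also runs on non-FreeBSD systems.

```sh
# make_raw_key PATH
# Writes 32 random bytes to PATH with owner-only permissions and
# verifies the size, since keyformat=raw expects exactly 32 bytes.
make_raw_key() {
    keyfile=$1
    (
        umask 077
        dd if=/dev/urandom of="$keyfile" bs=32 count=1 2>/dev/null
    ) || return 1
    size=$(wc -c < "$keyfile" | tr -d ' ')
    if [ "$size" -ne 32 ]; then
        echo "expected 32 bytes in $keyfile, got $size" >&2
        return 1
    fi
    echo "wrote $size-byte key to $keyfile"
}

# Example (as root):  make_raw_key /root/keys/tank-private.key
```

The umask only applies when the file is newly created. If you overwrite an existing key file, check its permissions yourself.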

Child datasets inherit encryption from their parent. If tank/private is encrypted, tank/private/documents and tank/private/photos are automatically encrypted too, and they are unlocked by the same key (tank/private is their encryption root):

# These inherit encryption from tank/private

zfs create tank/private/documents

zfs create tank/private/photos

Key Management

When a system boots, encrypted datasets are not automatically mounted because ZFS doesn’t have the decryption key yet. You need to load the key before the dataset becomes accessible:

# Load the key for a single dataset

zfs load-key tank/private

# Load keys for all encrypted datasets

zfs load-key -a

# Mount after loading key

zfs mount tank/private

# Check encryption status

zfs get keystatus tank/private

NAME PROPERTY VALUE SOURCE

tank/private keystatus available -

For servers that need encrypted datasets available at boot without manual intervention, you can store the key file on a separate device - for example, a USB stick that stays plugged into the server but could be removed and secured independently:

# Key file on a separate USB device mounted at boot

zfs set keylocation=file:///mnt/usbkey/tank-private.key tank/private

If you store the key file on the boot pool itself, be aware that this provides no protection against physical theft of the drive - the thief gets both the encrypted data and the key to decrypt it. The only scenario where a same-disk key file helps is disk disposal or RMA, where you wipe or destroy the boot partition but send the data disks out with encrypted blocks a third party can’t read.

For meaningful data-at-rest protection, either keep the key on a physically separate device, or use passphrase-based keys and accept the manual unlock step at boot.

Changing Keys

You can change the encryption key (or switch between passphrase and key file) without re-encrypting the data:

# Change from key file to passphrase

zfs change-key -o keyformat=passphrase -o keylocation=prompt tank/private

# Change from passphrase to key file

zfs change-key -o keyformat=raw -o keylocation=file:///root/keys/new.key tank/private

This is a key-wrapping operation - the actual data encryption key doesn’t change, only the wrapper protecting it. It’s instantaneous regardless of dataset size.

Locking Datasets

When you’re done working with encrypted data, you can unload the key, making the dataset inaccessible until the key is loaded again:

# Unmount and unload the key

zfs unmount tank/private

zfs unload-key tank/private

ZFS Send/Recv: The Killer Feature for Backups

zfs send serializes a snapshot (or the difference between two snapshots) into a stream of bytes. zfs recv takes that stream and reconstructs the dataset on the other end. This is ZFS’s native backup and replication mechanism, and it’s remarkably powerful.

Basic Send/Recv

# Send a full snapshot to a file

zfs send tank/jails/mailserver@2026-03-13 > /backup/mailserver-2026-03-13.zfs

# Receive it into a different pool

zfs recv backup/jails/mailserver < /backup/mailserver-2026-03-13.zfs

Incremental Sends: Only the Changes

The real power is incremental sends. After the initial full send, subsequent sends only transfer the blocks that changed between two snapshots:

# First: full send of the initial snapshot

zfs send tank/jails/mailserver@monday | \

zfs recv backup/jails/mailserver

# Later: incremental send (only changes since Monday)

zfs send -i tank/jails/mailserver@monday tank/jails/mailserver@tuesday | \

zfs recv backup/jails/mailserver

The -i flag tells ZFS to send only the delta between the two snapshots. On a 500GB dataset where 2GB changed since the last snapshot, the incremental send transfers ~2GB, not 500GB. This makes daily off-site backups of large datasets entirely practical, even over modest network links.
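
To put numbers on "modest network links", here is the transfer-time arithmetic for that example. It is a rough sketch in decimal units that ignores SSH and protocol overhead and stream compression; transfer_seconds is a hypothetical helper and the 100 Mbit/s link is an assumed figure.

```sh
# transfer_seconds SIZE_GB LINK_MBIT
# Decimal units (1 GB = 8000 megabits); protocol overhead is ignored.
transfer_seconds() {
    echo $(( $1 * 8000 / $2 ))
}

echo "full send, 500GB:      $(transfer_seconds 500 100)s"
echo "incremental send, 2GB: $(transfer_seconds 2 100)s"
```

That is roughly 11 hours for the full send against under 3 minutes for the incremental. Before a real transfer, zfs send -nv with the same arguments does a dry run and prints an estimated stream size without sending anything.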

Send/Recv Over SSH

For remote backups, pipe through SSH:

# Send to a remote backup server

zfs send -i tank/jails/mailserver@monday tank/jails/mailserver@tuesday | \

ssh backup-server zfs recv -o mountpoint=none -o canmount=off backup/jails/mailserver

# For initial full replication with all properties

zfs send -R tank/jails/mailserver@tuesday | \

ssh backup-server zfs recv -F -o mountpoint=none -o canmount=off backup/jails/mailserver

The -F flag on the receiving end forces a rollback to match the incoming stream, discarding any local changes on the backup target. This ensures your backup remains an exact replica of the source - but be careful not to use -F against a dataset that contains data you care about independently, as any local modifications will be destroyed.

The -o mountpoint=none -o canmount=off flags are equally important: without them, the backup server will try to mount the incoming datasets at their original mount points. If you’re replicating a dataset with mountpoint=/etc or mountpoint=/var/mail, the backup server would mount it right over its own live directories. Setting these overrides ensures received datasets are stored but never auto-mounted.

Raw Sends for Encrypted Datasets

When sending encrypted datasets, you can choose whether to send the data encrypted or decrypted:

# Raw send: data stays encrypted. Receiver doesn't need the key.

zfs send --raw tank/private@2026-03-13 | \

ssh backup-server zfs recv backup/private

# Normal send: data is decrypted, then re-encrypted (or stored plain) at the receiver.

zfs send tank/private@2026-03-13 | \

ssh backup-server zfs recv backup/private

Raw sends (--raw) are essential for secure off-site backups. The backup server receives and stores encrypted data without ever having the decryption key. If the backup server is compromised, the attacker gets encrypted blocks they can’t read. One practical caveat: the receiving pool never needs the key loaded, but it does need the encryption feature enabled, so the backup server must run an OpenZFS version that supports native encryption. For targets that can’t (older servers, plain cloud object storage), redirect the raw stream to a file instead of piping it into zfs recv; the file stays encrypted, though it can only be restored onto a pool with encryption support.

Sanoid and Syncoid: Automated Snapshot Management

Manually creating snapshots and running zfs send works, but it doesn’t scale. Sanoid automates snapshot creation and retention, and its companion tool syncoid automates zfs send/recv replication.

Sanoid is available in FreeBSD’s package repository:

pkg install sanoid

Sanoid uses a configuration file at /usr/local/etc/sanoid/sanoid.conf to define snapshot policies:

[tank/jails]

use_template = production

recursive = yes

[tank/home]

use_template = production

recursive = yes

[tank/postgresql]

use_template = production

[template_production]

hourly = 24

daily = 30

monthly = 12

yearly = 2

autosnap = yes

autoprune = yes

This keeps 24 hourly, 30 daily, 12 monthly, and 2 yearly snapshots - all managed automatically. Old snapshots are pruned when they exceed the retention count. Run sanoid --cron from a cron job (typically every 15 minutes), and snapshot management becomes a solved problem.
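
The pruning idea itself is simple: sort the snapshots, keep the newest N, destroy the rest. The sketch below illustrates the principle only and is not how sanoid is implemented; the snapshot names are made up and assumed to sort chronologically.

```sh
# prune_candidates KEEP
# Reads snapshot names (one per line) on stdin and prints the ones that
# fall outside the newest KEEP. Assumes names sort chronologically.
prune_candidates() {
    keep=$1
    sort -r | tail -n +"$((keep + 1))" | sort
}

# Made-up daily snapshot names; keep the newest two.
prune_candidates 2 <<'EOF'
tank/home@daily-2026-03-10
tank/home@daily-2026-03-11
tank/home@daily-2026-03-12
tank/home@daily-2026-03-13
EOF
```

The demo prints the two oldest snapshots, which are the ones a retention count of 2 would remove. Sanoid applies the same kind of rule separately to each of the hourly, daily, monthly, and yearly series.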

Syncoid handles the replication side:

# Replicate a dataset to a remote server

syncoid tank/jails/mailserver backup-server:backup/jails/mailserver

# Replicate recursively (all child datasets)

syncoid -r tank/jails backup-server:backup/jails

# Use raw mode for encrypted datasets

syncoid --sendoptions="w" tank/private backup-server:backup/private

Syncoid automatically determines whether a full or incremental send is needed, creates temporary snapshots for consistency, handles the zfs send | ssh | zfs recv pipeline, and cleans up after itself. A single cron entry running syncoid gives you continuous off-site replication with minimal bandwidth usage.

A typical backup cron setup (shown here as root’s personal crontab via crontab -e; if you add these to /etc/crontab instead, add root as the user field after the time specification):

# Sanoid: manage local snapshots every 15 minutes

*/15 * * * * /usr/local/bin/sanoid --cron

# Syncoid: replicate to backup server daily at 2 AM

0 2 * * * /usr/local/bin/syncoid -r tank backup-server:backup/tank

Performance Tuning: ARC and L2ARC

ZFS uses a sophisticated caching system called the ARC (Adaptive Replacement Cache). The ARC lives in RAM and caches recently and frequently accessed data. Unlike traditional filesystem caches, the ARC uses an algorithm that balances recency and frequency, keeping both “recently used” and “frequently used” data cached.

On FreeBSD, ZFS is greedy by default - it will automatically use most of your system’s RAM for the ARC, leaving only a small reserve for the kernel. On a dedicated file server, this is exactly what you want and no tuning is needed. But if you’re running memory-hungry jails on the same machine (PostgreSQL, application runtimes), you need to restrict the ARC so it doesn’t starve your applications:

# Check current ARC size

sysctl kstat.zfs.misc.arcstats.size

# Restrict ARC to 8GB on a jail host (in /boot/loader.conf)

# Leave the rest for jails and applications

vfs.zfs.arc_max="8589934592"
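
The tunable takes a byte count, which is easy to get wrong by a factor of 1024. A tiny sketch for generating the line (arc_max_line is a made-up helper and assumes a shell with 64-bit arithmetic):

```sh
# arc_max_line SIZE_GIB
# Prints a loader.conf line capping the ARC at SIZE_GIB gibibytes.
arc_max_line() {
    echo "vfs.zfs.arc_max=\"$(( $1 * 1024 * 1024 * 1024 ))\""
}

arc_max_line 8
```

How much to leave for the ARC is a judgment call: add up what your jails and databases need at peak first, then give ZFS what remains.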

For systems with an available SSD, L2ARC extends the cache to SSD storage. It’s a second-level cache: data evicted from the RAM-based ARC gets written to the L2ARC device, giving you a much larger effective cache:

# Add an SSD as L2ARC

zpool add tank cache nvd1

L2ARC is most effective when your working set is larger than available RAM but fits on an SSD. For read-heavy workloads on spinning disks (databases, mail servers), an L2ARC can provide dramatic performance improvements.

Warning: L2ARC isn’t free. Every block cached in L2ARC requires an index pointer in your primary ARC (RAM). If you add a massive 1TB NVMe as L2ARC to a system with limited RAM, the pointer overhead will starve the primary ARC and actually degrade overall performance. Size your L2ARC proportionally to your available RAM - not to the size of the SSD you happen to have lying around.
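
How bad the overhead gets depends heavily on block size, which a quick estimate makes obvious. This is a back-of-the-envelope sketch: l2arc_header_mib is a hypothetical helper, and the 96 bytes of RAM per cached block is an assumption (the real figure varies between OpenZFS versions), so pass your own value as the third argument if you know it.

```sh
# l2arc_header_mib L2ARC_GIB AVG_BLOCK_KIB [HEADER_BYTES]
# HEADER_BYTES defaults to 96, an assumed per-block header size.
l2arc_header_mib() {
    l2arc_gib=$1 block_kib=$2 header_bytes=${3:-96}
    blocks=$(( l2arc_gib * 1024 * 1024 / block_kib ))
    echo $(( blocks * header_bytes / 1024 / 1024 ))
}

echo "1TiB L2ARC, 128K blocks: $(l2arc_header_mib 1024 128) MiB of ARC"
echo "1TiB L2ARC, 8K blocks:   $(l2arc_header_mib 1024 8) MiB of ARC"
```

Under these assumptions a 1TiB cache full of 128K records costs well under 1GiB of RAM, while the same cache full of 8K database records costs 12GiB. Small-record workloads are where an oversized L2ARC hurts.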

Similarly, a SLOG (Separate Log) device accelerates synchronous writes by providing a fast storage target for the ZFS Intent Log (ZIL):

# Add an SSD as SLOG

zpool add tank log nvd2

This is primarily useful for NFS servers, databases with fsync-heavy workloads, and iSCSI targets where synchronous write performance matters.

ZFS and Jails: Better Together

If you read the first article in this series, you know that Jails and ZFS are a natural pairing. Each jail gets its own dataset, which means:

- Independent snapshots: Snapshot a jail before upgrading its packages. Roll back if something breaks. No impact on other jails.

- Instant cloning: Want a test copy of your production mail jail? zfs clone creates one in seconds, sharing data with the original until divergence.

- Per-jail quotas: Prevent one jail from consuming all available storage.

- Independent compression and recordsize: A jail running PostgreSQL gets recordsize=8k. A jail serving static files gets recordsize=1M. Each workload gets the optimal setting.

# Snapshot a jail before upgrade

zfs snapshot tank/jails/mailserver@pre-upgrade

# Clone a jail for testing

zfs clone tank/jails/mailserver@pre-upgrade tank/jails/mailserver-test

# Set per-jail storage quota

zfs set quota=20G tank/jails/webmail

# Tune recordsize per workload

zfs set recordsize=8k tank/jails/database

Cloning is particularly powerful. A clone is a writable snapshot - it starts sharing all data with the original and only consumes space as you make changes. Standing up a test environment from production data takes seconds, not hours.

Common Pitfalls

Not setting recordsize before importing data: Changing recordsize only affects new writes. If you import a PostgreSQL database and then set recordsize=8k, the existing data keeps the old block size. Set it first.

Forgetting to scrub: ZFS can only repair corruption it knows about. Without regular scrubs, corruption sits undetected until something tries to read the affected block - possibly months later when the redundant copy has also degraded. Scrub weekly.

Letting the pool get too full: ZFS performance degrades as the pool fills up, particularly above 80% capacity. This is because ZFS uses a copy-on-write allocation strategy that becomes increasingly constrained as free space fragments. Monitor pool usage and expand before you hit 80%.
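
A capacity check is easy to script around zpool list. The sketch below assumes the tab-separated name and capacity columns that zpool list -H -o name,capacity prints; pools_over is a made-up helper and the values in the demo are invented.

```sh
# pools_over THRESHOLD_PERCENT
# Reads "name<TAB>capacity%" lines on stdin, as printed by
# `zpool list -H -o name,capacity`, and prints pools at or above the threshold.
pools_over() {
    awk -v limit="$1" '{
        pct = $2
        sub(/%$/, "", pct)
        if (pct + 0 >= limit + 0) printf "%s is %d%% full\n", $1, pct
    }'
}

# Real use (needs a pool):  zpool list -H -o name,capacity | pools_over 80
# Demo on made-up values:
printf 'tank\t83%%\nbackup\t41%%\n' | pools_over 80
```

Run it from cron and mail yourself any output, and you'll hear about a filling pool before performance tells you.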

Using dedup without understanding the cost: ZFS supports block-level deduplication, but it requires enormous amounts of RAM for the deduplication table (roughly 5GB of RAM per TB of deduplicated storage). Unless you have a specific, measured use case and the RAM to support it, don’t enable dedup. Use compression instead - it’s nearly free.
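
That rule of thumb falls out of a simple estimate: one dedup table entry per unique block. A rough sketch, where dedup_ram_mib is a hypothetical helper and the 320 bytes per entry is a commonly quoted estimate that I'm treating as an assumption:

```sh
# dedup_ram_mib DATA_GIB AVG_BLOCK_KIB [ENTRY_BYTES]
# ENTRY_BYTES defaults to 320, a commonly quoted per-entry estimate.
dedup_ram_mib() {
    data_gib=$1 block_kib=$2 entry_bytes=${3:-320}
    entries=$(( data_gib * 1024 * 1024 / block_kib ))
    echo $(( entries * entry_bytes / 1024 / 1024 ))
}

echo "1TiB at 64K average blocks:  $(dedup_ram_mib 1024 64) MiB"
echo "1TiB at 128K average blocks: $(dedup_ram_mib 1024 128) MiB"
```

At a 64K average block size this lands on the 5GB-per-TB figure. Smaller blocks make it worse in direct proportion.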

Not using raw send for encrypted backups: If you zfs send an encrypted dataset without --raw, the data is decrypted for the send and arrives unencrypted at the receiver, unless it is received beneath a dataset that is itself encrypted. This might be what you want, or it might defeat the entire purpose of encrypting it. Be intentional about which mode you use.

Conclusion

ZFS replaces an entire stack of tools - filesystem, volume manager, RAID, backup - with a single system that checksums everything, heals itself, compresses transparently, encrypts natively, and replicates efficiently. It’s the foundation that makes FreeBSD’s jail-based architecture practical at scale: each jail gets its own dataset with its own properties, snapshots, and quotas.

The features that matter most in practice aren’t the flashy ones. It’s the scrub that catches a degrading disk before data loss. It’s the .zfs directory that lets a user recover a deleted file without filing a ticket. It’s the incremental send that replicates 500GB of jail data by transferring 2GB of changes. These are the things that let you sleep at night.

If you take nothing else away from this article: enable compression, schedule scrubs, automate snapshots with sanoid, and replicate off-site with syncoid. That’s a complete data management strategy in four decisions.

References

- FreeBSD Handbook - The Z File System

- zpool(8) man page

- zfs(8) man page

- OpenZFS Documentation

- Sanoid/Syncoid - Policy-driven snapshot management

- ZFS 101 - Understanding ZFS storage and performance (Ars Technica)

- Jeff Bonwick - ZFS: The Last Word in Filesystems - the original Sun Microsystems presentation
